Geospatial technologies such as global positioning systems and geographic information systems infiltrate many people’s daily lives – General Motors’ OnStar, Apple’s iPhone, Yahoo and Google maps, and the meteorological maps that display the local weather forecast are just a few. Due to the influx of new technology and the roles of these technologies in the world, many scholars in the fields of educational technology and teacher education have called for increased and meaningful integration of technology in schools and classrooms. Specifically, many social studies researchers have noted the potential for using geospatial technologies in order to increase student motivation and engagement (e.g., Heafner, 2004; Keiper, 1999), although actual integration has fallen short of expectations (Doering, Veletsianos, & Scharber, 2008; Whitworth & Berson, 2003).

Technology integration within social studies teacher preparation and education programs has also lagged behind expectations (Mehlinger & Powers, 2002). In response, our design and research team has developed GeoThentic, an online teaching and learning environment for K-12 geography teachers and students focused on real-world issues (e.g., global warming), content-specific technologies (e.g., Google Earth), and appropriate pedagogies (e.g., problem-based learning), grounded in and designed using the technology, pedagogy, and content knowledge (TPACK) framework. This manuscript describes the evolution of the GeoThentic learning environment, how GeoThentic was designed using TPACK, and how teacher TPACK assessment models have been designed and integrated within the environment to assist social studies teaching and learning.

Literature Review

TPACK Defined

Teaching is commonly described as both an art and a science (Eisner, 1994; Schön, 1987), a description that has implications for how a person studies or learns how to become a teacher. Due to the complex nature of teaching and learning, many scholars and educators have attempted to identify the things teachers need to know. Shulman (1986, 1987) put forth one of the most accepted theories of teacher knowledge that encapsulates both art and science conceptualizations. He described the know-how of teachers as pedagogical content knowledge:

…that special amalgam of content and pedagogy that is uniquely the province of teachers, their own special form of professional understanding…it represents the blending of content and pedagogy into an understanding of how particular topics, problems, or issues are organized, represented, and adapted to the diverse interests and abilities of learners, and presented for instruction. Pedagogical content knowledge is the category most likely to distinguish the understanding of the content specialist from that of a pedagogue. (1987, p. 8)

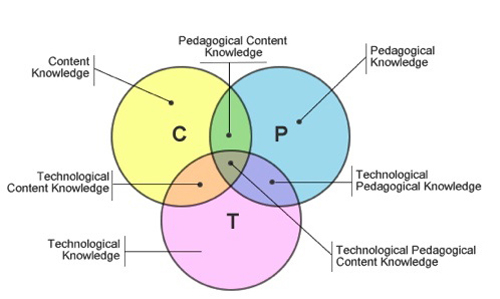

Other scholars in the fields of educational technology and teacher education have extended Shulman’s ideas about teacher knowledge by including a third component – technological knowledge (Hughes, 2000, 2004; Niess, 2005). Mishra and Koehler (2006) formally introduced the union of these three different types of knowledge as representative of what teachers need to know, coining the combined framework “technological pedagogical content knowledge” (TPCK), which was later renamed TPACK (see Figure 1).

Essentially, TPACK consists of the negotiation of and synergy between three forms of knowledge (Mishra & Koehler, 2006, 2008; Thompson & Mishra, 2007-2008). Although the TPACK framework appears simple both in text and diagram forms, it is fairly complex to grasp. Cox (2008) identified 89 versions of TPACK definitions that attempted to tease out and capture the complexities inherent within the teacher knowledge framework. After considering the plethora of TPACK descriptions, Cox offered an “expansive” definition for TPACK:

…knowledge of the dynamic, transactional negotiation among technology, pedagogy, and content and how that negotiation impacts student learning in a classroom context. The essential features are (a) the use of appropriate technology (b) in a particular content area (c) as part of a pedagogical strategy (d) within a given educational context (e) to develop students’ knowledge of a particular topic or meet an educational objective or student need. (p. 40)

In this manuscript, this definition represents our understanding of TPACK.

Figure 1. Technological pedagogical content knowledge (Mishra & Koehler, 2006)

Since its formal introduction in 2006, TPACK as a theoretical concept has been embraced by technology integration communities (e.g., Society for Information Technology and Teacher Education; see http://site.aace.org/conf/) who have long struggled to define, explain, and stress the role of technology within the field of education (Doering, Veletsianos, & Scharber, 2007; Thompson & Mishra, 2007). Scholars who study the impact of technology on teaching and learning across content areas understand that the technology itself, as well as the nature of pedagogy and the meaning of learning, all change continually.

Indeed, although technology integration is a complex and “wicked” problem (Mishra & Koehler, 2006), TPACK offers a useful framework to aid communication with non-technology-focused audiences about the necessity of including technology knowledge as a component of teacher knowledge. In addition, TPACK offers the fields of educational technology and teacher education a research framework for guiding pre- and in-service teachers’ knowledge assessment and development as well as technology integration in their classrooms (Doering, Veletsianos, & Scharber, 2007; Hughes & Scharber, 2008; Thompson et al., 2008).

However, despite the framework’s potential usefulness, TPACK should be a temporary construct (Hughes & Scharber, 2008). As technology becomes entwined in classrooms and schools, it will become braided into pedagogical knowledge, content knowledge, and pedagogical content knowledge such that the focus on technology will no longer be needed.

TPACK in the Social Studies

Due to the role of content knowledge in teaching, the call to describe more concretely what TPACK looks like in action and the need to develop assessments to measure and develop TPACK, scholars are beginning to consider TPACK within various content areas (e.g., AACTE Committee on Innovation and Technology, 2008), including social studies.

Historically, the social studies field has been slow to incorporate technology into its teaching and learning. Over 10 years ago Martorella (1997) referred to technology in social studies education as “the sleeping giant” whose potential had not yet been realized. Today, social studies teaching and learning is still dominated by traditional pedagogical practices that are primarily teacher centered, with technology, for the most part, still not being used in transformative ways, if at all (e.g., Bednarz & van der Schee, 2006; Cuban, 2001, 2008; Doering, Veletsianos, & Scharber, 2007; Lee, 2008; Swan & Hofer, 2008). Indeed, research on technology integration in social studies classrooms continues to be minimal and in its “adolescence” (Berson & Bayata, 2004; Doering, Veletsianos, & Scharber, 2007; Friedman & Hicks, 2006; Ross, 2000; Swan & Hofer, 2008).

Despite epistemological resistance from teachers and slow starts in the field of social studies education, there may be renewed interest in and even evolving viewpoints toward technology and the social studies (Swan & Hofer, 2008; Tally, 2007). For example, Theory and Research in Social Education dedicated a special issue to technology in 2007, with the previous special issue dating back to 2000; national social studies and geography standards make explicit reference to technology; the National Council for the Social Studies (2006) recently offered a position statement on technology; and several recent books illustrate the continued growth in the field (e.g., Bennett & Berson, 2007; Doering & Veletsianos, 2007b).

Focus on Geography

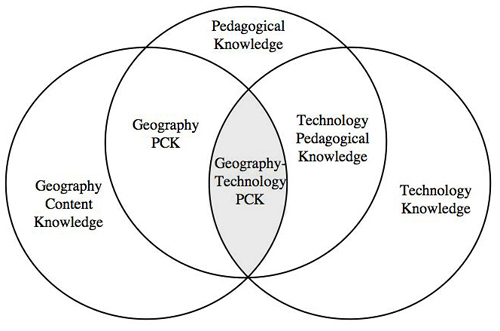

Our specific interest within social studies education is geography. In keeping with the current trajectories of TPACK research, Doering and Veletsianos (2007b) identified geography technological pedagogical content knowledge (see Figure 2) as a necessary component for teacher education programs to focus on in order to facilitate increased integration of geospatial technologies (e.g., Google Earth) into K-12 classrooms. Additionally, Doering, Veletsianos, Scharber, and Miller (in press) have done work in a geography-focused learning environment and in professional development grounded in TPACK.

Figure 2. Geographic technological pedagogical content knowledge (Doering & Veletsianos, 2007b).

Next Steps With TPACK

Although the TPACK framework offers a theoretical explanation for teacher knowledge, challenges remain, including the identification of ways to develop, assess, and measure TPACK. Research is now beginning to address these challenges. For example, Koehler, Mishra, and Yahya (2007) documented how iterative the development of TPACK can be in their investigation of college faculty working with master’s students in developing online courses. These researchers noted that over the course of the seminar, faculty moved from considering the TPACK constructs separately toward a more complex understanding of the nuanced interplay between the TPACK constructs. In previous work, Doering, Veletsianos, Scharber, and Miller (in press) have explored how to use TPACK within professional development for an online learning environment grounded in TPACK. During the professional development meeting, teachers were encouraged to be self-reflective and metacognitive about their knowledge by explicitly thinking about their TPACK and mapping it onto the TPACK Venn diagram. This activity was completed outside of the teaching/learning environment using paper and pencil.

This article takes our research a step further and offers an example of how the TPACK framework is currently being used within the same online geography environment for self-assessment purposes. However, in this iteration, pre- and in-service teachers reflected on the distinct yet synergistic components of the strengths and weaknesses of their teaching knowledge by using an online visual representation of TPACK, as well as additional online assessment tools described later in this manuscript.

Online Geographic Environment Evolution: MSE to LGGT to GeoThentic

Overview

Current approaches to teaching geospatial technologies (GST) in K-12 classrooms have been ineffective as a result of inadequate GST training with both in-service and preservice teachers and an absence of development in pedagogical models for teaching GST (Bednarz & Audet, 2003; Doering, 2004; Doering & Veletsianos, 2007a; Sanders, Kajs, & Crawford, 2001). In an attempt to enhance the teaching, use, and implementation of GST in the K-12 classroom, our research team has been engaged in a 3-year design-based research process to develop and disseminate an online GST learning environment, the latest iteration of which is entitled GeoThentic.

GeoThentic is the primary focus of this article; however, it is helpful to provide an overview of the evolution of this environment and the iterative nature of its successive designs. In the following sections, the methodology of design-based research and each of the learning environments is briefly described.

Design-Based Research

We utilize a methodology of design-based research (DBR) to examine how the theoretical foundation of the GeoThentic project has been enhanced through continuous iterations of design, implementation, and research. DBR focuses on complex problems, such as teaching and learning with technology. Such problems are best examined through an investigation of and immersion in the natural context and stimuli, as opposed to engagement with laboratory investigations that are controlled and artificial (Design-Based Research Collective, 2003; Lavie & Tractinsky, 2004; Reeves, Herrington, & Oliver, 2004).

DBR is often achieved through collaboration among practitioners, researchers, and users over extended periods of time (Reeves et al., 2004), and attempts to clarify relationships between practice, theory, and design (Design-Based Research Collective, 2003). In recent years, DBR has become an appealing methodology for instructional designers interested in transforming and enhancing the learner experience through interventions grounded in actual classroom situations (Sandoval, 2004).

The technological and theoretical foundations of the GeoThentic learning environment stem from a 3-year, iterative evolution of pedagogical objectives, contemporary design approaches, and research-based classroom implementation. Through these iterative cycles the primary focus of the project has shifted from providing a selection of simple technology scaffolds to support learners in online geography education (multi-scaffolding environment, or MSE), toward providing TPACK development for teachers and learners through on-demand, synced-scaffolds (i.e., interactive supportive technologies working collectively) in a collaborative online learning environment (GeoThentic). In other words, early design implementations focused on individual, component-like tools for the learner, whereas current designs are focused on the interplay of synced-scaffolds to foster knowledge development for both learner and instructor.

Teacher insights and input were deliberately solicited, considered, and utilized in the design and development processes of these geographic online learning environments as they progressed. In summer 2006, the environment’s developers received input from 22 in-service teachers about the first generation of the environment. Then, during spring 2007, eight teachers gave input into the next generation of the environment during a 1-day workshop where they engaged with a prototype of the environment. After changes had been made, these teachers then utilized it in their classrooms for an entire semester, providing further input into design and development.

Building on the work of the previous iterations, the third generation of this learning environment, GeoThentic, was funded by the National Geographic Society in 2007. Two iterative cycles were written within this grant for in-service teachers’ input. The first cycle occurred in spring 2008; the second cycle occurred in spring 2009. Both cycles consisted of a 1-day workshop where teachers from the Minnesota Alliance for Geographic Education (MAGE) used the GeoThentic environment and provided feedback to the designers. The MAGE teachers then used the GeoThentic environment within their classrooms in order to provide formative and summative feedback on the impacts of student use with the situated movies, screen capture videos, intelligent agent, and chat scaffolds.

Additionally, during the second cycle, implications for refinement of the teachers’ TPACK development components and grade book functionality that comprise the instructor platform of the environment were also gathered. Methods for data collection across these three environments included large group discussion, teacher and student focus groups, one-to-one feedback from instructors, personal interviews, user observations, user back-end software tracking, and Apple PhotoBooth reflections. Teacher and student data allowed for emergent and persistent themes to guide and granulate future iterations of the learning environment.

Multi-Scaffolding Environment

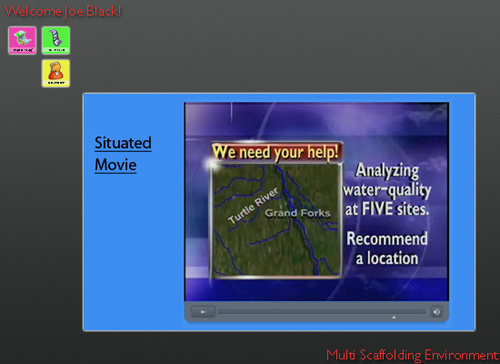

The MSE represented our first attempt at developing a pedagogical model of learning with GST (Doering & Veletsianos, 2007a). MSE provided opportunities for students to learn geography with GST by solving authentic and complex problems in an online environment. The online environment MSE was created around four scaffolds designed to assist learners through a learning experience: a situated movie, a screen-capture video, a conversational agent, and a collaboration zone (Doering & Veletsianos, 2007a). Based on theories of situated learning (Lave & Wenger, 1991) and anchor-based instruction (Cognition and Technology Group at Vanderbilt, 1990), the heart of MSE was the scaffolds, providing on-demand assistance to the learners:

- The situated movie announced, situated, and gave pertinent data about the real-world problem in an authentic context using real-world video clips and situations.

- Screen-capture videos demonstrated how to use a geographic information system (GIS) as an expert to solve the authentic problems posed in the situated video. The screen-capture videos were equivalent to having an expert instructor showcasing the procedural steps of using a GIS.

- A conversational agent, an artificial intelligence avatar, represented expert knowledge as data were extracted from the database when conversing with a learner. These responses from the avatar to the learner were developed based on questions learners had posed when solving the specific problem presented within the situated movie.

- The collaboration zone allowed learners to discuss the problems that were being solved with the GIS with other learners within the environment, situating the conversations in a social context and allowing learners to interact and negotiate meaning (as recommended by Vygotsky, 1978).

Users could click one of the four icons in the upper left-hand corner to open a situated movie, a screen-capture video, a collaboration area, or assistance via a pedagogical agent while they were solving the geographical tasks within Google Earth (see Figure 3).

Figure 3. “Situated Movie” scaffold in the Multi-Scaffolding Environment.

The incorporation of integrated scaffolds for learners was based on a study of three online pedagogical models for integrating GST in preservice teacher education courses (Doering, 2004), which found that learning geography with GST is best accomplished through the use of multiple scaffolds and guidance in a structured problem-solving environment. Moreover, it has been noted that although GST is the one technology that can assist students in meeting all of the National Geography Standards (Audet & Paris, 1995), its integration within K-12 classrooms has not been effective because of inadequate training of teachers, a lack of pedagogical teaching models, and the failure of preservice teacher education programs to teach GST in authentic ways (Sanders et al., 2001).

The MSE project was still in its infancy when first used in the summer of 2006. At that time our research team prototyped the learning environment with 22 preservice teachers. Data gathered from this initial round of examination led to an updated version of the learning environment coined by our team as Learning Geography Through Geospatial Technologies (LGGT).

LGGT

MSE evolved into the LGGT environment, which encompassed two primary components: (a) a student environment that assisted learners in solving geographic problems, and (b) a teacher environment focusing on the development of instructors’ TPACK (see Figure 4). Although components of LGGT supported the teachers’ TPACK, it was not explicitly provided in this language to teachers within the learning environment, nor was it in the design team’s iterative processes. Much like MSE, LGGT provided four student scaffolds, but the scaffolds were developed to be more detailed to specific lesson plans that also accompanied the environment.

Moreover, a teacher side was added to the environment, providing teachers with the same scaffolds as the students in addition to lesson plans and handouts. Specifically, the student and teacher environments provided on-demand support to their intended audiences. Within the student environment, learners had access to scaffolds at any time as they solved authentic geographic problems. In parallel, the teacher environment provided instructors with assistance on how to teach geography using geospatial technologies.

During spring 2007, eight in-service teachers provided input and feedback on the design of LGGT during a meeting in which they utilized the environment. After the teachers’ recommendations were incorporated in the environment, these teachers, along with more than 600 of their students, utilized LGGT for one semester.

Based on interviews with teachers and students, focus groups with students, and quasi-experimental data on the use of LGGT, the learning environment underwent yet another redesign. However, unlike MSE and LGGT, which were funded by small internal grants from the University of Minnesota, the learning environment was now funded and supported through the National Geographic Society.

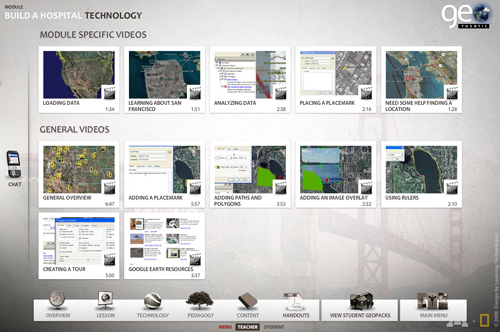

Figure 4. “Screen Capture” Scaffold: Teacher-side within the Learning Geography through Geospatial Technologies Environment.

GeoThentic

GeoThentic, the current generation of the learning environment, assists teachers and students in teaching and learning geography using geospatial technologies (e.g., Google Earth) through scaffolding, curricula, and TPACK assessment models (see http://geothentic.umn.edu). Similar to LGGT, the GeoThentic online environment has two interfaces: (a) a student interface that students use when solving problems with geospatial technologies (see Figure 5), and (b) a teacher interface that teachers use to prepare for teaching a module and to assess their TPACK (see Figure 6). The explicit introduction of the TPACK framework to teachers and its prominence in the menu items within GeoThentic are what make GeoThentic unique.

Figure 5. Student side: “Situated Movie” in the GeoThentic learning environment

Figure 6. Teacher side: “Technology Knowledge” in the GeoThentic learning environment

Student Interface

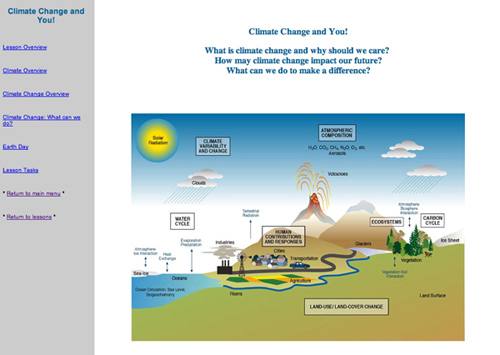

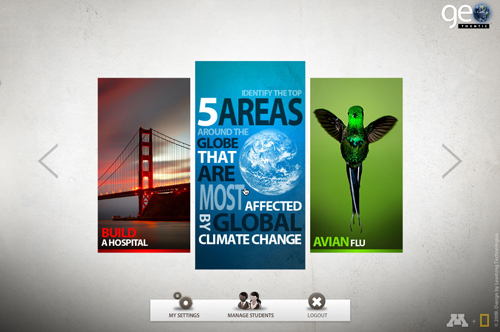

Designed on the premise of providing cognitive apprenticeship (Collins, Brown, & Newman, 1989) by situating learning within an authentic setting, GeoThentic creates opportunities for students to learn with geospatial technologies by solving authentic complex problems within an online environment. These authentic settings are currently represented throughout five modules available in the environment (see Figure 7 and Table 1).

For example, in the San Francisco Hospital module, students analyze layers of socioscientific data (e.g., seismic activity, population density, existing locations of hospitals, etc.) to identify the best location to build a new hospital. To support cognitive apprenticeship, scaffolding and features of coaching are used to assist learners (as identified by Enkenberg, 2001). Expert guidance and peer collaboration are both available through GeoThentic; however, GeoThentic differs from traditional scaffolding by providing inherent and gradual withdrawal of support. All scaffolds are available at all times and the selection and use of individual scaffolds is dependent on the learner.

Figure 7. “Select a Module” screen in the GeoThentic learning environment.

Table 1

GeoThentic Module Overview

| Modules | Geospatial Activity | Module-Specific Teacher Scaffolds | Module-Specific Student Scaffolds |
| --- | --- | --- | --- |
| San Francisco: Where is the best place to build a hospital in San Francisco? | Using multiple data layers within Google Earth, students identify and justify the best place to build a new hospital within the San Francisco region. | Lesson Overview, Lesson Plans, Technology Knowledge, Content Knowledge, Pedagogical Knowledge, Lesson Resources | Situated Movie, Screen-Capture Videos, Chat, Expert Guidance |
| Global Climate Change: What are the top five places in the world being impacted by climate change? | Using multiple data layers within Google Earth, students identify, rank, and justify the top 5 of 10 locations throughout the world impacted by climate change. | Lesson Overview, Lesson Plans, Technology Knowledge, Content Knowledge, Pedagogical Knowledge, Lesson Resources | Situated Movie, Screen-Capture Videos, Chat, Expert Guidance |
| Avian Flu: What are the top three places in the world being impacted by the avian flu? | Using multiple data layers within Google Earth, students identify, rank, and justify the top 3 of 10 locations throughout the world impacted by the avian flu. | Lesson Overview, Lesson Plans, Technology Knowledge, Content Knowledge, Pedagogical Knowledge, Lesson Resources | Situated Movie, Screen-Capture Videos, Chat, Expert Guidance |
| Football Stadium: Where is the best place to build a new football stadium? | Using multiple data layers within Google Earth, students identify and justify the best location to build a new football stadium within the United States. | Lesson Overview, Lesson Plans, Technology Knowledge, Content Knowledge, Pedagogical Knowledge, Lesson Resources | Situated Movie, Screen-Capture Videos, Chat, Expert Guidance |
| Population Density: What is the location in North Dakota that is most impacted by emigration? | Using multiple data layers within Google Earth, students identify and justify the location in North Dakota that is most impacted by emigration. | Lesson Overview, Lesson Plans, Technology Knowledge, Content Knowledge, Pedagogical Knowledge, Lesson Resources | Situated Movie, Screen-Capture Videos, Chat, Expert Guidance |

Teacher Interface

The GeoThentic teacher interface is designed to assist instructors with limited background knowledge in teaching geography through the use of geospatial technologies. Unlike MSE and LGGT, TPACK explicitly drives the design of the teacher interface within GeoThentic. The design team believes in the power and clarity of the TPACK framework in communicating with teachers why technology, especially GIS, should be integrated into geography teaching and learning. Therefore, each scaffold within the environment was designed specifically to address the technological, pedagogical, and content knowledge domains of each module.

For example, before teaching the module on global climate change, teachers are scaffolded with content knowledge – a guided curriculum with resources and background information; pedagogical knowledge – three pedagogical models for integrating the module within their classroom; and technological knowledge – screen-capture videos that describe procedural knowledge on how to use the geospatial technologies and assist students with technology problems that may arise. Finally, the teacher interface also includes three interactive TPACK assessment models, which are described next.

TPACK Assessment Models

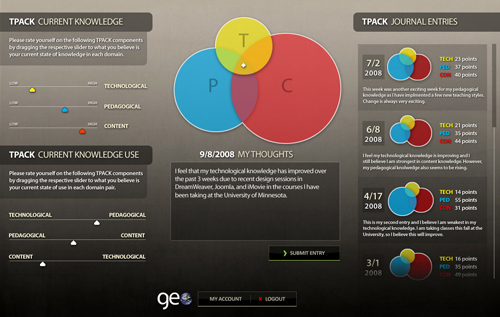

Parallel to providing teachers with TPACK scaffolds, GeoThentic provides instructors with three TPACK interactive assessment models, an innovative design component in the realms of online instructional software and TPACK research and design. TPACK assessments are included in GeoThentic, because the TPACK framework is not only valuable to the technology integration research community, but is also valuable to teachers. When teachers are introduced to and become aware of this knowledge framework, they can use it as a reflective tool, possibly enhancing their classroom practices. TPACK interactive assessment models built into GeoThentic include (a) a teacher-reported model (TRM), (b) an evaluative assessment model (EAM), and (c) a user-path model (UPM).

Teacher-Reported Model of TPACK

The TRM is designed to allow teachers to report the TPACK they have and use. As shown in Figure 8, teachers employ a slider bar to identify where they view themselves within the three knowledge domain areas of content, technology, and pedagogy. As teachers move the slider in the Current Knowledge section at the top left of the screen, the diamond in the TPACK framework is automatically repositioned based upon a predetermined algorithm (i.e., an equation that converts the teacher’s relative scores for each TPACK category to an x,y coordinate superimposed over the Venn diagram), ultimately placing the teacher within the center triangle of TPACK. Moreover, the teachers can position the slider bars in the Current Use section at the bottom left of the screen to identify the TPACK they use. By moving this slider, the size of each representative TPACK domain circle is scaled accordingly. Thus, a circle is scaled larger if the knowledge can be used within the classroom and smaller if it cannot be used.
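The article does not publish the equation behind the TRM, so the following Python sketch is only one plausible reading of the description above. It assumes the three slider scores are treated as barycentric weights over a triangle whose corners stand for the three knowledge domains, and that the Current Use slider scales each circle linearly. The vertex coordinates, the 0-10 score range, and the names `trm_position` and `circle_radius` are hypothetical and are not taken from GeoThentic.

```python
import math

# Hypothetical triangle corners, one per knowledge domain (not GeoThentic's layout).
VERTICES = {
    "technology": (0.0, 0.0),
    "pedagogy": (1.0, 0.0),
    "content": (0.5, math.sqrt(3) / 2),
}


def trm_position(scores):
    """Convert three non-negative slider scores into an (x, y) point.

    The scores are normalized into barycentric weights, so the point
    always falls inside (or on the edge of) the triangle.
    """
    if any(scores[d] < 0 for d in VERTICES):
        raise ValueError("slider scores must be non-negative")
    total = sum(scores[d] for d in VERTICES)
    if total == 0:
        # Nothing reported yet: place the marker at the centroid.
        weights = {d: 1 / 3 for d in VERTICES}
    else:
        weights = {d: scores[d] / total for d in VERTICES}
    x = sum(weights[d] * VERTICES[d][0] for d in VERTICES)
    y = sum(weights[d] * VERTICES[d][1] for d in VERTICES)
    return x, y


def circle_radius(use_score, base_radius=1.0, max_score=10):
    """Scale a domain circle from half size (no use) to full size (full use)."""
    return base_radius * (0.5 + 0.5 * use_score / max_score)


# A teacher who rates all three domains equally lands at the centroid.
print(trm_position({"technology": 5, "pedagogy": 5, "content": 5}))
```

Under these assumptions, a teacher with balanced self-ratings is drawn at the center of the triangle, while a teacher who reports knowledge in only one domain is drawn at that domain's corner.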

Teachers who used GeoThentic have noted that, although they might have very strong domain knowledge, the context of the face-to-face learning environment may not be conducive to them employing the knowledge they have (Doering, Veletsianos, Scharber, & Miller, in press). For example, a teacher who places herself in the upper limit of technology knowledge may not be able to employ the knowledge because her elementary classroom located in an impoverished region does not have access to technology, thereby precluding integration. Most importantly, the TRM serves as a metacognitive reflection tool. In other words, it represents an interactive online TPACK journal for teachers as they assess themselves through the GeoThentic modules.

Figure 8. Teacher-Reported Model (TRM) in GeoThentic.

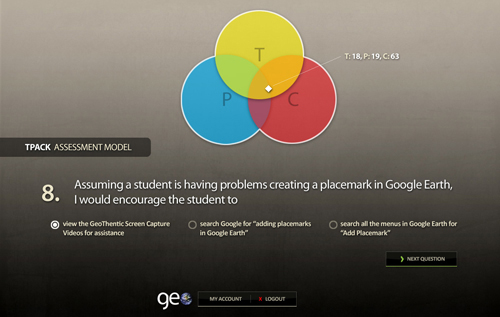

Evaluative Assessment Model of TPACK

The EAM consists of module-specific multiple-choice questions employed to identify the teacher’s current TPACK (see Figure 9). The questions are designed to assess all three knowledge domains while providing teachers with a visualization of where their knowledge is currently located in the TPACK triangle. This location is calculated automatically and visualized for the teacher in real-time. Based on these scores, teachers can see if the TRM aligns with the EAM and how they may close the gap to assist in moving toward the center of the TPACK triangle.
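As a rough illustration of how module-specific multiple-choice answers could be turned into per-domain scores and compared with the TRM, consider the sketch below. The question format, the fraction-correct scoring rule, and the function names `eam_scores` and `trm_eam_gap` are assumptions made for this example; the article does not specify how GeoThentic computes or compares these values.

```python
from collections import defaultdict


def eam_scores(questions, answers):
    """Return the fraction of correctly answered questions per knowledge domain.

    `questions` is a list of dicts with hypothetical keys "id", "domain",
    and "key" (the correct option); `answers` maps question id to the
    option the teacher chose.
    """
    asked = defaultdict(int)
    correct = defaultdict(int)
    for q in questions:
        asked[q["domain"]] += 1
        if answers.get(q["id"]) == q["key"]:
            correct[q["domain"]] += 1
    return {domain: correct[domain] / asked[domain] for domain in asked}


def trm_eam_gap(self_report, measured):
    """Per-domain gap; a positive value means the self-rating exceeds the quiz result."""
    return {d: round(self_report[d] - measured[d], 2) for d in measured}


questions = [
    {"id": 1, "domain": "technology", "key": "a"},
    {"id": 2, "domain": "technology", "key": "b"},
    {"id": 3, "domain": "content", "key": "c"},
]
answers = {1: "a", 2: "c", 3: "c"}
measured = eam_scores(questions, answers)
print(measured)
print(trm_eam_gap({"technology": 0.9, "content": 0.8}, measured))
```

A gap near zero would suggest that a teacher's self-report and quiz performance agree for that domain, whereas a large positive gap flags a domain where the self-rating may be optimistic.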

Figure 9. Evaluative Assessment Model in GeoThentic.

The User-Path Model of TPACK

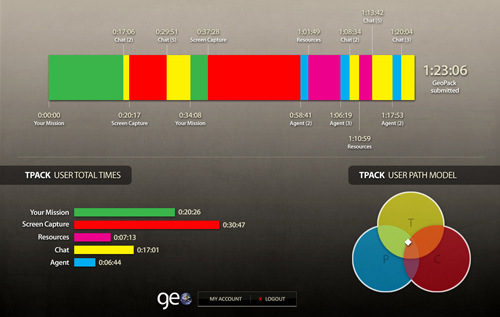

The UPM method of assessing teachers’ TPACK relates to analyzing teachers’ actions within the online learning environment. As teachers engage with GeoThentic, or with any other online environment, they leave behind a trail of data. Such data can be mined and analyzed to determine user actions, needs, and requirements. With reference to GeoThentic, teachers’ actions in the online learning environment are related to what teachers (a) know, (b) need assistance with, (c) perceive to be interesting, engaging, or difficult, and (d) deem to be mundane. Consider the following scenario:

Mark is a first time GeoThentic user and chooses to explore the Avian Flu lesson module with his students. After logging into the environment, he views the situated movie once, asks the virtual character (i.e., agent) five technology-related questions, and spends 20 minutes watching screen-capture videos, which provide guidance on how to use the geospatial software. All of Mark’s actions can then be analyzed both quantitatively (e.g., minutes spent on screen capture videos) and qualitatively (e.g., types of questions asked of the pedagogical agent), such that a TPACK profile can be generated for him. For example, in this scenario, Mark demonstrated a need for technology-related guidance, indicating a lack in technology-related pedagogical knowledge.
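To make the scenario concrete, the sketch below aggregates a small event log into the quantitative part of such a profile. The event format, the three-question threshold, the assumed 3-minute length of the situated movie, and the names `upm_profile` and `flag_needs` are all hypothetical; GeoThentic's actual tracking schema and inference rules are not described in this article.

```python
from collections import Counter


def upm_profile(events):
    """Summarize a teacher's trail of actions.

    Each event is a dict with a hypothetical "type" field: "view" events
    carry "scaffold" and "minutes"; "question" events carry the knowledge
    "domain" the question was coded under.
    """
    minutes = Counter()
    questions = Counter()
    for event in events:
        if event["type"] == "view":
            minutes[event["scaffold"]] += event["minutes"]
        elif event["type"] == "question":
            questions[event["domain"]] += 1
    return {"minutes": dict(minutes), "questions": dict(questions)}


def flag_needs(profile, question_threshold=3):
    """List domains where the number of agent questions suggests a need for support."""
    return sorted(
        domain
        for domain, count in profile["questions"].items()
        if count >= question_threshold
    )


# Mark's session: one situated movie (length assumed), five technology
# questions to the agent, and 20 minutes of screen-capture videos.
mark = (
    [{"type": "view", "scaffold": "situated_movie", "minutes": 3}]
    + [{"type": "question", "domain": "technology"}] * 5
    + [{"type": "view", "scaffold": "screen_capture", "minutes": 20}]
)
profile = upm_profile(mark)
print(profile)
print(flag_needs(profile))
```

Counting events only covers the quantitative side of the analysis; the qualitative coding of question types described above would still require human or additional automated interpretation.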

An initial conceptualization of the UPM method is presented in Doering and Veletsianos (2007a), demonstrating the viability of Web analytics in drawing inferences regarding learner actions (see Figure 10).

Figure 10. Screen capture of the User Path Model in GeoThentic.

Implications

Teaching and learning with technology has been termed a “wicked problem” (Mishra & Koehler, 2007). Such problems are ill defined and require large groups of people (in this case, educators, policymakers, and researchers, just to name a few) to engage with the problem and change the way the problem is perceived. It is naive, at best, to assume that TPACK can be assessed by one single tool or instrument. Rather, TPACK needs to be investigated from a number of complementary angles that contribute to a holistic assessment of how teachers teach with technology. Our hope is that the tools and methods described within this paper present a fresh perspective for envisioning how TPACK can be evaluated and how to design a learning environment based on the TPACK framework.

Through the multiple design iterations, prototype phases, testing, and evaluative measures completed with students and teachers over the past several years, we have found that (a) designers need to capitalize on the power of technological advancements to design and evaluate with TPACK, and (b) learning environments must challenge teachers’ metacognitive awareness of TPACK, while research must begin immediately to validate TPACK assessment processes.

Capitalize on the Power of Technological Advancements

The GeoThentic online environment represents an innovative attempt to bring current technological advancements and affordances to bear on the evaluation of teachers’ TPACK. Through close collaboration within our design, development, and research team of professors in interaction design, K-12 technology integration, and online learning, we focus on developing learning environments that apply the latest technological innovations to theoretical advancements. Because the environment draws on Web analytics, user tracking, information visualization, Flash-based real-time heuristics, and the latest theory, integration, and design practices, teachers will be able to teach by employing scaffolds that assist them in all areas of TPACK, while also using the described TPACK assessment models to identify where professional development may be needed.

Challenging Teachers’ Metacognitive Awareness of TPACK

The assessment models within GeoThentic are not, at this time, meant to be employed as a statistically valid measurement of teachers’ TPACK, but rather to present a baseline for teachers to challenge their metacognitive awareness of TPACK and reflect on progress. With access to three different assessments (the TRM, EAM, and UPM), teachers are able to assess, reflect on, and document their TPACK while planning a course of action for professional development.

For example, say Rachael, a social studies teacher, is preparing to teach the GeoThentic module on global climate change. She completes the TRM where she identifies a weakness in the technological knowledge required to teach this unit. She believes she still could implement the module, but decides to complete a practice run within the GeoThentic environment prior to exploring it with her students. After reviewing her UPM, she notices that she is spending the majority of her time on the situated movies to learn the procedural knowledge of Google Earth. In response to this realization, she adjusts her pedagogical strategy in class the following day to provide students with adequate time to “play” with Google Earth and learn how to analyze layers and create placemarks before moving directly into the assignment. As she watches her students “play,” Rachael learns the necessary knowledge to become more comfortable with the technology and learns a pedagogical adjustment that is successful.

Concluding Thoughts

Although our TPACK assessment models are currently being used as metacognitive tools, we hope to contribute to the conversation about how best to assess TPACK, whether through research and validation studies or through design explorations. This process will entail developing the initial prototype algorithms for TPACK assessment while designing a stand-alone, online, interactive TPACK journal that is independent of any specific software package or application and available to all educators.

References

AACTE Committee on Innovation and Technology (Ed.). (2008). Handbook of technological pedagogical content knowledge (TPCK) for educators. New York: Routledge.

Audet, R. H., & Paris, J. (1997). GIS implementation model for schools: Assessing the critical concerns. Journal of Geography, 96, 293-300.

Bednarz, S., & Audet, R. (2003). The status of GIS technology in teacher preparation programs. Journal of Geography, 98, 60-67.

Bednarz, S. W., & van der Schee, J. (2006). Europe and the United States: The implementation of geographic information systems in secondary education in two contexts. Technology, Pedagogy, and Education, 15(2), 191-205.

Bennett, L., & Berson, M. J. (Eds.) (2007). Digital age: Technology-based K-12 lesson plans for social studies. Silver Spring, MD: National Council for the Social Studies.

Berson, M. J., & Balyta, P. (2004). Technological thinking and practice in the social studies: Transcending the tumultuous adolescence of reform. Journal of Computing in Teacher Education, 20(4), 141-150.

Bransford, J. D., Brown, A. L., & Cocking, R. R. (1999). How people learn: Brain, mind, experience, and school. Washington, DC: National Academy Press.

Cognition and Technology Group at Vanderbilt. (1990). Anchored instruction and its relationship to situated cognition. Educational Researcher, 19(6), 2-10.

Collins, A., Brown, J., & Newman, S. (1989). Cognitive apprenticeship: Teaching the craft of reading, writing, and mathematics. In L. Resnick (Ed.), Knowing, learning, and instruction: Essays in honor of Robert Glaser (pp. 453-494). Hillsdale, NJ: Lawrence Erlbaum Associates.

Cox, S. (2008). A conceptual analysis of technological pedagogical content knowledge. Unpublished doctoral dissertation, Brigham Young University.

Cuban, L. (2001). Oversold and underused: Computers in the classroom. Cambridge, MA: Harvard University Press.

Cuban, L. (2008). Hugging the middle: How teachers teach in an era of testing and accountability. New York: Teachers College Press.

Design-Based Research Collective. (2003). Design-based research: An emerging paradigm for educational inquiry. Educational Researcher, 32(1), 5-8.

Doering, A. (2004). GIS in education: An examination of pedagogy. Unpublished doctoral dissertation, University of Minnesota, Minneapolis.

Doering, A., & Veletsianos, G. (2007a). An investigation of the use of real-time, authentic geospatial data in the K-12 classroom. Journal of Geography, 106(6), 217-225.

Doering, A., & Veletsianos, G. (2007b). Multi-scaffolding learning environment: An analysis of scaffolding and its impact on cognitive load and problem-solving ability. Journal of Educational Computing Research, 37(2), 107-129.

Doering, A., Veletsianos, G., & Scharber, C. (2007). Coming of age: Research and pedagogy on geospatial technologies within K-12 social studies education. In A. J. Milson & M. Alibrandi (Eds.), Digital geography: Geo-spatial technologies in the social studies classroom (pp. 213-226). Charlotte, NC: Information Age Publishing.

Doering, A., Veletsianos, G., Scharber, C., & Miller, C. (in press). Using the Technological, Pedagogical, and Content Knowledge Framework to design online learning environments and professional development. Journal of Educational Computing Research.

Eisner, E. W. (1994). The educational imagination: On the design and evaluation of school programs. New York: Macmillan.

Enkenberg, J. (2001). Instructional design and emerging models in higher education. Computers in Human Behavior, 17, 495-506.

Friedman, A. M., & Hicks, D. (2006). The state of the field: Technology, social studies, and teacher education. Contemporary Issues in Technology and Teacher Education, 6(2). Retrieved from https://citejournal.org/vol6/iss2/socialstudies/article1.cfm

Heafner, T. (2004). Using technology to motivate students to learn social studies. Contemporary Issues in Technology and Teacher Education [Online serial], 4(1). Retrieved from https://citejournal.org/vol4/iss1/socialstudies/article1.cfm

Hughes, J. E. (2000). Teaching English with technology: Exploring teacher learning and practice. Unpublished doctoral dissertation, Michigan State University, East Lansing, MI.

Hughes, J. E. (2004). Technology learning principles for preservice and in-service teacher education. Contemporary Issues in Technology and Teacher Education [Online serial], 4(3). Retrieved from https://citejournal.org/vol4/iss3/general/article2.cfm

Hughes, J. E., & Scharber, C. (2008). Leveraging the development of English-technology pedagogical content knowledge within the deictic nature of literacy. In AACTE Committee on Innovation and Technology (Eds.), Handbook of technological pedagogical content knowledge for educators (pp. 87-106). Mahwah, NJ: Routledge.

Keiper, T. A. (1999). GIS for elementary students: An inquiry into a new approach to learning geography. Journal of Geography, 98, 47-59.

Koehler, M. J., Mishra, P., & Yahya, K. (2007). Tracing the development of teacher knowledge in a design seminar: Integrating content, pedagogy, and technology. Computers and Education, 49(3), 740-762.

Lave, J., & Wenger, E. (1991). Situated learning: Legitimate peripheral participation. Cambridge: Cambridge University Press.

Lavie, T., & Tractinsky, N. (2004). Assessing dimensions of perceived visual aesthetics of Web sites. International Journal of Human-Computer Studies, 60, 269-298.

Lee, J. K. (2008). Toward democracy: Social studies and TPCK. In AACTE Committee on Innovation and Technology (Ed.), The handbook of technological pedagogical content knowledge (TPCK) for educators (pp. 129-143). New York: Routledge.

Martorella, P. (1997). Technology and the social studies: Which way to the sleeping giant? Theory and Research in Social Education, 25(4), 511-514.

Mehlinger, H. D., & Powers, S. M. (2002). Technology and teacher education: A guide for educators and policy makers. Boston: Houghton Mifflin Company.

Mishra, P., & Koehler, M. J. (2006). Technological pedagogical content knowledge: A framework for teacher knowledge. Teachers College Record, 108(6), 1017-1054.

Mishra, P., & Koehler, M. (2007). Technological pedagogical content knowledge (TPCK): Confronting the wicked problems of teaching with technology. In C. Crawford et al. (Eds.), Proceedings of Society for Information Technology and Teacher Education International Conference 2007 (pp. 2214-2226). Chesapeake, VA: Association for the Advancement of Computers in Education.

National Council for the Social Studies. (2006). Technology position statement and guidelines. Retrieved from http://www.socialstudies.org/positions/technology

Niess, M. L. (2005). Preparing teachers to teach science and mathematics with technology: Developing a technology pedagogical content knowledge. Teaching and Teacher Education, 21, 509-523.

Reeves, T., Herrington, J., & Oliver, R. (2004). A development research agenda for online collaborative learning. Educational Technology Research and Development, 52(4), 53-65.

Ross, E. (2000). The promise and perils of e-learning. Theory and Research in Social Education, 28(4), 482-492.

Sanders, R., Kajs, L., & Crawford, C. (2001). Electronic mapping in education: The use of Geographic Information Systems. Journal of Research on Technology in Education, 34(2), 121-129.

Sandoval, W. (2004). Developing learning theory by refining conjectures embodied in educational designs. Educational Psychologist, 39(4), 213-223.

Schön, D. A. (1987). Educating the reflective practitioner. San Francisco: Jossey-Bass.

Shulman, L. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4-14.

Shulman, L. S. (1987). Knowledge and teaching: Foundations of the new reform. Harvard Educational Review, 57(1), 1-22.

Swan, K. O., & Hofer, M. (2008). Technology and social studies. In L. S. Levstik & C. A. Tyson (Eds.), Handbook of research in social studies education (pp. 307-326). New York: Routledge.

Tally, B. (2007). Digital technology and the end of social studies education. Theory and Research in Social Education, 35(2), 305-321.

Thompson, A., & Mishra, P. (Winter 2007-2008). Breaking news: TPCK becomes TPACK! Journal of Computing in Teacher Education, 24(2). Retrieved from http://www.iste.org/Content/NavigationMenu/Membership/SIGs/SIGTETeacherEducators/JCTE/PastIssues/Volume24/Number2Winter20072008/jcte-24-2-038-tho.pdf

Thompson, A. D., Boyd, K., Clark, K., Colbert, J. A., Guan, S., Harris, J. B., & Kelly, M. A. (2008). TPCK action for teacher education: It’s about time! In AACTE Committee on Innovation and Technology (Eds.), Handbook of technological pedagogical content knowledge (TPCK) for educators (pp. 289-300). New York: Routledge.

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Cambridge, MA: Harvard University Press.

Whitworth, S. A., & Berson, M. J. (2003). Computer technology in the social studies: An examination of the effectiveness literature (1996-2001). Contemporary Issues in Technology and Teacher Education [Online serial], 2(4). Retrieved from https://citejournal.org/vol2/iss4/socialstudies/article1.cfm

Author Contacts:

Aaron Doering

University of Minnesota

Cassandra Scharber

University of Minnesota

Charles Miller

University of Minnesota

George Veletsianos

University of Manchester
