Overview

Between 2010 and 2014, the project Studying Topography, Orographic Rainfall, and Ecosystems with Geospatial Information Technology (STORE) explored the strategies that stimulate teacher commitment to the project’s driving innovation: having students use geospatial information technology (GIT) to learn about weather, climate, and ecosystems. The GIT in STORE was a combination of freely available place-based geospatial data sets and visualization tools. The goal was to structure the innovation and accompanying teacher professional development strategy so that participating teachers would find it appealing, plan for and enact effective instruction with it, achieve optimal impacts on student learning and engagement, and persist with it long enough to carry out two implementations.

A professional development strategy for bringing about these ends evolved iteratively. The article describes how STORE addressed the challenges of getting teachers to persist with the innovation and to use it skillfully. This work culminated in the positing of a new model, Conceptualization, Iteration, Adoption, and Adaptation (CIAA), for developing scientific data-centered instructional resources and accompanying teacher professional development.

CIAA emerged as the pathway to high teacher commitment to the project as codevelopers and users. Applying principles guiding the development of grounded theory (Glaser & Strauss, 1967), the project team developed the model as a product of their analyses of the teacher attitudes and behaviors, which they gathered in meetings and interviews with the teachers, observations of their classes, and studies of the curricula they implemented in those classes.

Sustaining teacher commitment to a curricular innovation is problematic, and rates of attrition from innovative practices are high (Kubitskey, Johnson, Mawyer, Fishman, & Edelson, 2012). Reasons include lack of time to learn and pursue the innovation, lack of administrator support, lack of incentives, and absence of a professional learning community (DuFour, 2004; Dunne, Nave, & Lewis, 2000; Louis & Marks, 1998; Strahan, 2003). Another factor may be mismatch between the expectations of the project for teacher involvement and the extent to which the particular participating teachers can meet those expectations (Rogers, 1962; Schoenfeld, 2011).

Commitment to innovation does not necessarily bring about enactment of high-quality implementation (Darling-Hammond et al., 2009). Reasons cited in the literature include lack of teacher skill in enacting high-quality instruction, especially when the instruction calls for student-centered discussion, argumentation, and deep reasoning.

These challenges may arise even when the teacher is a good planner and developer of high-quality lesson plans and assessments (Grossman, Hammerness, & McDonald, 2009). Teachers may lack sufficient content knowledge or background in the technologies needed for successful implementation. In the science classroom in particular, these inadequacies may be manifest in overly didactic and superficial treatment of content and, when there is hands-on technology involved, inordinate attention to procedure at the expense of scientific analysis and communication (Penuel et al., 2006). Hence, two types of barriers confront teacher success in implementing innovations: barriers to their acquiring the skills they need for successful planning and implementation and barriers to their persistence. This article describes how the STORE project addressed these barriers and what the results were. The results are a combination of attitudes expressed on the teacher survey, the record of products that the teachers developed, and the diversity of implementations that occurred.

Background

The STORE project team compiled geospatial data sets from publicly available scientific portals and made them usable in ArcGIS Explorer Desktop and Google Earth. These GIT applications are freely available, and anyone can download them onto their computers from the Web. Free accessibility and downloading capability were the main reasons behind the project decision to use those two applications to host the data, even though they are less sophisticated than some other GIT applications, such as ArcMap, that cost money or require schools to obtain grants to purchase.

To guide classroom use of the GIT applications and data sets, the project research team, with the help of six design partner teachers, developed in the first project year the first iterations of six thematically connected, hands-on exemplar lessons that provide students with the opportunity to see focal enduring understandings played out in “study area” regions of mid-California and the western part of New York State. These enduring understandings include orographic impacts on weather and climate, sustainment of plant species and ecosystems, and climate change. In the process, students were to learn about the nature of geospatial data, including how the data are collected and visually rendered in layers on maps.

The lessons make use of parallel data sets about the two study areas. The data provide recent multidecadal averages of temperature, precipitation, and predominating land cover, as well as climate change model-based projections for temperature, precipitation, and land cover in 2050 and 2099. The model is the A2 scenario from the Intergovernmental Panel on Climate Change (Nakicenovic & Swart, 2000). This scenario assumes continued global growth of carbon dioxide levels in the atmosphere corresponding to the continuation of current levels of population growth.

The STORE data tell a relatively clear narrative about orographic rainfall and how it influences the climate and predominating land cover. The California orographic rainfall is influenced by winter weather systems coming off of the Pacific Ocean, going east over the Coastal Range, over the Central Valley, and up the slopes of the Sierra Nevada Mountains. Occasional summer storms coming north from the Gulf of California and northwest from the Gulf of Mexico have a slightly different path. Yet, as with the winter storms, they bring strong orographic rainfall effects.

The Western New York orographic rainfall is more subtle, influenced by less dramatic topographic variances. Also, Lake Ontario and Lake Erie exert a strong influence on the origins, magnitudes, and directions of storms. Hence, these data sets are especially reinforcing of learnings about the water cycle, the characteristics of populations and ecosystems, heredity, differences between weather and climate, principles of meteorology, and the effects of projected temperature increases on precipitation and land cover.

Lesson 1 introduces basic meteorological concepts about the relationship between weather systems and topography. Lessons 2 and 3 focus on recent climatological and land cover data (30-year averages). Lesson 4 brings in Excel as the technology for graphing relationships between temperatures at weather stations in the study areas and elevation. Lesson 4 reinforces learnings about temperature lapse rates, a concept first introduced in Lesson 1. The last two lessons focus on model-based climate change projections in relation to the possible fates of different regional species of vegetation. Answer keys are provided for the various constructed response questions in each lesson.
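The temperature-versus-elevation analysis that Lesson 4 asks students to graph in Excel can also be sketched in code. The following is a minimal illustration only, not part of the STORE materials: the station values are invented, and the function name `fit_line` is a hypothetical helper. It fits a straight line to temperature against elevation and reports the slope as an approximate lapse rate.

```python
# Hypothetical (elevation in meters, mean temperature in deg C) pairs.
# These values are invented for illustration; they are NOT STORE data.
stations = [
    (50.0, 16.0),
    (300.0, 14.5),
    (900.0, 10.6),
    (1500.0, 6.9),
    (2100.0, 3.0),
]


def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


slope, intercept = fit_line(stations)

# The slope is in deg C per meter; a lapse rate is conventionally stated
# as the temperature DROP per kilometer of elevation gain, hence the sign flip.
lapse_rate_per_km = -slope * 1000.0
print(f"Estimated lapse rate: {lapse_rate_per_km:.1f} deg C per km")
```

A straight-line fit is a simplification. Real station data would scatter around the line because of local effects such as proximity to water or valley inversions, which is itself a useful discussion point for students.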

Teachers have in addition access to geospatial data files that display some storm systems that moved over California and New York between the winter of 2011 and summer of 2012. These storm data sets are especially useful for exploring the similarities and differences between weather and climate. Students can study relationships between the storm behavior, the topography of the study areas, and the extent to which the storms mimic the geospatial distributions of the 30-year climatology.

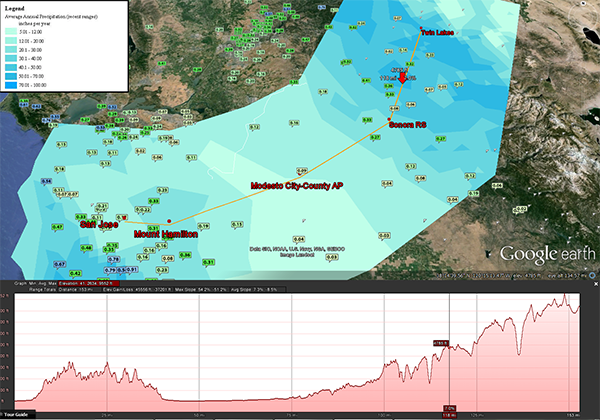

Figure 1 shows an image of how some of the STORE data layers appear in Google Earth. In this image, precipitation accumulations from one particular day in a particular storm that passed through the California study area appear on top of a layer showing 30-year precipitation averages. Below the base map is a transect that crosses weather stations from which the precipitation data were collected for the 30-year averages. This image is rich in conceptual learning. It exemplifies relationships between precipitation and elevation and lends itself to comparing rainfall accumulations from the storm to determine the extent to which the weather on that day was consistent with the different topographically influenced microclimates in the study area. All the data for producing images such as this one are available from the project website, http://store.sri.com.

After the development of the first iterations during the first project year, the six exemplar lessons were refined during subsequent years as needed after being used by students. During the second and third years of the project, the intention was to recruit six other teachers to implement the lessons and to receive training for doing so. Design partner teachers were also asked to implement in Years 2 and 3, though one decided, instead, to start implementing in Year 1. Hence, the lessons, GIT, and data files were subject to multiple years of implementation and iteration. The 12 teachers were each asked to implement STORE twice in their classrooms. Usually, they implemented over the course of two academic years.

Method

Participants

The project team intended to work with 12 interested teachers, which the team would recruit through informal networking. Appendix A identifies the teachers’ backgrounds, school (https://nces.ed.gov/ccd/) and community (http://www.city-data.com) characteristics, whether they came into the project as design partners or not, their prior familiarity with GIT, how they were recruited, and during which project years they implemented STORE lessons.

We intended that six design partner teachers, who were recruited prior to the writing of the grant proposal for the project, would help the project team design the curricula and tools during the first project year. Half were recruited from locations near project researchers in Menlo Park, CA, and half near Geneva, NY. These six were supposed to begin implementation starting in Year 2 and then carry out another round of implementation in Year 3. One decided to start implementing in the latter half of Year 1, however. After Year 1, five recruited colleagues from their schools, and these pairs (CB and TO, LS and JW, JR and LC, EL and KM, GW and JT) maintained collaborations during the life of the project.

Of all the teachers, five had prior experience with GIT, owing to courses they had already been teaching or professional development they had already received, and seven had no prior experience with it. Of the six design partner teachers, the three from New York had already had experience with the technologies, but the three from California had not. This differentiator was unintended, however, and only the result of who happened to show interest.

Data were gathered in surveys and in semistructured one-on-one interviews to assess teacher attitudes, including their perceptions of challenges and the worth of the project. The interview protocol and survey instrument were developed by the research team. The team adapted items from instruments used on some prior research projects that also examined impacts of curricular innovations on teacher attitudes and classroom practices. With one exception (JR and LC), additional interviews were conducted with the collegial pairs who were teaching at the same schools. The one-on-one interview process was triangulated by the classroom observations (as in Merriam, 1995).

In cases where the teacher was implementing with different classes in multiple school periods, the observer would observe the teacher implementing the same lesson each period during the observation day. The observer audiotaped the classes and took photos of key events (such as a screenshot of the failure for a map to be redrawn in Google Earth following a student interaction requiring the redrawing, or a particular set of interactions between teacher and students on a smartboard). Observation of the teacher multiple times was a way to minimize threats to reliability of the observation data. The taking of photos was a way to validate the observation notes by providing another medium for communicating what happened in the classroom.

During small group time at computers, the observer walked around the room watching students interact and occasionally asking them what they were doing and why. The observer also acted as teacher aide, answering student content and technology questions if a hand was raised and the teacher was busy with another student. The emphasis in the observations was to detect key student and teacher behaviors and artifacts, not rigorous quantification of numbers of times certain behaviors occurred or numbers of students who exhibited them. This approach was in keeping with grounded theory (Glaser & Strauss, 1967)—to detect types of behaviors with less attention to exact frequencies. Yet, there was enough monitoring of frequencies in the broad sense to enable the application of a scoring rubric.

Each one-on-one interview followed on the same day or later the same week. Interviews with pairs would occur at convenient times for each teacher in the pair, not necessarily following an observation. The teachers’ responses to what the interviewer noted in his observations constituted a validity check on the observations because the teachers had an opportunity to contest a particular observer perception or conclusion.

The interviewer asked teachers to reflect on how the lessons went and what they might do differently next time. Equipped with his observation notes, the interviewer asked them to comment on lesson aspects that he thought represented broad characteristics of the teacher-student interactions, and on his observations of the extent to which the students appeared engaged with the lessons. The teachers then responded with perspectives on and critiques of what they did, why they did it, and what they might do differently the next time. This sharing of notes with the teachers, together with their responses to those notes, constituted validation of the observations.

Individual teacher summaries were prepared in audio or written form from the interview protocols and observations. The summaries were organized by coding categories that emerged as most salient for capturing the key characteristics of what occurred during the implementation: technology (e.g., whether to use the ArcGIS Explorer Desktop or Google Earth software applications, how to orient students to the software, and how to troubleshoot problems that could arise with the software or hardware), instructional practice (e.g., how to tie student investigation of the data to prior knowledge, whether to modify certain tasks in the interest of time, and how to check for understanding and stimulate discussion), and lesson content (e.g., tying tasks to previously introduced content, the degree of open-endedness designed into the lessons, tying lesson content to the vocabulary of scientific inquiry, and including student field observation tasks and analysis of storm data). Then, in follow-up discussions, they looked at results from student classroom products and assessments as stimuli for additional reflections.

Ten teachers completed a survey after STORE implementation in their classrooms. The teacher surveys looked at the challenges teachers faced with the project, using a series of 4-point Likert scale questions (choices were totally agree, agree more than disagree, disagree more than agree, and totally disagree). Teachers who believed that an item was not applicable to them could instead select “other” and explain why in a comment field. Several other items used different selection scales that involved the teachers rating themselves on their perseverance with STORE. For validation of the survey instrument, the teachers were given the opportunity to provide feedback and additional information in comments if the questions did not capture the characteristics of their attitudes and practices that the survey was intended to capture.
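The 4-point scale with an "other" option described above can be represented as a simple coding scheme. The sketch below is illustrative only: the article does not state how responses were scored, so the numeric codes (4 for totally agree down to 1 for totally disagree), the function name `summarize_item`, and the sample responses are all assumptions.

```python
from statistics import mean

# Assumed numeric coding; the source does not specify one.
LIKERT_CODES = {
    "totally agree": 4,
    "agree more than disagree": 3,
    "disagree more than agree": 2,
    "totally disagree": 1,
}


def summarize_item(responses):
    """Summarize one survey item.

    Returns (mean score, number of scored responses, number of "other").
    "other" responses are counted separately and left out of the mean,
    since they signal that the item did not apply to that teacher.
    Any unrecognized response string is ignored.
    """
    scored = [LIKERT_CODES[r] for r in responses if r in LIKERT_CODES]
    n_other = sum(1 for r in responses if r == "other")
    item_mean = mean(scored) if scored else None
    return item_mean, len(scored), n_other


# Hypothetical responses from ten teachers to a single item.
example_responses = [
    "totally agree", "agree more than disagree", "agree more than disagree",
    "disagree more than agree", "totally agree", "other",
    "agree more than disagree", "totally disagree", "totally agree", "other",
]

item_mean, n_scored, n_other = summarize_item(example_responses)
print(f"mean={item_mean}, scored={n_scored}, other={n_other}")
```

With only ten respondents, reporting the count in each category alongside any mean is usually more informative than the mean alone.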

A rubric was developed and used to score the characteristics of teacher instruction from observations of STORE implementations in the teachers’ classrooms (see Appendix B). The rubric was designed to capture by scale the key implementation characteristics of what was observed and corroborated in the postobservation interviews. The rubric was formulated after the first year’s implementations as a tool to capture the domains of behaviors and practices observed during those implementations and was then used to rate the teachers during their second year’s implementations.

Data were also gathered on student engagement and learning outcomes through pre- and postassessments. The student outcomes are not the subject of this article, however.

Confronting Barriers to Acquiring the Skills Teachers Needed for Successful Planning and Enactment

Strategies

Providing supports that would meet the needs of different teachers with differing levels of geospatial technology literacy and science knowledge was a challenge. A lesson learned by the STORE team at the first meeting with teachers, 2 months into the project, was that such supports were needed to deepen the teachers’ comfort with making good instructional uses of the GIT and data.

All but four of the teachers started their involvement in STORE as novices with GIT. To meet their technological knowledge needs, the STORE staff, starting in the second month of the first year and ending in the 11th month of the third year, developed and posted supporting teacher resources, for example,

- Decision support tutorial comparing the advantages and disadvantages of the two GIT applications.

- Slide presentations and videos on YouTube and on the project website that described for both students and teachers how to use the STORE data in Google Earth, the GIT application that all the teachers chose to use (although two, for their technology classes, chose to also use ArcGIS Explorer).

In addition, both the novice and more experienced GIT-using teachers needed to build their knowledge about the data: how the data sets were collected, who collected them, and how they were visually represented on the GIT base map. They also needed to understand how the STORE technical team, in order to provide rich layers of projections of future outcomes in the face of climate change, took the climatology data and combined them with model data to make projections beyond the ones provided to the public by the government source agencies.

Three months into the project, the STORE team began developing and posting, first on a wiki, later on its website (http://store.sri.com), documentation that provided this background information, including metadata descriptions of each layer, how the data were derived, and how the STORE team processed the data into map layers.

The assumption driving the decision to document was that with the documentation the teachers would deepen their understanding. That is, they would become better able to help their students understand how scientists and technicians use what they have in data and models to draw conclusions, knowing that the conclusions they draw are not necessarily definitive because the data and models are predicated on assumptions subject to critical scrutiny.

The project was also challenged to come up with appropriate resources for building the teachers’ content knowledge about the scientific phenomena behind the data. Though observations, interviews, and group discussions that occurred in each project year revealed that their content knowledge was stronger than their knowledge of the technology and data, the project research team still treated content knowledge as a need and made content-oriented background information available.

Hence, overview documents from the National Climatic Data Center summarizing the climate characteristics of the states of the participating teachers and students were provided, as well as an answer key to the questions on the six exemplar lessons, and adapted versions of the lessons that the teachers were creating and implementing that showed how they can be resources for each other. For example, an Advanced Placement biology teacher embedded in her adapted lessons content concerning plant adaptability to rapid environmental change, a topic that some of the other teachers knew less about but could learn about by studying this teacher’s embellishments on that subject.

Research supports the value of making curricular materials educative as a vehicle for more effective teacher professional development (Bodzin, Anastasio, & Kulo, 2014). The educative components were more implicit than explicit. For example, the lessons were not embedded with annotations telling teachers why specific student tasks appeared in the lesson and what the teacher should be paying attention to when implementing those lessons. Rather they were educative because they exposed the teachers to examples of scientific understandings that can be drawn from the data and the types of student tasks that can be posed to build these understandings.

We assumed at first that the design research partnership would produce a curriculum that all the teachers would use, with perhaps minor modifications that they would make individually. A pivotal moment occurred during the second meeting of the design partner teachers. In the fourth month of the first year, we realized that this initial strategy would not work and that flexibility was needed. Two of the teachers were conversing over the six lessons. One teacher who taught biology argued that she would not want to teach a particular lesson because it focused too much on student analysis of the orographic rainfall data, whereas another teacher who taught environmental science liked those parts. The biology teacher had nothing against the meteorology parts and only objected because meteorology was not a topic in her course. She, instead, wanted to expand the population and ecosystem components by tying them to what her biology students were learning about evolution of plant species.

Principal investigator and paper co-author Dr. Daniel R. Zalles, who was leading this session, responded by telling these teachers that their inability to reach consensus was not a problem and that they could do what they wanted with the GIT and data as long as they justified why. Hence, the teachers were allowed to use the six exemplar lessons as is, adapt them, or make up their own. Furthermore, they could decide how much class time to devote to the data and lessons. When this policy of flexibility was articulated, these teachers could become more productive as design partners. They saw themselves as partners in the design of exemplar lessons they did not have to implement if the exemplars were not a good fit for their current courses.

The teachers who were not design partners and who joined the project in Year 2 were told immediately that they could use the six lessons as is, adapt them, or make up their own. Furthermore, they could decide how much class time to devote to the data and lessons. This action had the effect of creating a community of practice. As part of their training, teachers went through the exemplar lessons just like their students would, made judgments about their appropriateness, and then studied the adaptations that the other teachers made for the purpose of deciding on their own adaptations. For design partner teachers, this adapting began to be encouraged during their third group session in the fourth month of the project. For nondesign partner teachers, it was encouraged as soon as they began participating during Year 2.

This STORE strategy of developing a foundational curriculum of six exemplar lessons as a vehicle for building teachers’ capacities to then develop or adapt innovative materials themselves resembles a teacher professional development study that looked at student outcomes of a science education innovation in three comparison groups: (a) teachers implementing a predeveloped curriculum with adaptations as needed, (b) teachers being taught how to carry out their own instructional designs then developing their own curricula, and (c) a hybrid condition where they were expected to do both. The results showed that the attention to helping the teachers develop their own capacities to do instructional design was the most effective ingredient, due to its high correlation with student learning outcomes on common assessments (DeBarger, Choppin, Beauvineau, & Moorthy, 2014; Penuel, Gallagher, & Moorthy, 2011).

Other flexible components of STORE included choice of GIT application and choice of data. Teachers could choose which application (Google Earth or ArcGIS Explorer) and which, if any, tools in those applications they would use beyond the viewing of data on map layers. Examples of tools were path making, measuring distances, generating point layers for locations such as the school, and generating elevation profiles. The teachers could also choose which phenomena (temperature, precipitation, or land cover) to focus on and which data to use about those phenomena.

Choices of data included whether they wanted to look at both California and New York State or only one state, whether to look at climate change projections for 2050 or 2099, which type(s) of vegetation/land cover data, and which type(s) of temperature data (e.g., average annual, average highest monthly, or average highest daily). The project assumption behind providing this flexibility was that it would ensure that greater numbers of teachers, teaching many different science courses, would find value in the essential STORE offering: the GIT and the data. Furthermore, by being presented with such a rich set of choices, they would have the opportunity to build their instructional decision-making skills.

To enhance the teachers’ ability to carry out deliberative decision-making about what precisely they would implement and why, it was requested from the outset that they precede implementations by completing content representation (CoRe) forms. On these forms, they would need to explain what they intended to teach, why, what student learning challenges they anticipated, and what they expected to do to meet the challenges (Mulhall, Berry, & Loughran, 2006). A CoRe is structured around questions related to the main science content ideas associated with a specific topic, teaching procedures and purposes, and knowledge about students’ thinking. The concepts and topics are documented on a table—one learning objective/big idea per column.

This exercise in completing CoRe forms was useful for six of the 12 (50%) teachers. The following, for example, are misconceptions (1 and 2) and readiness characteristics (3) that teacher JW expected to encounter among his 11th-grade Earth Science students attending his rural high school:

- Climate is the same as daily weather.

- All parts of California experience the same weather, both temperature and rainfall.

- Students are not familiar with the program, nor do they utilize computers regularly at our school (except for word processing or Internet research for papers or projects).

At the end of each classroom implementation, between Years 1 and 4, the teachers were asked to explain what they learned and what they might do differently next time. These reflections were captured in interviews, changes to their CoRe documents, and to a lesser extent in emails and written memos. In addition, they were asked postimplementation to complete reflective surveys and get their students to do the same. The student surveys prompted feedback about student satisfaction with the STORE implementation, what (if anything) they felt they learned from their STORE lessons, and what (if anything) they might want to learn about those topics in the future. Teachers received stipends each year after they completed classroom implementations.

Results

This section describes two types of results of this strategy. First, results concerning instructional planning are described, followed by results concerning classroom implementation. Concerning instructional planning, the flexibility afforded to the definition of what constituted the essence of STORE implementation yielded a wider variety of implementations than expected. The project team expected that STORE would be deemed appropriate only for high school students, but informal referrals among colleagues also created a demand among middle school teachers and community college instructors. The result was a blooming of diverse instructional strategies and curricula for diverse students:

- Community settings: 4 urban, 7 rural

- Schools: 7 regular public, 2 private, 1 charter

- Subjects: 2 Integrated Math-Science, 1 General Science, 1 Biology, 4 Earth science, 3 Environmental Science, 1 Physics, 2 Technology and Science

- Grades: 6, 7, 9, 10, 11, 12, community college

- Student levels: Special needs, nonspecial need but lower end achievers, regular heterogeneous mix, Advanced Placement

- Primary student ethnicities: Asian, Hispanic, Anglo

Seven teachers developed some of their own lessons, though they did make some use of the existing exemplar lessons as well. JT, for his community college Geospatial Technology class, developed a lesson that required students to do an environmental impact report about proposed new construction near the campus. Among other things, his students assessed what drainage issues developers would need to consider. He introduced his students to the power of GIS in this way.

In partnership, CB and TO developed lessons that were about the data from New York State. They also had students compare the New York and California data and added more open-ended inquiry by having students draw their own transects and analyze the data along them, instead of using only the predeveloped transects in the master data sets that connect weather stations. GW, for his community college Earth Science class, developed lessons aimed at building his students’ skills in drawing contour lines on maps so as to better understand how to analyze the STORE data, which are also contoured.

JR, for his middle school course, developed lessons that engaged students in collecting their own predominating land cover data in the forest around their school, in order to better understand how scientists from the United States Geological Survey collected the predominating land cover data in the STORE master data sets. EL, for his Advanced Placement Environmental Science class, developed lessons around the storm data that the project staff had also put into the master sets of data but had not developed lessons around.

LC put STORE lessons in a whole new high school science course that she submitted to the University of California for accreditation. The STORE lessons fit into the section of her course about Industry, Technology, and Politics.

Major modifications that teachers made to the exemplar lessons were classified as such if they took students in new directions; for example,

- Framing the data analysis tasks from the exemplar lessons in terms of hypothesis generating and testing, or adding new data to the inquiry, such as plate tectonics data (JT did this for his community college Geospatial Technology class).

- Expanding the scope of the advanced lessons about climate change to bring in more attention to the characteristics of plant species impacting the prospects of their survivability (KM did this for her Advanced Placement Biology class).

- Developing project-based lessons around endangered animal species, using the STORE climate model-based projections about temperature and precipitation as the basis for student research into whether a particular California animal species is likely to survive (LC did this for her Advanced Placement Biology class).

- Taking the mathematics content about air pressure, dew point, relative humidity, and temperature lapse rate in the first exemplar STORE lesson (Basic Lesson 1), which was written for high school students, and adapting it for middle school students (JR did this for an integrated math-science course for seventh graders).

Minor modifications included changes in phrasing, display, or directions. Teachers’ final curricular products and CoRe forms are posted on the project website.

In the nomenclature of Rogers’s (1962) taxonomy of innovation adopters, the STORE teachers, all self-selected, could be characterized as a combination of innovators and early adopters. Innovators are those who like to develop their own instructional materials, whereas early adopters are more disposed to using predeveloped materials.

In one sense, all the STORE teachers were innovators with the primary STORE resources: the GIT and data. All had in common this commitment to using these primary resources, and 10 out of 12 (83%) persisted over 2 years of implementation. Yet, some were more like early adopters in how they decided which GIT data-centered lessons to use, some choosing more than others to change the lessons’ contents. These outcomes have implications for distinguishing innovators from early adopters. These differentiations may need to be refined to become more sensitive to new paradigms of technology-based instructional innovations that are not curriculum centered but tool or data centered.

An element that factored into teachers’ curricular decisions was the time available for STORE in their course schedules. For example, two colleagues at the same high school teaching advanced placement (AP) courses made different decisions about curricula. The AP Biology teacher decided she had little time in her crowded curriculum to spend implementing STORE lessons, so she took only 2 days. However, her colleague teaching AP Environmental Science was accountable to teach a less crowded curriculum and, hence, had time to implement a whole week’s worth of lessons and activities around STORE.

Concerning classroom implementation, ratings were gathered through classroom observations of 11 of the 12 implementing teachers during their final implementation year using the observation rubric. These final-year ratings provide only a snapshot of teacher practices on the small number of days on which they were observed, so it would be wrong to overgeneralize from them. That said, the rating system was useful for articulating a range of teacher performances on the three traits of interest: instructional practice, technology challenge, and classroom management. The scores most related to the STORE professional development goals were about instructional practices and, to a lesser extent, about meeting technology implementation problems and managing student acclimation to using the GIT in hands-on tasks.

On the instructional practice trait (mean = 2.18, standard deviation = .87), the findings reveal that six of the teachers only occasionally showed some ability to pose thought-provoking, open-ended questions about the scientific content to the students in discussions, which in turn, enabled them to gauge student understanding. The relatively low frequency of such interactions is understandable given that the main objective of the observed lessons was giving students hands-on time with the data on their computers.

Three other teachers also attended to the scientific content in their student interactions but only in terms of reviewing or lecturing about it. Two teachers interacted with students only on procedural technical issues. Hence, these findings, while limited, provide little evidence of high-level instructional enactment capacity. They are consistent with other teaching practice research, in which variances in teachers’ ways of responding to students’ learning needs illustrated individual differences in how they looked at teaching, what their objectives were, and what instructional resources they used (Schoenfeld, 2011).

Concerning the classroom management trait (mean = 2.73, standard deviation = .47), eight teachers showed skill at keeping the students on task, and only three experienced management challenges that diverted them from teaching for short periods of time. Challenges to technology implementation, the third trait (mean = 2.18, standard deviation = .60), were common, however. Most of the teachers’ technology problems were beyond their control. Only three teachers had no technology performance problems; seven had some, though only occasionally. One teacher had a severe problem. He had to terminate a STORE session in his computer lab because the district did not have enough bandwidth at the time to support all of his students interacting with Google Earth online. The principal asked him to terminate the lab session so that staff at a different school in the district could go online.
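The reported means and standard deviations for the classroom management and technology challenge traits can be cross-checked against the teacher counts given above. The following is a minimal sketch, not the project's actual scoring procedure. It assumes (the article does not state this) that the rubric was coded on a 3-point scale with 3 as the strongest rating, that the eight/three and three/seven/one groupings map directly onto rubric scores, and that the reported standard deviation is the sample standard deviation.

```python
from statistics import mean, stdev

# ASSUMPTION: 3-point rubric coding, 3 = strongest rating, 1 = weakest.
# The counts per group are taken from the text (11 observed teachers per trait).
classroom_management = [3] * 8 + [2] * 3            # 8 kept students on task, 3 had challenges
technology_challenge = [3] * 3 + [2] * 7 + [1] * 1  # 3 no problems, 7 occasional, 1 severe


def summarize(scores):
    """Return (mean, sample standard deviation), each rounded to two decimals."""
    return round(mean(scores), 2), round(stdev(scores), 2)


print(summarize(classroom_management))  # (2.73, 0.47)
print(summarize(technology_challenge))  # (2.18, 0.6)
```

Under these assumptions, the counts reproduce the reported values for both traits. The instructional practice counts are not checked here because the text does not give enough detail to infer how those teachers were scored.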

There were instances when teachers showed special adeptness at anticipating what types of implementation challenges might arise and planning ways to keep those challenges from getting in the way of learning. For example, one teacher, knowing that her set of student laptops could not be connected to the Internet in her classroom, used a thumb drive to install a local version of Google Earth on each laptop. Then, after being told by the STORE principal investigator that the local Google Earth access would prevent students from generating numerically accurate elevation profiles, she resegmented her lesson. First she asked students to rate locations in the New York study area according to high, medium, and low, reserving the identification of real values for a class discussion. In the discussion, she projected the real values on her SmartBoard from her teacher computer, which was the only computer in the class that had Internet access.

A different teacher, after observing that his students were not following textual directions about how to use the relevant Google Earth functionality nor watching him demonstrate how at his presentation station, decided instead to use a special application to demonstrate. This application allowed him to take control of all the students’ computers. It caused more students to pay attention, because they could not continue to work on the computers on their own until he finished his demonstration.

Some planning strategies were suggested by the principal investigator during discussions with the teacher; for example, having students collect vegetation data around the school ground, embedding hypothesis-posing questions in lessons, and comparing weather and climate data. Other decisions were influenced by conversations between teachers during group professional development sessions. For example, in one session, a seventh- and eighth-grade teacher from one school got tips from a ninth-grade teacher at a different school about how better to scaffold tasks designed to get students to express conclusions about relationships between temperature and precipitation averages and climate model-derived projections.

These implementation results are interesting from an exploratory perspective. The scores from the observations, however, represent only a glance at the teachers’ experiences with implementing STORE and should not be taken as an indicator of what may have occurred in their classes on other days.

Confronting Barriers to Persistence With the Innovation

Needs Assessment

The project team strove to enact professional development strategies that would maximize the likelihood of the teachers’ persisting with the innovation over multiple years. The strategy was informed by a needs assessment in the form of a teacher survey delivered in the middle of the project. Administering the survey at that point gave teachers an opportunity to react to the strategies already in place while still leaving time to change those strategies if needed. The following are highlights. On a scale of 4 to 1 (4 = very high, 3 = somewhat high, 2 = somewhat low, 1 = very low), teachers were asked to rate their perseverance with STORE. The mean rating was 2.9. Then, items asked participants to rate their agreement with statements about perseverance. Again, these were on a 4-point scale (4 = totally agree, 3 = agree more than disagree, 2 = disagree more than agree, 1 = totally disagree). The means on these items, from highest agreement to lowest, were as follows:

- “It would be easier to persevere with STORE if other teachers at my school were also participating.” (3.3)

- “Even though there have been many e-mails about STORE features and there is now the STORE master website from where you can go to get to any of the STORE resources, I find it hard to commit the time I feel is needed to study all these resources independently and use them in my instructional planning.” (2.9)

- “It would be easier to persevere with STORE if there was a clear relationship between doing STORE activities with my students and the tests I’m accountable to give to them.” (2.7)

- “It would be easier to persevere with STORE if my administrators (i.e., the principal, science department lead, or district administration) were committed to STORE and recommending that teachers use it.” (2.7)

- “I came into the STORE project very motivated to participate but because I have so many other responsibilities, it is challenging to persevere with the project.” (2.4)

These responses suggest that teachers saw challenges in persevering with the project and that, hypothetically speaking, perseverance would increase if they worked with school colleagues who were also implementing and if they had more time for building mastery via independent study of the project resources. These conditions would make bigger differences than would alignment of the resources to accountability tests and support by administrators. The following comments elaborate on what the teachers were thinking concerning available time:

- “Although I had hoped I might spend more time on STORE I have managed to implement some, and the experience has been good for me and for my students. When and how I implemented STORE lessons has changed from my initial plans.”

- “As with so much of what classroom teachers encounter, time is the most precious commodity we have. During the school year there isn’t a day that goes by where the choice to either grade papers and give timely feedback that doesn’t interfere with our desire to develop curriculum, collaborate with colleagues, have more time to talk with students, etc.”

- “I am working on an additional credential which is taking up much of my time.”

- “The help that [the principal investigator] has offered has been very useful but it might be difficult to carve out more time for this.”

- “Time is precious. Every minute of every day is booked up and as awesome as the STORE activities are, they represent a small percentage of what an educator deals with each year. It becomes a cost/benefit problem where you have 150 students, two or more types of classes you teach, thousands of documents to plan, prepare, print, grade, etc. You end up putting your time where it does the most good.”

In some comments, teachers elaborated about how having colleagues share in the innovating improves their persistence.

- “I did have another teacher at my school working with STORE and they have a good knowledge of GIS, so that helped me get over the rough implementation of STORE activities and to brainstorm some possible solutions.”

- “I have a teacher at my school doing it.”

- “I’m lucky to have one teacher at my school who participates.”

- “One other teacher at my school is participating with STORE, and it has been very helpful to discuss with that person how STORE is being used and what challenges have been faced and how those have been overcome. The discussions have been one of the best part about STORE for me, personally. They have allowed me to connect with my colleague in ways I would not have previously and have helped us both to persevere.”

Yet, one teacher gave a conditional response: “I think this depends on the teacher. I have a unique class in teaching AP Environmental Science (the only one at my school) so this didn’t necessarily apply to me.”

Teachers who commented were not concerned about to what extent STORE aligned to their school testing and accountability system and were not perplexed about accommodating STORE in that system.

- “I’ve modified some test items to accommodate the STORE activities that I used.”

- “It can’t always be about the test. Sometimes critical thinking activities like STORE help students to be better learners and hopefully that will allow students to do better on their tests.”

- “The AP Environmental Science exam isn’t taken by all the students in the class. Also, the content areas of the STORE curriculum fits perfectly with the expected learning outcomes.”

- “There is enough tie-in that I could justify the time spent to a test-centric administrator if necessary.”

Three teachers also expressed in their comments a sense that administrators would not make a big difference in implementation, suggesting that these three were a busy yet independently thinking group who had their own metrics for success.

- “Administrators haven’t had any influence one way or the other in this project.”

- “I think it is more motivating when ideas come from within, rather than from another source; thus, if an administrator had deemed STORE important and recommended we use it, I think there may have been some resistance. Maybe not, but that’s my impression. It may have been viewed as another fad in curriculum – the latest whiz-bang thing… On the other hand, if the administration really bought into the concept and provided support in different ways, I could see how it could have helped with perseverance.”

- “This doesn’t matter in my case.”

Two notable exceptions to these teacher perspectives about the importance of administrators were observed. These views were expressed by teachers who did not take the survey because they were not in the group of 12 stipend-receiving teachers. Rather, they heard presentations about STORE in various meetings and conferences. One of them remarked that administrators’ behaviors can disincentivize teachers from adopting innovations if the administrators are hostile to innovating because they worry that in the short term it could yield lower student outcomes as the teachers struggle to master it.

Second, a teacher from a poor urban high school remarked several times in meetings that at his school the principal expected teachers to be fully in compliance with mandated daily curricular requirements. This practice was a disincentive to teachers taking individual initiative to innovate, given the day-by-day preplanned rigidity of the schedule.

With these challenges to persistence, the project team strove to enact professional development strategies that would maximize the likelihood of the intended multiyear adoption of the innovation. They had to make assumptions about what was essential and what was not. Teachers were trained in how to use the data sets and the six predeveloped exemplar lessons, yet they were given much flexibility in how they would receive this training. For example, some teachers were more available for group trainings than were others, so accommodations were made to meet all their training needs on an individual basis, with three daylong training sessions.

Eleven out of 12 could find the time to attend at least one of these trainings, either face to face or by conference call. If teachers were unable to attend a group professional development session, they were allowed to continue participating in the project. The principal investigator would afterward debrief missing teachers about what occurred. In addition, 11 out of 12 received one-on-one sessions with the principal investigator at least once after he observed their implementations. The 12th had to be interviewed without a preceding observation because he did not give the principal investigator advance notice of when he would be implementing. Since some of the participating teachers taught at the same schools as another participant, one to three sessions were held between the principal investigator and each of these four collegial pairs.

In addition, optional monthly afterschool sessions by phone were offered for any teacher who had questions for the project staff or for each other. After two of these, however, this practice was eliminated, because so few teachers participated. Anecdotally reported reasons for nonparticipation included lack of a predetermined focus, competing demands, and the practice of waiting to focus on STORE until a few days before classroom implementation. This latter reason was admitted to by a teacher who said that she always waits like this to plan a unit even though she knows that she should start earlier. She also stated that she, hence, deemed these afterschool sessions unnecessary, because the two that were carried out occurred several months before her implementation.

Results

The strategy yielded high teacher persistence with STORE. Twenty-four implementations were completed, and most of the participating teachers remained committed for the expected 2 years. By the end of Year 3, the promised set of 12 teachers implemented STORE in their classrooms, and by the end of Year 4, 10 had done it twice. One of those 10 did two implementations in Year 3. Two others did their first implementations in the third year of the project (i.e., the 2012-2013 academic year). Of those two, one implemented for his second round during Year 4, but the other did not. He cited a forest fire that hit his school and community as his reason, because the school had to close for a while, thus shortening teaching time and forcing him to make cuts in his scheduled activities. STORE was deemed an activity that could be cut because it required student hands-on work on concepts that could be covered in lectures in less time. Two implementations were by teachers carrying out a third round, even though they were not getting a stipend any more. A different teacher stated that she would have implemented again for a third round in Year 4 but retired from teaching at the end of Year 3.
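The total of 24 implementations is not itemized in the text. One reading that is consistent with the counts in the paragraph above is sketched below: 12 first implementations, 10 second implementations, and 2 third-round implementations. The variable names are illustrative, and the breakdown is an inference from the paragraph rather than a tally reported by the project.

```python
# Counts taken from the paragraph above; the breakdown into rounds is an inference.
teachers = 12
first_round = 12   # all 12 teachers had implemented at least once by the end of Year 3
second_round = 10  # 10 teachers had implemented twice by the end of Year 4
third_round = 2    # two implementations were unstipended third rounds

total_implementations = first_round + second_round + third_round
once_rate = round(100 * first_round / teachers)    # percent of teachers implementing once
twice_rate = round(100 * second_round / teachers)  # percent of teachers implementing twice

print(total_implementations, once_rate, twice_rate)  # 24 100 83
```

On this reading, the tally matches both the 24 implementations reported here and the persistence rates of 100% (once) and 83% (twice) cited elsewhere in the article.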

In the case of four other teachers, there was some attrition as well, though two attritions were due to factors having nothing to do with the project. In Year 2 a teacher volunteered to participate, received a little professional development, committed to implement in Year 3, then left because he accepted a job at a university. A different teacher started on the project as one of the six design partner teachers but took medical leave due to illness. For a few months, he participated in design partner meetings until it became clear that he would not have the opportunity to be a guest implementer of STORE lessons in a colleague’s classroom at his former school. Then, due to no longer having the chance to implement, he left the project.

Only one teacher passed completely on implementation for reasons that may have had to do with interest and commitment. This teacher planned to implement in Year 2 and received professional development accordingly, then was in an auto accident 3 weeks before implementation and decided to postpone the implementation to the following year. For reasons unknown, she did not follow through and did not respond to emails asking for updates about her intentions.

The other teacher who passed completely on implementation was one of the New York State design partner teachers in Year 1. He decided to discontinue participation after that year because he had taken on too many commitments, he said.

The teachers’ perception of STORE’s value was revealed in survey comments indicating desire to persist despite concerns expressed in other comments.

- “Ultimately, I really enjoyed the process of working with the [STORE staff], especially [the principal investigator]. The integration of authentic learning tools continued to encourage me to participate.”

- “I have many duties and I have been able to persevere with STORE even though I take on a lot of different tasks. I’m a strong believer in the value of geospatial technology and have, thus, been motivated to use the STORE curricula in several of my courses.”

- “I came into the project after it had already started and was only involved for 1.5 of the 3 years. I participated for three semesters—one the first year and two this past year. My motivation level has remained high, but it has been a challenge to work the curriculum into my previously existing curriculum; however, I have managed to meet the challenge.”

A big motivator in teacher persistence with STORE was a belief that it was a valuable resource for teaching about climate change. On a 4-point scale, where 4 = totally agree, 3 = agree more than disagree, 2 = disagree more than agree, and 1 = totally disagree, the mean was 3.6 on an item that asked them to react to this statement: “The STORE resources have made it easier for me to teach about climate change.” Comments included the following:

- “It allows the students to see the data in an organized manner. This should strengthen the relationships learned during class.”

- “The STORE curricula have been helpful to me to teach climate science and modeling in ways I previously have wanted to, but haven’t had the resources to (i.e., in terms of technical availability [hardware/software] or data).”

- “The STORE resources allow me to expose students to another side of studying climate change than I would have been able to do otherwise. Having the use of actual data sets that are articulated in such a way to be user friendly is special.”

- “The appeal of this project to me, the instructor, is many layered. I believe that all students should be aware of 1) the evidence for, and consequences of, climate change; 2) how and why precipitation varies in our state and what we should do as citizens to protect it; and 3) the computer technology available and how it is used for scientific analyses. In addition to being part of California State Science standards, these concepts are critical to developing an informed citizenry able to understand that climate change will have far reaching impacts on our future in California. In their future, students will need to make decisions on how to mitigate the climate changes that are coming, including choices regarding water use and storage in California. Finally, our local community college has an excellent geospatial information science program (and instructors!). The STORE project provides me with tools to interest students in that program, collaborate with the instructors there, and possibly help students find a future career.”

Another positive outcome was that, despite occasional technical challenges, all of the implementing STORE teachers persisted in their commitment to engage their students in hands-on STORE GIT activities rather than presenting the data only via printouts or lectures. This finding is consistent with other research about the appeal of GIT to surveyed teachers who reported similar problems yet nevertheless maintained positive attitudes about persevering with the GIT because of its educational value (Baker & Kerski, 2014).

Conclusions

The STORE team aimed to see to what extent teachers would find it appealing to use the STORE GIT and geospatial data in the science classroom, how they would go about using it, and what would make them persist with using it in two classroom implementations. The professional development strategy for meeting these objectives evolved as we studied the teachers’ attitudes and behaviors and made strategic adjustments as needed. Phases of design research were carried out with a small group of self-selected design partner teachers. These design partners provided critical input concerning which GIT to use, what data, and which examples of activities students can pursue with the GIT to investigate the data. The exemplar classroom innovation was student use of the GIT and of at least some of the data, in order to build understanding of some key scientific concepts about weather, climate, and ecosystems. Design partner teachers implemented in their classrooms, as did other teachers who were recruited to participate starting in the second year.

The strategy employed for achieving the goal was to structure with flexibility the resources and interactions with teachers. This strategy led to much teacher persistence (100% implemented once and 83% twice) and to a diversity in how and what they implemented in their different courses, while remaining committed to the essential aspects of the innovation. Though the accompanying foundational curriculum was consensually endorsed by the design partner team, wide latitude was permitted in individual teacher adoption and adaptation.

Project assumptions about what strategies to pursue with the teachers were driven by what the literature has identified as constraints to classroom innovation adoption (DuFour, 2004; Dunne et al., 2000; Louis & Marks, 1998). To address lack of time to learn and pursue the innovation, the project leaders were flexible in how and when they provided professional development and individualized it to be responsive to each teacher’s needs and level of readiness.

Constraints cited in this literature concerning lack of administrative support proved not to be a hindrance to teacher implementation, primarily because, as expressed on surveys, no strong indication came from these particular teachers that they needed such support to carry out their implementations. As to the constraint imposed by lack of a professional learning community, the project did what it could to encourage the teachers to interact through a wiki, a group email list, and in the various group meetings convened by the principal investigator. Yet, though they enjoyed the group interactions during whatever scheduled meetings they chose to attend, the teachers did not avail themselves of all the professional learning community-building structures that the project offered them. Rather, survey and interview comments suggest that most valued were professional interactions on the project between the four teacher pairs who taught at the same schools and shared project participation, as well as with one-on-one or small group interactions with the principal investigator during site visits.

Literature attesting to constraints arising from mismatches between teachers’ capacities and the project’s expectations was both supported and not supported by the project. It was supported in the sense that observations yielded less-than-hoped-for evidence of teachers’ inclinations to facilitate deep student thinking about the lessons’ content and data (the kind described in Schoenfeld, 2011, and Darling-Hammond et al., 2009), yet less supported in the sense that all teachers felt sufficiently comfortable with the innovation to implement student investigations of the data and recognize when they would have to adapt the lessons’ contents to better meet the students’ needs.

The literature positing different levels of teacher commitment to an innovation (Rogers, 1962) proved to be somewhat salient in that different teachers took on more or less responsibility for putting their individual stamps on what they implemented. Through its policy of permitting and encouraging curricular adaptation as long as the geospatial data were involved, the project avoided having these differences become constraints.

Last, the literature attesting to persistence with GIT was supported by STORE outcomes. The teachers could have simply handed their students paper versions of the data visualizations but all, instead, chose to expose their students to the GIT in hands-on computer activities.

Had the project goal been wider, that is, getting teachers to simply use GIT in any way they thought appropriate, open-ended curriculum development by individual teachers would have been an appropriate strategy. Alternatively, if the goal had been a narrower one, to get teachers to use a particular curriculum targeted for specific courses at specific grade levels, consensual curriculum development and common adoption would have been appropriate.

Hence, in keeping with the kind of innovation it was, the STORE project epitomized the Conceptualization, Iteration, Adoption, and Adaptation (CIAA) model that emerged from the project deliberations as salient for bringing about the development of scientific data-centered instructional resources and accompanying teacher professional development. The pathway that STORE took in its distinctively evolving way epitomized the model’s three phases:

- Conceptualization. A scientist-educator team developed exemplar curriculum in tandem with deciding which geospatial data and visualizations would provide the raw material for student investigations that reinforce learning of targeted science concepts.

- Iteration. A small set of partner teachers who teach different courses and grade levels in secondary courses reviewed the lessons and suggested changes, which were then made. Consensus on broad acceptability is needed, but not consensus on appropriateness to all the teachers’ courses and student grade levels.

- Adoption and Adaptation. Teachers adopted and adapted whichever of the exemplar lessons and data they wanted to use and could also add new data or new lessons, provided they used at least some of the exemplar data and the GIT that generates visualizations of the data (these being the only requirements).

The salience of the model is attested to by the high levels of commitment to persist with the innovation that most of the teachers exhibited. Yet, the variances of implementation behavior captured in the rubric-derived ratings suggest that more is needed to ensure that teachers’ commitments to persist also yield optimally effective instructional and classroom management practices.

Suggestions for Further Research

Further research studies could test the salience of this model for different populations of self-selected teachers who are using STORE resources. Researchers could investigate whether the outcomes from this first group of teachers could be duplicated. To stretch the testing of the model even further, it would be appropriate to conduct professional development and implementations of the STORE resources among larger groups of teachers who come from a common school or district, bound by common school cultures, policies, administrators, and demographics. Then, it would be appropriate to test the model with other data-centered instructional resources to see if there may be differences in outcomes among similar test populations of teachers and students relative to the characteristics of those other resources.

Examples of innovation features that could be varied in the determination of model salience include data characteristics (e.g., spatially distributed, temporally distributed, and extent of connectedness to prior knowledge and the visible world), domain (e.g., scientific, societal, or both), subject (e.g., Earth science, biology, and history) and topic (e.g., weather, genetics, and social demographics).

References

Baker, T., & Kerski, J. (2014). The lonely trailblazers: Examining the early implementation of geospatial technologies in science classroom. In J. MaKinster & N. Trautmann (Eds.), Teaching science and investigating environmental issues with geospatial technology: Designing effective professional development for teachers (pp. 251-268). New York, NY: Springer.

Bodzin, A., Anastasio, D., & Kulo, V. (2014). Designing Google Earth activities for learning Earth and environmental science. In J. MaKinster & N. Trautmann (Eds.), Teaching science and investigating environmental issues with geospatial technology: Designing effective professional development for teachers (pp. 213-232). New York, NY: Springer.

Darling-Hammond, L., Wei, R. C., Andree, A., Richardson, N., & Orphanos, S. (2009). Professional learning in the learning profession. Washington, DC: National Staff Development Council.

DeBarger, A. H., Choppin, J., Beauvineau, Y., & Moorthy, S. (2014). Designing for productive adaptations of curriculum interventions. National Society for the Study of Education, 112(2), 300-319.

DuFour, R. (2004). What is a “professional learning community”? Educational Leadership, 61(8), 6-11.

Dunne, F., Nave, B., & Lewis, A. (2000). Critical friends: Teachers helping to improve student learning. Phi Delta Kappa International Research Bulletin, 28, 9-12.

Glaser, B. G., & Strauss, A. L. (1967). The discovery of grounded theory: Strategies for qualitative research. Chicago, IL: Aldine.

Grossman, P., Hammerness, K., & McDonald, M. (2009). Redefining teacher: Re-imagining teacher education. Teachers and Teaching: Theory and Practice, 15(2), 273-290.

Kubitskey, B., Johnson, H., Mawyer, K., Fishman, B., & Edelson, D. (2014). Curriculum aligned professional development for geospatial education. In J. MaKinster & N. Trautmann (Eds.), Teaching science and investigating environmental issues with geospatial technology: Designing effective professional development for teachers (pp. 153-172). New York, NY: Springer.

Louis, K. S., & Marks, H. M. (1998). Does professional community affect the classroom? Teachers’ work and student experiences in restructuring schools. American Journal of Education, 33(4), 757-798.

Merriam, S. B. (1995). What can you tell from an N of 1?: Issues of validity and reliability in qualitative research. PAACE Journal of Lifelong Learning, 4, 51-60.

Mulhall, P., Berry, A., & Loughran, J. (2003). Frameworks for representing science teachers’ pedagogical content knowledge. Asia-Pacific Forum on Science Learning and Teaching, 4(2), Article 2.

Nakicenovic, N., & Swart, R. (Eds.). (2000). Special report on emissions scenarios: A special report of Working Group III of the Intergovernmental Panel on Climate Change. Cambridge, ENG: Cambridge University Press.

Penuel, W. R., Bienkowski, M., Gallagher, L., Korbak, C., Sussex, W., Yamaguchi, R., & Fishman, B. J. (2006). GLOBE Year 10 evaluation: Into the next generation. Menlo Park, CA: SRI International. Retrieved from http://www.sri.com/work/publications/globe-year-10-evaluation-next-generation

Penuel, W. R., Gallagher, L. P., & Moorthy, S. (2011). Preparing teachers to design sequences of instruction in Earth science: A comparison of three professional development programs. American Educational Research Journal, 48(4), 996-1025.

Rogers, E. M. (1962). Diffusion of innovations. New York, NY: Free Press.

Schoenfeld, A. H. (2011). How we think: A theory of goal-oriented decision making and its educational applications. New York, NY: Routledge.

Strahan, D. (2003). Promoting a collaborative professional culture in three elementary schools that have beaten the odds. The Elementary School Journal, 104(2), 127-146.

Author Notes

This material is based upon work supported by the National Science Foundation under DRL Grant No. 101965. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

The author wishes to acknowledge the contributions of Dr. James MaKinster, Anne Elston, Linda Hawke-Gerrans, Kathleen Haynie, and an esteemed group of advisers and teachers, without whom the work would not have been possible.

Daniel R. Zalles, Ph.D.

SRI International

James Manitakos, Ph.D.

Appendix A

Teacher-by-Teacher Information

| Teacher | State & School | Courses | Recruit | Design Partner | Prior Familiarity With GIT | Implementation Years |
|---|---|---|---|---|---|---|
| AA | CA public high serving socio-economically diverse urban and suburban county (2013 estimated median household income: $88,602) | Advanced Placement Environmental Science | informal referral | no | yes | 2-4 |
| CB* | NY public high in rural community (2013 estimated median household income: $50,332) * | General Science | prior contact with project researcher | yes | yes | 2-3 |
| EL | CA public high in urban/suburban community (2013 estimated median household income: $121,074) * | AP Environmental Science | school colleague | no | no | 3-4 |
| GW | CA community college in rural area | Earth Science | school colleague | no | yes | 3 (in two different semesters) |
| JR | Private school in rural CA community | 7th-grade Science and Math | school colleague | no | no | 2-3 |
| JT | same as GW | Geospatial Technology | referral from a high school teacher in same community | no | yes | 2-3 |
| JW | CA public high in rural community (2013 estimated median household income: $30,922) * | Applied Earth Science | school colleague | no | no | 3 |
| KH^ | K-8 private school with a Chinese theme in a large city | 6th-grade Physical Science and Math | prior contact with project researcher | no | no | 3 |
| KM | same as EL | AP Biology | prior contact with project researcher | yes | no | 2-3 |
| LC* | same as JR | AP Environmental Science | prior contact with project researcher | yes | no | 1-4 |
| LS | same as JW | College Prep Earth Science | announcement on professional listserv | no | no | 2-3 |
| TO* | same as CB | AP Environmental Science | prior contact with project researcher, thanks to school colleague | yes | yes | 2-3 |

Note. Teachers are identified by initials that correspond to pseudonyms used to identify their curricular products on the project website, STORE.sri.com. ELL = English language learners; IEPs = Individualized Education Plans; AP = Advanced Placement.

Appendix B

Observation Rubric

- Trait: Instructional Practice

- 3 = A blend of hands-on STORE computer use by students was observed, coupled with opportunities for group discussion, in which the instructor blended direct instruction with elicitation of students’ expressions of higher order understanding via question-and-answer sessions, discussions, or student demonstrations of learning in front of the class.

- 2 = A blend of hands-on STORE computer use by students was observed, coupled with the teacher presenting information to the large group, yet with little or no posing of open-ended questions or other forms of elicitation of expressions of higher order thinking (though such higher order thinking may have been prompted on the teacher’s STORE hands-on activity sheets). Large group time may have been spent on reviewing convergent response questions from prior homework assignments or worksheets.

- 1 = All student content attention was observed occurring exclusively while doing STORE hands-on computer-based tasks; any large group interaction was around procedure.

- Trait: Classroom Management

- 3 = Teacher minimized disruptions and distractions (e.g., student navigation challenges with the technology or student misbehavior) and in so doing maximized the amount of time students could focus on the science learning.

- 2 = Teacher routines were sometimes but not always effective in minimizing disruptions and distractions.

- 1 = Teacher routines were rarely effective in minimizing disruptions and distractions.

- Trait: Technology Implementation

- 3 = Few if any technology performance problems disrupted the lessons.

- 2 = Some technology problems disrupted the lessons.

- 1 = Frequent technology problems disrupted the lessons.
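For readers who want to tabulate ratings from this rubric, the following is a minimal sketch of one way to encode the three traits and summarize the spread of scores across observed teachers. It is not the project's analysis procedure. The names `Observation`, `TRAITS`, and `summarize`, the teacher labels, and the example scores are all hypothetical and are not project data.

```python
from dataclasses import dataclass
from statistics import mean, pvariance

# The three rubric traits, each rated on the 1-3 scale defined above.
TRAITS = (
    "instructional_practice",
    "classroom_management",
    "technology_implementation",
)


@dataclass(frozen=True)
class Observation:
    """One observed class session rated on the rubric (hypothetical structure)."""
    teacher: str
    instructional_practice: int
    classroom_management: int
    technology_implementation: int

    def __post_init__(self):
        # Reject any rating outside the rubric's 1-3 scale.
        for trait in TRAITS:
            score = getattr(self, trait)
            if score not in (1, 2, 3):
                raise ValueError(f"{trait} must be 1, 2, or 3, got {score!r}")


def summarize(observations):
    """Return the mean and population variance of the ratings for each trait."""
    summary = {}
    for trait in TRAITS:
        scores = [getattr(obs, trait) for obs in observations]
        summary[trait] = {"mean": mean(scores), "variance": pvariance(scores)}
    return summary


if __name__ == "__main__":
    # Illustrative ratings only; these are invented, not drawn from the study.
    example = [
        Observation("T1", 3, 3, 2),
        Observation("T2", 2, 3, 3),
        Observation("T3", 1, 2, 3),
    ]
    for trait, stats in summarize(example).items():
        print(trait, stats)
```

A higher variance on a trait in such a summary would indicate that teachers differed more on that aspect of implementation, which is the kind of variation the rubric-derived ratings were meant to capture.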
