The emergence and increasing usage of tablet technologies are changing the work of teacher educators (Murray & Olcese, 2011). As the post-PC age progresses (Norris & Soloway, 2011; Smlierow, 2013; Wingfield, 2013), computers and CD-ROMs, once the dominant instructional technologies in preK-12 classrooms, are being replaced with tablets and applications (or “apps”; Johnson, Smith, Willis, Levine, & Haywood, 2011; Waters, 2010).

As these new technologies become more ubiquitous and available, teacher candidates need tools to guide their selection, integration, and effective use of apps as they begin their teaching careers. Although online listings of recommended educational apps are available (Dunn, 2013; eSchool News, 2013; Heick, 2013), these listings typically only provide a brief description of an app rather than develop conceptual understanding of how an app could be used in the classroom and are, therefore, not practical enough to facilitate strong instructional planning and delivery.

Instead, teacher educators must extend candidates’ conceptualizations of apps by first explaining to them what apps are and then how to think about selecting apps for educational purposes. Furthermore, candidates must develop skills for integrating apps into their instructional practices.

To support this work, we provide a brief history of apps, describe the classification systems that informed our work, and then outline a framework for classifying apps. This framework can be taught by teacher educators and, in turn, used by teacher candidates as they begin their professional teaching careers to guide their selection of the apps that are most effective for instructional purposes.

Defining Apps

Although many teacher candidates use apps daily, understanding what an app is and having a context for how apps have evolved will help build their conceptualizations of apps. An app is essentially a small computer program that can be quickly downloaded onto a mobile computing device (such as a tablet or smartphone) and immediately engaged without rebooting the device (Lucey, 2012; Pilgrim, Bledsoe, & Reily, 2012). As of October 2013, most apps were programmed to run on either Apple’s iOS operating system (Apple Inc., 2013) or Android, Google’s Linux-based operating system (Droid, 2012); consequently, Apple and Google are the world’s largest providers of apps (Godwin-Jones, 2011).

There are both free apps that do not require money to download and paid apps that cost users $0.99 or more to download. Apple customers are directed to the App Store, which was founded in 2008 with 500 apps, to browse, purchase (if necessary), and download apps for their iPads, iPods, and iPhones. As of May 2013, Apple customers had downloaded more than 50 billion apps (Apple Press Info, 2013; Woollaston, 2013).

Also founded in 2008 and formerly known as the Android Market, Google Play allows Google customers to browse, purchase (if necessary), and download apps for their Android smartphones and tablets. By June 2012, 20 billion apps had been downloaded from Google Play (Fingas, 2012).

As of October 2013, App Trace (http://www.apptrace.com), a website specializing in app analytics, reported that 618,064 free apps and 382,867 paid apps were available to be downloaded. However, teachers searching the App Store or Google Play will likely encounter challenges when identifying apps to use with their students because of the way these stores classify apps.

Challenges in Selecting Apps by Subject Area and Function

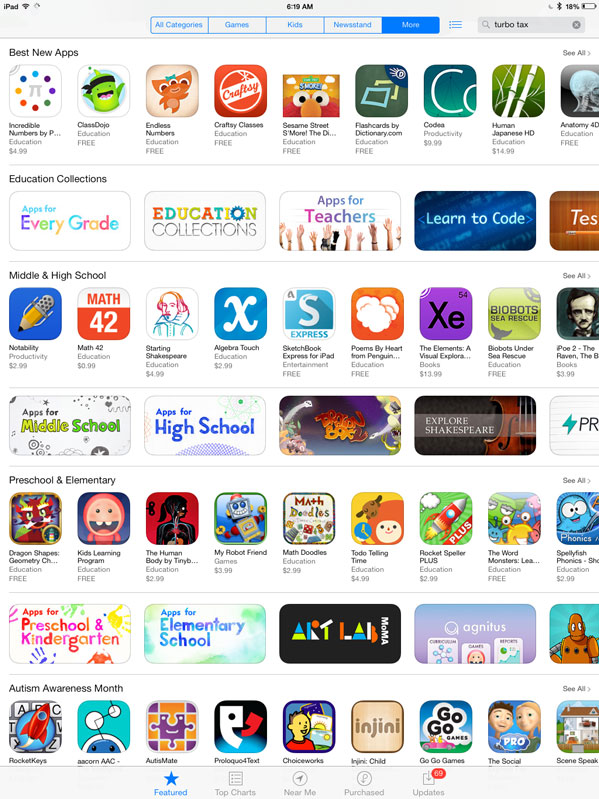

With over 20,000 educational apps available for download (Rao, 2012), teachers visiting the App Store or Google Play can quickly become overwhelmed when seeking to select the most effective app for their instructional needs (McGarth, 2013; Walker, 2011). In the App Store, for instance, when teachers search for “education” apps, they are presented the menu shown in Figure 1.

Figure 1. Screenshots of the App Store’s Education section, March 31, 2014.

From these menu options, teachers must decide how they want to browse apps. If they choose to browse the menus for “Best New Apps” or view apps by grade level (e.g., apps for Middle & High School or apps for Preschool & Elementary) on this screen, the App Store lumps apps together using no specific organization pattern. This lack of organization results in teachers having to search through apps that could be used in any subject area and for a variety of purposes.

The first app shown for Middle & High School (Figure 1) is Notability, an app used to help students take notes, followed by Math 42, an app designed to assist students in solving math problems, and Starting Shakespeare, an app designed to introduce students to Shakespearean works. The lack of organization impedes teachers from finding apps quickly and efficiently because the App Store clusters apps together without considering subject area implications.

A middle school social studies teacher looking for an app using this menu would have to scroll to the 18th app before finding Today’s Documents by the National Archives (not shown in Figure 1), the first app indexed by the App Store that is specific to the social studies classroom.

Today’s Documents shows a primary source and provides background about how the primary source is connected to a specific calendar date. If that social studies teacher wanted to use an app to develop students’ abilities for analyzing primary sources, this app would be beneficial. Yet, to find an app to develop students’ map reading skills, the teacher would have to scroll to the National Geographic World Atlas app, which is the 42nd app listed in this index, to find an app that includes maps.

Because the App Store does not use an effective organizational strategy for categorizing apps on its main menu for education apps, teachers may spend a significant amount of time trying to locate apps that align with their instructional needs. However, if teachers select the “Education Collections” option from the main menu, the App Store does a better job in categorizing apps.

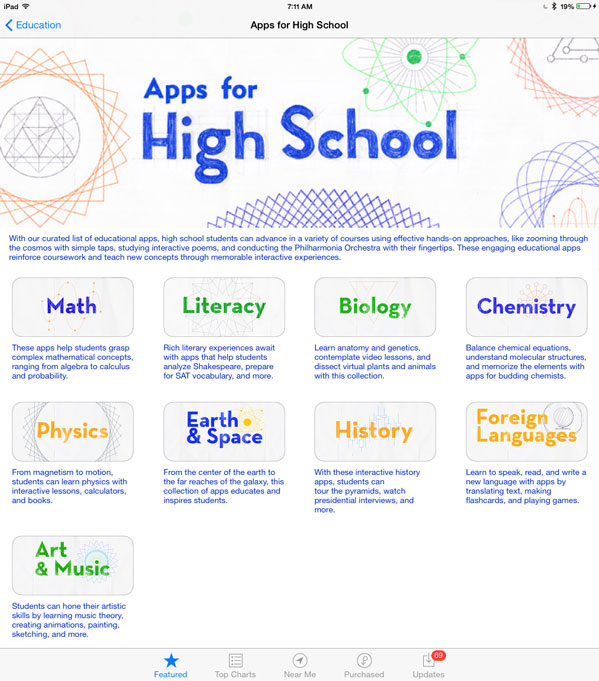

When selecting an Education Collections option such as Apps for Every Grade, Apps for Middle School, or Apps for High School, the App Store loads a menu that allows teachers to select a specific subject area, as shown in Figure 2.

Figure 2. Screenshots of the App Store’s menu that allows high school teachers to select a specific subject area, March 31, 2014.

The options provided in this menu, however, are not consistent. For example, whereas the subdisciplines for Math (e.g., Algebra, Geometry, and Calculus) and History (e.g., American History, World History, Civics, American Government, and Economics) are lumped together, the subdisciplines for Science (e.g., Biology, Chemistry, Physics, and Earth & Space) are listed as separate subject areas. Yet, English language arts is not classified as a subject area; rather, it is renamed Literacy. Teachers of any discipline trying to locate apps must select the option that most closely aligns with their subject area, which again becomes complicated when considering apps’ purposes.

For example, if biology teachers are looking for an app, they would select the Biology option from the menu shown in Figure 2, and they would be presented with the classifications shown in Figure 3.

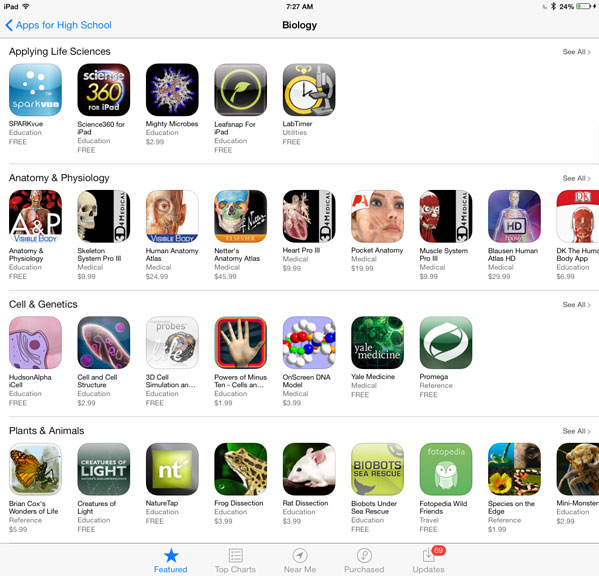

Figure 3. Screenshots of the App Store’s categories for Biology, March 31, 2014.

The challenge is that even these more nuanced classifications do not consider the function of specific apps, and the apps classified as Plants & Animals illustrate this challenge.

Biology teachers searching for apps about endangered species would likely be drawn to the apps classified as Plants & Animals on this screen. When browsing these apps, biology teachers are presented with apps that index animals, include videos of animals in their habitats, and guide students through virtual animal dissections. Because each of these apps has a distinct function (such as presenting information to students in the form of text, images, and videos; quizzing students about related topics; and letting students explore animal cadavers), biology teachers must review these apps until they find one that meets their specific needs, which can become complicated. Biology teachers who want their students to complete a research report about an endangered species would need to choose multiple apps. Specific apps needed for this assignment include apps that present information to students, ask students questions about endangered species, and let students document their learning in the form of a document, presentation, song, video, or image.

Teachers likely could identify apps that present information about endangered species and perhaps find an app that asks students questions about endangered species; however, they would not find an app that allows students to document their learning. To find that type of app, teachers would need to browse the entire App Store’s Education section, because there is no specific category for apps with that type of functionality when searching for apps by subject area. This lack of clarity when considering how apps are classified by subject area and function carries over to the website databases that index apps.

Existing Databases for Selecting Educational Apps

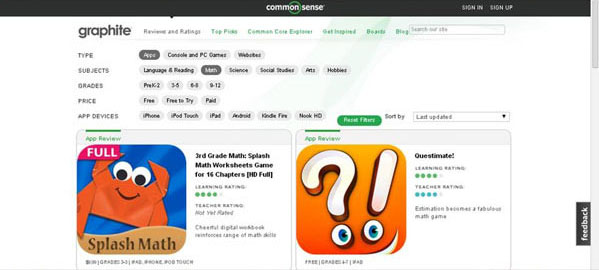

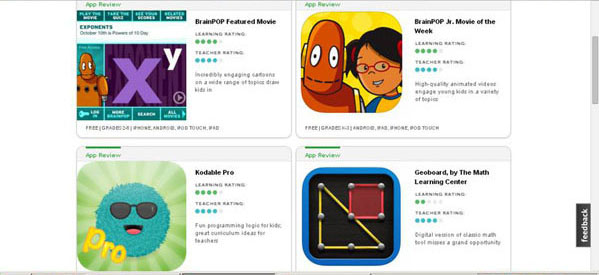

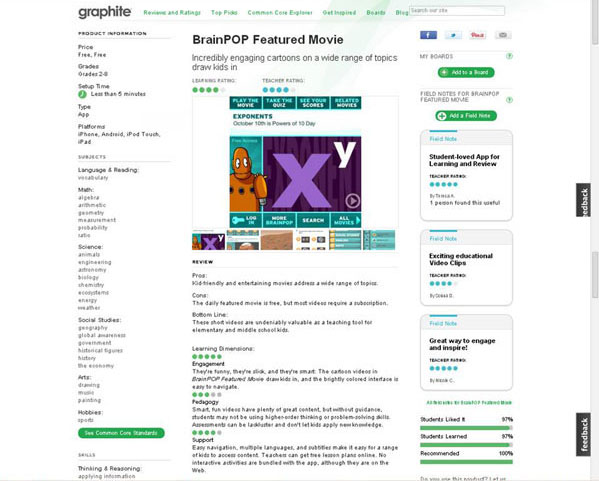

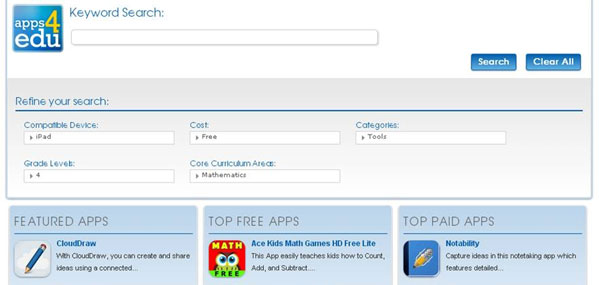

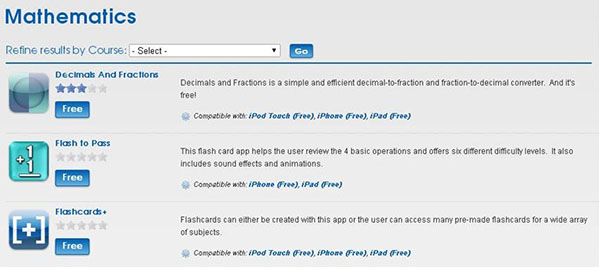

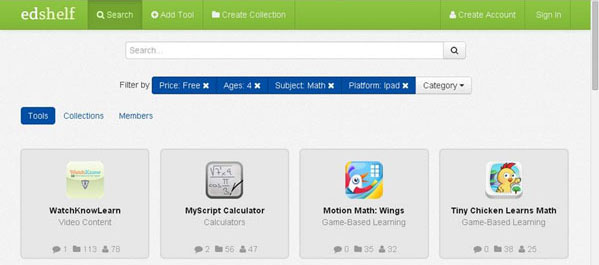

Outside of the App Store, Google Play, top apps for education lists, and app recommendations from colleagues and leaders of professional development sessions (Highfield & Goodwin, 2013), only a handful of databases are designed for parents and teachers to search for educational apps. Examples of these databases include Graphite.org created by the Common Sense Media company, Apps4Edu created by the Utah Education Alliance, EdShelf created by the EdShelf company, and Appitic supported by Apple Distinguished Educators. [Editor’s Note: Website URLs can be found in the Resources section at the end of this paper.]

Each of these databases contains searchable indexes of educational apps that provide users with a description, general evaluation, subject area classification, and information about the app’s cost and device compatibility. Appendix A shows screenshots related to how these databases provide information to their users. Although these considerations are useful in a general sense, they are of limited use to the teacher education community, because an app’s purpose is not included in the information these databases provide.

Illustrating the Significance of Purpose

Whereas these website databases allow teachers to search for apps using more specific criteria than does the App Store, they still provide only limited data about specific apps that can be grouped together. Although multiple apps may seem to address the same subject area, skill, content, or knowledge, the way they do so differs, resulting in widely varying effectiveness. For example, both the apps MeMe Tales and One Minute Reader are designed to develop young children’s reading abilities, but they use very different approaches. MeMe Tales is designed to build enjoyment for reading by allowing children to choose a colorful book and then decide if they would like to read the book aloud or have the app read it to them.

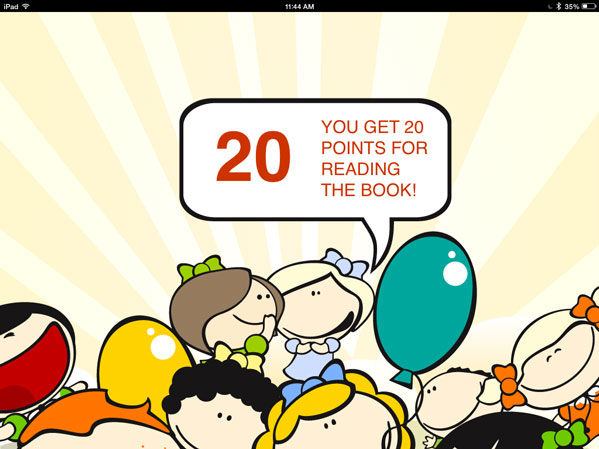

After reading the book or having it read to them, MeMe Tales awards children points (Figure 4). In this way, MeMe Tales encourages children to enjoy reading without using assessments.

Figure 4. A screenshot of how MeMe Tales uses points as a reward for reading, March 30, 2014.

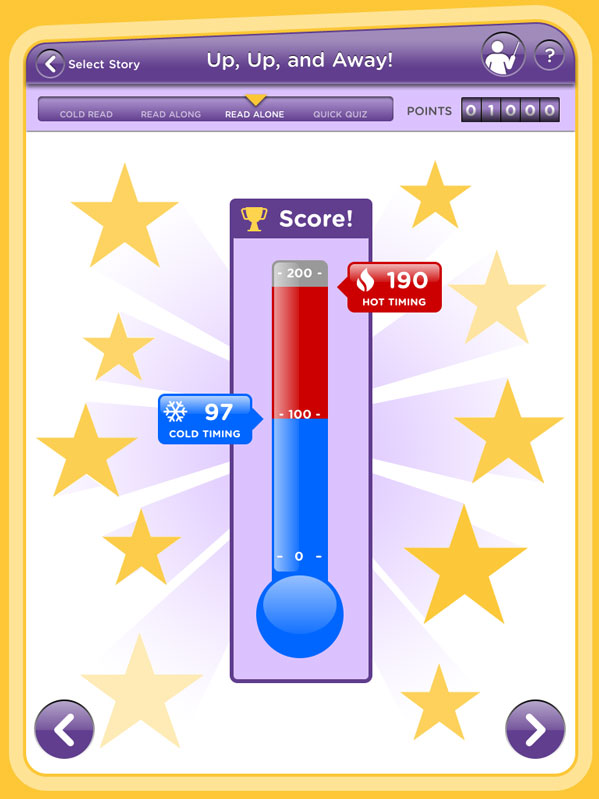

On the other hand, One Minute Reader is designed to develop children’s reading skills by using a variety of fluency and comprehension assessments. One Minute Reader first assesses the number of words children can read per minute by timing them on a cold (first time) reading passage. Next, the app models a fluent reading of the same passage by reading it aloud. Children then read the same passage a third time (hot reading), and the app tracks the number of words read per minute to determine if there was an increase in reading fluency during the third reading as compared to the cold reading score (Figure 5).

Figure 5. A screenshot of One Minute Reader reporting the number of words read during a cold reading against the number of words read during hot reading, March 30, 2014.

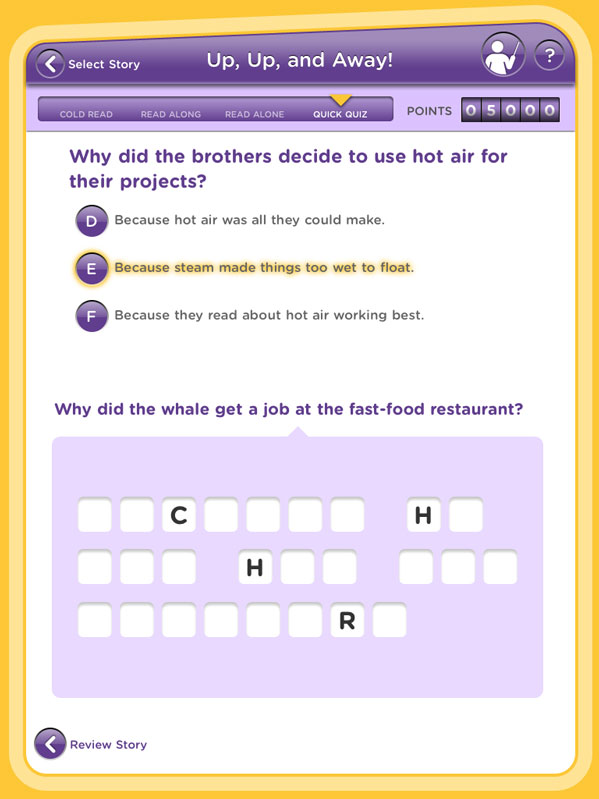

Last, the app asks comprehension questions to monitor children’s understanding of the passage (Figure 6).

Figure 6. A screenshot of a comprehension question used by One Minute Reader, March 30, 2014.

MeMe Tales uses a low stakes, nonevaluative approach to developing children as readers, whereas One Minute Reader uses a higher stakes approach that features multiple assessment points. Because both MeMe Tales and One Minute Reader focus on developing literacy skills, existing app databases classify these apps only by subject area (e.g., Reading or Language Arts), which is misleading and counterproductive. By labeling them as Reading or Language Arts apps, the databases do not differentiate and report their specific purposes. The teacher must then conduct additional research to understand how the app functions and what its purpose is. A framework to support the classifications of apps that uses criteria for discerning the differences between apps with similar subject area implications—in this case, developing children’s reading abilities—would help teachers consider which apps are most appropriate to use with their students.

Review of Existing App Classifications Systems

Although the advent of iPads and tablets is fairly recent, the presence of computers in classrooms is not. Likewise, evaluation of educational software is nothing new. Researchers have been studying how computers impact student learning for decades (Clements & Nastasi, 1988; Haugland, 1992; Wolfram, 1984). Therefore, it is logical to begin an evaluation of instructional apps by borrowing from extant practice, then refine as needed. This strategy provides insight into how researchers classified computer software and demonstrates how the task of creating a classification framework for categorizing instructional apps builds upon the work of previous researchers. Because the evaluation process largely differs from the classification process, we focused specifically on the selection of articles representing how researchers established organizational frameworks in classifying educational software, not assessing the quality of educational software.

In an early analysis of how schools were using computers after they were first introduced, Pelgrum and Plomp (1993) used a questionnaire to collect data from 60,000 educators worldwide. As part of the questionnaire, respondents were asked to classify their school’s available educational software into one of 23 categories (see Appendix B for a complete list of the categories). Although Pelgrum and Plomp did not provide a description for the categories, they did identify that so-called drill-and-kill software, educational games, and word processing programs were the three most frequent uses of computers in elementary schools. Conversely, the software identified as least used in elementary schools included programs for interfacing with labs, producing interactive videos, and designing/making products.

Additionally, when comparing software used in elementary schools to software used in lower secondary (middle) and upper secondary (high) schools, Pelgrum and Plomp found that word processing programs, software that taught programming languages, spreadsheet programs, and database programs were the four most frequently used. Similar to elementary school findings, the software used least frequently in lower and upper secondary schools included programs for interfacing with labs, storing questions for tests, and producing interactive videos. A critique of Pelgrum and Plomp’s work is that their classification framework considered only the function of the software instead of what users are able to do with the software (McDougall & Squires, 1995). As such, other classification systems for categorizing educational software were later developed.

Bruce and Levin (1997) proposed a taxonomy for classifying educational technologies that consisted of four main categories with subcategories embedded in each. Bruce and Levin chose the term media instead of software or programs “to shift the focus from the features of hardware or software per se to the user or learner” (p. 83). In this way, they honored McDougall and Squires’ (1995) suggestion by considering how users engage the technology being classified.

The first category of Bruce and Levin’s taxonomy is Media for Inquiry, which contains four subcategories: (a) Theory Building, (b) Data Access, (c) Data Collection, and (d) Data Analysis. Each subcategory is designed to support users in formulating and answering questions. For example, when users are working to answer a question, they would use the Theory Building subcategory to assist them in understanding the question. Next, they would use the Data Access subcategory to identify previously discovered knowledge about that question. Users would then draw on the technologies in the Data Collection subcategory to assist them in gathering and storing information related to their work. Finally, the Data Analysis subcategory would support users in interpreting the data collected. Thus, the Media for Inquiry technologies are classified together because they all support users as they seek knowledge to answer their inquiries.

The category Media for Communication contains four subcategories: (a) Document Preparation, (b) Communication, (c) Collaborative Media, and (d) Teaching Media. Each subcategory is designed to support users in sharing their work. For example, Document Preparation contains technologies to assist users in creating artifacts of their work, then users engage the subcategories of Communication technologies to share those artifacts.

The Collaborative Media subcategory consists of technologies that allow multiple users to contribute to one artifact together, and the Teaching Media subcategory contains technologies to support users in teaching the knowledge they produced or gained when using the Media for Inquiry technologies. The technologies in the Media for Communication category were classified together because they all help users disseminate information related to their inquiries.

The third and fourth categories do not contain subcategories, and Bruce and Levin (1997) described each of these categories with bulleted lists. For instance, the category Media for Construction is designed to include technologies that help users “affect the physical world” (p. 6). This category would include technologies that allow users to design pieces of architecture or alter physical landscapes. Media for Expression, their final category, includes technologies for creating images, audio recordings, and multimedia projects. The major difference between these two categories is that technologies in the Media for Construction category result in concrete, tangible objects (e.g., blueprints for a house), while technologies in the Media for Expression category result in digital artifacts (e.g., a slideshow movie or a music video).

In their review of technologies, Bruce and Levin found that 58.9% were aligned to the Inquiry category, 36.9% were aligned to the Communication category, 4% were aligned to the Construction category, and no technologies applied to the Expression category. Bruce and Levin claimed that technologies were not included in the Expression category because “personal expression in the sense Dewey meant” (p. 90) was not emphasized, and they recommended additional research be conducted in response to this finding.

Although multimedia composition software and animation programs that allow users to personalize their projects are now available, these technologies were still developing at the time of Bruce and Levin’s work, which may also explain why their Expression category did not include any technologies.
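As an illustrative aside, Bruce and Levin's taxonomy and the shares reported above can be laid out as a small data structure. This is only a sketch: the dictionary layout and the names `taxonomy`, `reported_share`, and `total_share` are assumptions made for illustration and do not come from Bruce and Levin (1997).

```python
# Bruce and Levin's (1997) taxonomy as summarized in the text.
# The dictionary layout and all variable names are illustrative assumptions.
taxonomy = {
    "Media for Inquiry": [
        "Theory Building", "Data Access", "Data Collection", "Data Analysis",
    ],
    "Media for Communication": [
        "Document Preparation", "Communication",
        "Collaborative Media", "Teaching Media",
    ],
    "Media for Construction": [],  # described without subcategories
    "Media for Expression": [],    # described without subcategories
}

# Percent of reviewed technologies aligned to each category, as reported.
reported_share = {
    "Media for Inquiry": 58.9,
    "Media for Communication": 36.9,
    "Media for Construction": 4.0,
    "Media for Expression": 0.0,
}

# Categories that carry subcategories, and the sum of the reported shares.
with_subcategories = [name for name, subs in taxonomy.items() if subs]
total_share = round(sum(reported_share.values()), 1)

for name, share in reported_share.items():
    print(f"{name:<25} {share:>5.1f}%  subcategories: {len(taxonomy[name])}")
print("Sum of reported shares:", total_share)
```

The reported shares sum to slightly less than 100%, which presumably reflects rounding in the published figures.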

After analyzing these two types of classification frameworks—a framework that focuses only on the functionality of the computer software and another that considers how users engage the computer software—we determined that a classification system must consider both the purpose and practice of the technologies being classified, and the use of both categories and subcategories was an effective grouping strategy. With this in mind, we then searched for classification frameworks constructed specifically for apps.

Searching for preexisting classification frameworks was challenging because the field of educational apps is still a relatively new topic of research. After experimenting with different search terms that used varying word combinations of app, education, and classify, the search term “education apps” classification was selected. This search term was selected because when other terms were used, nearly all the articles reported were off topic or did not relate to classifying educational apps. When “education apps” classification was used, two studies were found.

Handal, El-Khoury, Campbell, and Cavanagh (2013) reviewed more than 100 apps designed for primary and secondary math classrooms. The authors classified the apps into one of nine categories by analyzing each app’s content for “the kind of learning activities associated with the app, the instructional experiences supported by the app and their media richness, [and] the anticipated levels of cognitive involvement and users’ control over their learning” (p. 144).

Although this article did speak to McDougall and Squires’ (1995) demand to consider the learner’s interaction with the software, the classification system’s limitation to math apps made it challenging to extend its findings to other subject areas. Additionally, the nine categories the authors created convoluted their findings more than they helped to clarify them. Having to consider nine different possible categorizations may overwhelm teachers when deciding how to use an app, which can consume teachers’ limited instructional planning time. Therefore, we found the nine math-specific categories to be an inefficient app classification model.

Australian researchers Goodwin and Highfield (2012a,b) investigated the pedagogical design of 240 apps, for which they created three classifications. Their classifications included Instructive, Manipulable, and Constructive apps (and two hybrid classifications: Constructive/Manipulable and Manipulable/Instructive).

Instructive apps are described as promoting rote memorization of content through drill-and-skill activities. These apps require students to practice a skill repeatedly in order to increase their accuracy using the skill, and these apps can be likened to digital worksheets. Manipulable apps provide students with guided discovery, which allows them to make choices about the topic they are learning and how they demonstrate that learning using a preconstructed context, template, or structure. For example, students using a search engine to gather information about a topic would be using a Manipulable app because they have the freedom to select their search terms, but they are limited to the results reported by the search engine.

Constructive apps are described as providing students with open-ended contexts, templates, or structures allowing them to create learning artifacts (e.g., images, videos, or texts). For instance, when students create a PowerPoint presentation, they engage an open-ended template, in which they have the power to design the presentation and its content to their liking.

Of the 240 apps they reviewed, Goodwin and Highfield labeled 75% as Instructive, 23% as Manipulable, and only 2% as Constructive. They concluded that an alarmingly high number of apps targeted for young learners are Instructive, and they called for more Constructive apps to be developed. In reviewing their research, we supported Goodwin and Highfield’s concepts for delineating apps by their instructive, manipulable, and constructive qualities; however, they did not fully develop these categories.

While Goodwin and Highfield’s (2012a,b) framework provides a structure for classifying educational apps, it does not consider the value of an app’s specific skills or purposes. Rather, they made generalizations about large groups of apps. For example, Tap Quiz Map is an app that uses memorization strategies and quizzes to help students recall specific geographical places, and its purpose is for students to remember the names and locations of exact places. Another app, Phonics Genius, repeatedly shows and pronounces the sounds of letter combinations to build students’ phonological awareness, and its purpose is to develop students’ reading abilities.

Using Goodwin and Highfield’s classification system, both of these apps would be labeled as Instructive, because they rely on memorization to teach their content. The problem is that the value of the skill being taught is not considered when using their classifications; instead, Goodwin and Highfield consider only the instructional method. Whereas students can look up the capital of Oklahoma in order to answer a Tap Quiz Map question, they would not be able to read that information without being phonologically aware, which is a skill that Phonics Genius develops.

By labeling all apps that use repetition to build students’ knowledge as Instructive, Goodwin and Highfield are discounting that some Instructive apps teach students relevant, foundational skills needed to engage Manipulable and Constructive apps, while other apps, such as Tap Quiz Map, teach only isolated knowledge that has little use to students (Daggett, 2005). To prepare teacher candidates to integrate apps effectively into their instructional practices, a classification system is needed that uses categories and subcategories to analyze the skills apps teach, recognizes how apps are interrelated, and provides examples of these skills and interrelations.

Grouping apps into disparate categories without also considering the purpose of the knowledge students acquire from the apps does not help teacher candidates make these vital connections. However, a framework that breaks Goodwin and Highfield’s classification system down into more precise categories and subcategories and is more closely aligned to an established framework of teacher knowledge, such as technology, pedagogy, and content knowledge (TPACK; Koehler & Mishra, 2005, 2009), would recognize these subtle nuances.

Methodology for Developing a Framework for Categorizing Apps

When planning how to conduct this work, we used qualitative research methods (Coffey & Atkinson, 1996; Glense, 2006), because classifying apps is an act of interpretation, which requires the researcher to become a research tool (Connelly & Clandinin, 1990).

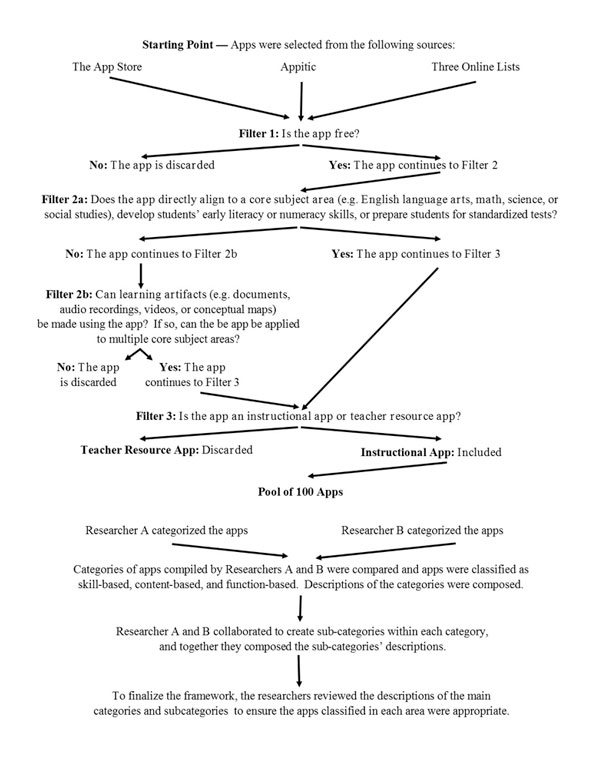

To develop our framework for classifying apps, we reviewed 92 apps; Appendix C shows a visual representation of our app selection and classification process. Because over 20,000 educational apps have been developed, purposeful sampling techniques were employed (Glense, 2006). Our first selection criterion was that the app had to be free of charge. Because policies about school districts and teachers being able to purchase apps vary greatly, we wanted to safeguard against any potential challenges related to cost. Using only free apps in developing our framework allowed us to avoid those potential pitfalls.

We also selected apps across the subject areas of English language arts, mathematics, science, and social studies. Unlike the classification system created by Handal et al. (2013) that classified apps only for the mathematics classroom, our framework was closer to the one designed by Goodwin and Highfield (2012a,b), which can be used by teachers in all subject areas. Although apps for art, music, and physical education among several other subject areas exist, it was more practical to limit our framework to the core curriculum subject areas present in public schooling.

We agreed that practicality and ease of use were important components of our work, and we found that the educational sections of both the App Store and Google Play contain apps for each subject area. However, we still needed to reduce the number of apps we were to review for our classification system to a practical number using specific selection criteria.

After working with these initial criteria, we discovered that not all educational apps have a specific subject area. For example, educational apps such as Educreations and Popplet can be used in multiple subject areas. Educreations is an app that allows users to create a shareable video about a specific topic. Popplet allows users to create conceptual maps and graphic organizers about almost any topic.

Both apps can be used after reading a specific content-area text. For example, students can respond to the text they read by creating a video using the Educreations app to elaborate on the text or offer a critique of it. Students can use the Popplet app to design a conceptual map in response to the text to display their understanding of it. Because both of those apps can be applied to multiple subject areas, the selection of apps by subject area only was impractical. In response, we identified non-subject-specific apps by reading the description of the app posted in the App Store, which led us to the final filter.

Two other relevant types of apps are Instructional and Teacher Resource. We defined Instructional apps as apps that help teachers deliver content knowledge or build students’ critical thinking skills and Teacher Resource apps as apps that help teachers manage a class or plan lessons. Teacher Resource apps were not included in our framework. For instance, Class Dojo is an app designed to support teachers in managing their classrooms, and we labeled it as a Teacher Resource app because it does not help teachers deliver instruction to students. The Common Core State Standards app is also a Teacher Resource app because it helps teachers plan instruction, not deliver it. As a result, we delimited our framework to Instructional apps. We used this selection criterion because we wanted to work with a pool of apps to which all teachers had access.

Selecting Apps

To select apps, we consulted the App Store for English language arts, math, science, and social studies instructional resources from across the preK-12 spectrum. We searched the App Store using the terms English language arts, science school, social studies, and math school (the word school was added to science and math, because those two terms are widely used outside of educational contexts; adding the word school to each term narrowed our search to apps designed for educational purposes).

Although some apps can be applied to multiple subject areas (e.g., Educreations and Popplet), several apps can be applied to one specific subject area. To establish validity (Messick, 1989), we chose 10 apps for each subject area that met our selection criteria. Ten apps provided a large enough sample size within each category to gain an understanding of the types and functions of apps designed for the subject area. Additionally, many apps have been created to support students’ standardized test readiness. As such, eight apps designed for this purpose were included. Furthermore, when cross-referencing Shuler’s (2009) review of the top 100 educational apps based on age and subject area, we found that apps for teaching literacy, general learning, and math to toddlers and preschool students represented a significant number of the most popular apps downloaded. Therefore, we purposely selected 10 apps that targeted building toddlers’ and preschoolers’ early literacy skills and 10 apps that targeted building their early numeracy skills.

Finally, because the apps we included at this point were subject-specific apps, test-readiness apps, or apps targeting young learners, we wanted apps that could be used across multiple content areas and to create learning artifacts. As such, we consulted Appitic because it allows users to search its database according to Bloom’s Taxonomy, not only by subject area. Using Appitic enabled us to identify 15 apps that could be applied across subject areas.

Last, because teachers may consult blogs and online lists of best apps for education, we conducted an Internet search using Google and the search term “top apps for education” to identify top online resources (Heick, 2013; Noonoo, 2013; Sawers, 2012) where teachers may go to select apps, excluding the databases we had already consulted. From these resources, we identified an additional nine apps, which brought our count to 92. By following this selection process, we minimized selection bias while generating a pool of diverse, (relatively) popular, and high-quality apps for analysis.

Categorizing Apps

Because analyzing apps involves understanding their functions and purposes, we followed a qualitative coding methodology (Coffey & Atkinson, 1996; Glesne, 2006). To code, we used Means’s (1994) categories for technology analysis (tutor, explore, tool, and communicate) as a basis for our framework, and we modeled Murray and Olcese’s (2011) methodology for analyzing apps according to those categories. In this way, Means’s categories offered us a guide for thinking about how apps could be classified. For each app we identified, we first downloaded and used it to gain a general familiarity with it. Then we asked the following questions:

- What is the primary purpose of this app?

- What does this app require users to do?

- How could teachers use this app in their classrooms?

To ensure reliability (Coffey & Atkinson, 1996; Merriam, 2009), two of this project’s authors examined each app and recorded responses using a spreadsheet. After all the apps were examined, commonalities related to their purpose, requirements, and usages were used to group the apps into skill-based, content-based, and function-based categories.

Also, a Pearson correlation coefficient was calculated for each category (Benesty, Chen, Huang, & Cohen, 2009). We found a .85 correlation for the skill-based category, a .85 correlation for the content-based category, and a correlation of 1.0 for the function-based category. As the numbers of apps placed in each category swelled and nuanced distinctions between the apps in the same categories were recognized, we collaborated to break the larger categories into subcategories. The resulting classification framework is as follows:

- Skill-based

  - Description: Apps that use recall, rote memorization, and skill-and-drill instructional strategies to build students’ literacy abilities, numeracy skills, standardized test readiness, and subject area knowledge.

  - Bloom’s Taxonomy Implications: Remembering and Understanding

- Content-based

  - Description: Apps that give students access to vast amounts of information, data, or knowledge by conducting searches or through exploring preprogrammed content.

  - Bloom’s Taxonomy Implications: Applying and Analyzing

- Function-based

  - Description: Apps that assist students in transforming learned information into usable forms.

  - Bloom’s Taxonomy Implications: Evaluating and Creating
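As a rough illustration of the agreement statistic reported before the framework listing, the sketch below computes a Pearson correlation coefficient for two reviewers’ codes. The 0/1 membership codes, the two reviewer lists, and the function name pearson_r are hypothetical and introduced only for illustration; the data underlying the reported coefficients and the way the codes were scored are not described here.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2 items)")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products and sums of squared deviations from the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical codes: 1 = reviewer placed the app in the category, 0 = did not.
reviewer_a = [1, 1, 0, 0, 1, 0, 1, 0]
reviewer_b = [1, 1, 0, 0, 1, 0, 0, 0]

if __name__ == "__main__":
    print(round(pearson_r(reviewer_a, reviewer_b), 2))
```

A coefficient of 1.0 in this sketch would mean the two reviewers’ codes matched for every app; lower values indicate some disagreement.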

For representative apps in each subcategory, see Table 1.

Table 1

Representative Apps for the Classification Framework’s Subcategories

| Skill-Based Apps | Content-Based Apps | Function-Based Apps | Educational Misfits |
| --- | --- | --- | --- |
| Literacy: Fluency, Grammar, Handwriting, Language Acquisition, Spelling, Vocabulary, Outlier (Joy of Reading), Outlier (Reading Assessment) | Subject Area: English Language Arts, Math, Science, Social Studies | Canvas – Educreation | Intelligence – What’s My IQ HD |
| Numeracy: Digital Flashcards, Games | Reference: Calculator, Encyclopedias, Geography, Language, Search Engines, Video Libraries | Graphic Organizers – Idea Sketch | Trivia – Icon Pop Quiz |
| Science – QuotEd ACT Science | | Learning Community – Edmodo | |
| Social Studies – US State Capital Quiz | | Note Taking – Notability | |
| Test Preparation: State Exam, College Entrance | | Office – CloudOn | |
| | | Presentation – Prezi | |

Skill-Based Apps. Skill-based apps use recall, rote memorization, and skill-and-drill instructional strategies to build students’ literacy abilities, numeracy skills, standardized test readiness, and subject area knowledge. Literacy and numeracy apps typically target students ranging in age from toddlers through elementary school, but students in middle and high school who need literacy and numeracy remediation may also benefit from using them.

The standardized test preparation and subject area apps target students in middle and high school. According to Goodwin and Highfield (2012a,b), because all of these apps place lower order thinking demands on students, they should be labeled as Instructive apps. However, these apps build the foundational literacy, numeracy, and testing skills and the subject area knowledge students need to meet the current and future learning demands placed on them by Manipulable and Constructive apps (and standardized tests), which gives Skill-Based apps value.

To classify Skill-Based apps, the Literacy, Numeracy, Science, Social Studies, and Test Preparation subcategories were developed. Literacy apps develop students’ fluency, grammar, handwriting, language acquisition, spelling, and vocabulary skills. Each Literacy app typically focuses on developing only one of these skills. Few Literacy apps reviewed focused on more than one literacy skill, and those that did concentrated on vocabulary and spelling by requiring students to recall a word’s definition and spell it correctly.

The two groups of Literacy Outlier apps were Joy of Reading and Reading Assessment. Joy of Reading apps were designed for early readers. They consisted of colorful picture books children could read aloud or have read to them by the app, and they typically included some activities children could do during or after the reading. Reading Assessment apps contained evaluations that gathered quantitative data about students’ reading grade level, fluency rate, or reading comprehension to help teachers assess students’ reading abilities.

Numeracy apps were designed to engage students in completing basic mathematical operations (e.g., adding, subtracting, multiplying, and dividing) and used either digital flashcards or game formats to do so. The apps that used digital flashcards displayed a math problem students were to solve by typing in the correct answer, and the game format apps required students to answer math questions to advance levels in the game. Both the digital flashcard and game format apps typically allowed students to select the level where they would like to begin and increased in difficulty as students answered questions correctly.

The Science and Social Studies subcategories are characterized by multiple choice questions or matching activities, where students must select the correct answer from a list of choices or from a geographic region on a map. These apps either build or review students’ subject area knowledge. Challenges with creating these subcategories included identifying English language arts and math apps and limiting the specificity of each subject area. We found no apps that used lower order thinking skills to extend student knowledge of English language arts and math outside of early literacy and numeracy skills. As such, no subcategories were created for English language arts or math.

Apps that taught students foundational science and social studies using lower order thinking skills were found, and subcategories were created. Detailed subcategories were not created, owing to the complexity of the task and the increased level of personal bias this process would inject. For example, the National Council for Social Studies lists its applicable school-based disciplines as “anthropology, archaeology, economics, geography, history, law, philosophy, political science, psychology, religion, and sociology, as well as appropriate content from the humanities, mathematics, and natural sciences” (2013, para. 3). With so many disciplines, the task of creating a subcategory for each discipline within the social studies subject area falls outside this project’s scope. The same is true for developing subcategories for science.

Finally, the Test Preparation subcategory was created for apps that taught students the foundational skills and knowledge needed to be successful on standardized tests. As apps were categorized as Test Preparation, distinctions between apps that prepared students for college entrance exams and states’ standardized tests were soon identified; subsequently, the College Entrance Exam and State Exam subcategories were created. Both subcategories used repeated exposure to test content to build students’ readiness for the tests. Apps in the College Entrance Exam subcategory prepare students for the ACT and SAT, while apps in the State Exam subcategory prepare students for state high-stakes accountability exams (e.g., New York’s Regents Exams and Virginia’s Standards of Learning exams).

When using Skill-Based apps in their classrooms, teachers must be mindful that the rigor of these apps ranks low on Bloom’s Taxonomy (Anderson, Krathwohl, & Bloom, 2001; Bloom, 1956), because students are usually required to recall and remember previously learned knowledge to answer questions. However, the feedback these apps give students is immediate: after answering a question or a small series of questions, students receive information about their performance, which allows them to track their learning easily. While low on rigor, the relevance of these apps ranks high because students will use the literacy, numeracy, testing skills, and subject area knowledge these apps develop as they engage more challenging learning tasks.

Content-Based Apps. Content-based apps give students access to vast amounts of information, data, or knowledge by conducting searches or through exploring preprogrammed content. Students in upper elementary school through high school can use these apps to learn and research topics that interest them; however, students must have the literacy and numeracy abilities that Skill-Based apps develop in order to access the information Content-Based apps provide.

Common characteristics of Content-Based apps are that they do not assess students or require them to complete a learning task; rather, these apps are designed for students to read texts, view images, and watch videos related to specific topics, similar to walking from exhibit to exhibit in a museum. Goodwin and Highfield (2012a,b) would label these apps as Manipulable because they allow students to make some choices about the content they explore in a predetermined context. In this category, the predetermined context is the app’s design for how students search, explore, and engage the app’s content.

To distinguish the design of apps in this category, Subject-Area and Reference subcategories were created. Subject-Area apps contain preprogrammed content designed to deepen students’ understandings of an academic content area (e.g., art, English language arts, math, science, and social studies). These apps are considered top-down apps (Rouse, 2005) because the content students engage is already programmed into the app, limiting students to the preprogrammed content. Reference apps allow students to access, search, browse, and explore a wide variety of topics. These apps are regarded as bottom-up apps (van der Vet & Mars, 1995), because students are able to conduct their own searches for content to develop their understanding of a topic.

Content-based apps are useful when students must conduct research or explore a topic. These apps promote higher order thinking skills because they require students to evaluate the quality of the information provided before synthesizing it into a meaningful form. A drawback to these apps is that they do not provide feedback about how effectively students use that information. Therefore, teachers will need to spend class time instructing students on the effective use of search engines (Schrock, 2013; Steele-Carlin, 2005) and evaluating the quality of their search results (Milner, Milner, & Mitchell, 2012; Vacca, Vacca, & Mraz, 2011).

Function-Based Apps. Function-Based apps assist students in transforming learned information into usable forms. Middle and high school students likely benefit most from Function-Based apps, but there are many ways elementary students can use them as well. Function-Based apps do not feature assessments or contain academic content; rather, students use these apps to create learning artifacts, which may include textual depictions, visual representations, or multimedia presentations of their learning. In this way, Function-Based apps use the literacy and numeracy skills students learned from Skill-Based apps to display the knowledge they learned from Content-Based apps. Goodwin and Highfield (2012a,b) would classify these apps as Constructive because students can create artifacts that demonstrate their learning of specific information using open-ended templates.

To help classify Function-Based apps, multiple subcategories were created. First, we created the Canvas subcategory for apps that allow students to use text, images, shapes, and voice recordings to illustrate their learning of a specific topic, similar to Goodwin and Highfield’s (2012a,b) Constructive category. The products students can create using these apps include images, texts, and videos that can usually be emailed or posted to a class website.

The Graphic Organizer subcategory contains apps for students to construct conceptual maps. These maps may take a variety of forms and examples include flow charts, webs, Venn diagrams, cyclical diagrams, and cause and effect relationships.

The Learning Community subcategory consists of apps that provide teachers and students with digital classrooms (Chan, 2010; Stiglingh, 2006). Characteristics of these apps include students having the ability to share their work and thoughts electronically with classmates, while teachers can send out announcements and assignments to their students using a class message board. Additionally, teachers are able to upload and store class documents in online folders that students can access.

The Note Taking subcategory includes apps for recording information, and students can use these apps while reading a text, listening to a lecture, or watching a video. These apps typically let students write notes using their fingers and include a system for organizing notes by topic, theme, or subject.

The Office subcategory houses apps students can use to create text documents, spreadsheets, or presentations. These apps include many of the same features as Microsoft Office, and products students create can usually be saved in the app and emailed as attachments.

Finally, the Presentation subcategory contains apps students can use to report information they learned. Whereas Office apps include only electronic slide presentations, Presentation apps include a variety of alternative formats for students to report their learning.

Because students use Function-Based apps to create products, these apps rate on the top two levels of Bloom’s Taxonomy (i.e., evaluating and creating in the revised taxonomy, or synthesis and evaluation in the original; Anderson, Krathwohl, & Bloom, 2001; Bloom, 1956). Function-Based apps also develop students’ 21st-century skill base (Piirto, 2011; Walser, 2008), because through their use, students create and share learning artifacts digitally. One drawback of Function-Based apps is that they do not provide students with feedback as to the quality of the products they create. Additionally, teachers will need to spend class time instructing students how to use these apps and modeling exemplary products created with these apps. Without this support, students will lack the knowledge and scaffolding (Hammond & Gibbons, 2001) to create and share artifacts effectively using Function-Based apps.

Educational Misfits. Educational Misfits are apps that have little educational merit, and their content generally targets high school students. These apps have their own category because people may mistakenly consider them to have educational value if they are listed in the App Store or in a website database, but these apps do not build students’ literacy or numeracy skills, provide students with access to content, or allow students to create learning artifacts or present their ideas. Rather, these apps have students engage content that has little value. Goodwin and Highfield (2012a,b) did not develop a term for apps of this variety.

To classify these apps, the subcategories of Intelligence Test and Trivia were created. The Intelligence Test subcategory contains apps that measure individuals’ intelligence quotient (IQ) score. These apps contain series of questions for individuals to answer and they calculate an approximate IQ score upon completion.

The Trivia subcategory asks individuals themed questions ranging from popular culture and sports to biblical studies and music. After answering a question or a series of questions, these apps typically provide individuals with a score summarizing their overall performance. Although these apps can be fun and entertaining, they have little educational worth and are not recommended for use in preK-12 classrooms.

Implications for Teacher Educators

As school districts from across the nation heavily invest in tablet technologies (Gale, 2013; Gliksman, 2013; Holeywell, 2012), apps will continue to have a large presence in preK-12 public schooling for the foreseeable future. Teacher educators must prepare teacher candidates to make informed decisions as to how and why they integrate apps into their teaching practices. The integration of tablets and apps, however, cannot be merely an add-on to previously designed lessons. Rather, it needs to be a deep consideration of how using technology can advance candidates’ pedagogical content knowledge, and TPACK is a framework developed for this purpose (Koehler & Mishra, 2005, 2009).

TPACK is a framework describing how knowledge of content, pedagogy, and technology can be meaningfully integrated into the instruction teachers provide to students (Koehler & Mishra, 2009). In short, content knowledge refers to the materials, concepts, and theories teachers want to impart to their students (Shulman, 1987). Pedagogical knowledge consists of the methods (both general instructional methods and discipline-specific methods) teachers use for students to engage the content (Shulman, 1987). Pedagogical content knowledge then “goes beyond knowledge of the subject matter per se to the dimension of subject matter knowledge for teaching” (Shulman, 1986, p. 9), meaning that a teacher must know the best methods for representing the knowledge and making it comprehensible for students.

Technological knowledge is the use of tools to support teachers in delivering their instruction (Koehler & Mishra, 2009). Important to technological knowledge is understanding that there are both existing technologies and emerging technologies (Cox & Graham, 2009). Existing technologies include tools that were once state-of-the-art but have now become common tools used in daily life. Examples of these technologies used in education include pencils, whiteboards, and overhead projectors.

Emerging technologies are new tools created by modern advances in different technological fields that have yet to become commonplace. Examples of these technologies used in education include tablets, computer software, and interactive whiteboards. Two additional dimensions are technological content knowledge (TCK) and technological pedagogical knowledge (TPK). Cox and Graham (2009) explained TCK as “the knowledge of how to represent concepts with technology” (p. 64), and teachers using software to demonstrate how a natural disaster could impact a local community is an example of TCK. TPK is, then, the use of technology to motivate or engage students in the content being delivered (Cox & Graham, 2009), and teachers using tablets to motivate and engage students in the content being taught is an example. However, our app classification framework is situated in technological knowledge.

When teachers use tablets as the technological component of TPACK for their instruction, a conceptual understanding of apps and their differing purposes and functions is needed. This understanding is crucial, because it will support teachers in selecting and, subsequently, using apps to strengthen the instructional methods they use to deliver content to their students. For example, earlier we discussed the differences between the MeMe Tales and One Minute Reader apps. Although both apps are classified as Skill-Based and support early literacy skills, it is significant to know that the purpose of MeMe Tales is to develop students’ enjoyment of reading, whereas the purpose of One Minute Reader is to assess students’ reading fluency and comprehension skills. Without this understanding, teachers may attempt to use One Minute Reader to build students’ enjoyment of reading, which would be a misuse of this app and not meet students’ instructional needs.

By classifying these apps using our framework’s categories and subcategories, the distinctions between these apps become apparent. Our framework aligns with TPACK by providing support to teachers in matching their content and pedagogy to the functionalities and purposes of specific apps.

Additional examples of our app classification framework’s usefulness come from teachers assigning students research assignments or wanting students to take notes during a lecture. If a teacher assigns students a research project and wants them to use apps to gather information, that teacher should identify apps classified under the Content-Based category and further delineate into the subcategory of Reference. At this point, the teacher and students would need to select the type of reference apps that would best serve their needs, whether they be apps that function as encyclopedias, search engines, or video libraries. No matter the selection, the apps classified in these subcategories provide students access to large amounts of information that they can use in their research projects.

If a teacher plans to lead a discussion about a topic and wants students to take notes, the teacher would use our framework to select an app from the Function-Based category and then choose an app from either the Note Taking or Office subcategories, depending on the type of notes the teacher wants students to create. In both examples, our app classification framework aligns with TPACK, because it supports teachers in matching their content and pedagogy to the functionalities and purposes of specific apps.
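The two selection scenarios above can be sketched as a simple lookup. This is only an illustrative sketch: the dictionary layout, the task labels, and the suggest function are assumptions introduced here, while the category names, subcategory names, and Bloom’s Taxonomy levels are taken from the framework as described in this article.

```python
# Categories, Bloom's levels, and subcategories as named in the framework.
# Educational Misfits are omitted because they are not recommended for
# classroom use and have no Bloom's levels assigned.
FRAMEWORK = {
    "Skill-Based": {
        "bloom": ("Remembering", "Understanding"),
        "subcategories": ("Literacy", "Numeracy", "Science",
                          "Social Studies", "Test Preparation"),
    },
    "Content-Based": {
        "bloom": ("Applying", "Analyzing"),
        "subcategories": ("Subject Area", "Reference"),
    },
    "Function-Based": {
        "bloom": ("Evaluating", "Creating"),
        "subcategories": ("Canvas", "Graphic Organizer", "Learning Community",
                          "Note Taking", "Office", "Presentation"),
    },
}

# Hypothetical task labels, mapped to where the two scenarios point.
TASKS = {
    "research project": ("Content-Based", ("Reference",)),
    "note taking": ("Function-Based", ("Note Taking", "Office")),
}


def suggest(task):
    """Return (category, subcategories, Bloom's levels) for a known task label."""
    category, subcategories = TASKS[task]
    entry = FRAMEWORK[category]
    # Guard against a task that points at a subcategory the framework lacks.
    missing = [s for s in subcategories if s not in entry["subcategories"]]
    if missing:
        raise ValueError(f"unknown subcategories for {category}: {missing}")
    return category, subcategories, entry["bloom"]


if __name__ == "__main__":
    for task in TASKS:
        print(task, "->", suggest(task))
```

A teacher educator could extend the hypothetical TASKS table with other classroom activities; the lookup only narrows the search to a category and subcategory, and choosing a specific app within it remains a matter of professional judgment.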

Currently, the resources available to support teachers in selecting apps are limited in that they do not make clear distinctions about apps based on their purposes or align to a theoretical framework for using technology such as TPACK. Rather, the resources tend to group apps together by subject area or grade level, which discounts the varying cognitive demands that different apps require of users and the different purposes and functionalities of apps, in general. Moreover, this lack of information requires more work from teachers.

For example, when teachers search a database or review a blog’s app suggestions, they must then download the app and test it to ensure it meets their instructional needs. Because these databases and blogs do not classify apps based on their purpose, teacher candidates need to understand how to assess apps based on the cognitive demands they place on students and on how they function. In this way, the framework we put forward is intended to support teacher educators in explaining these important differences between apps to their candidates. When candidates graduate from their teacher education programs, they will be more prepared to select the apps that are most appropriate for their instructional needs.

Conclusion

As educational apps continue to increase in number and gain popularity, teacher candidates must have the ability to discriminate, integrate, and use apps effectively as they begin their teaching careers. The framework presented here, with its embedded relationship to TPACK, is a starting point for ensuring this preparation takes place. We anticipate that, just as the technology continues to evolve, so too will our consideration of appropriate frameworks for understanding and selecting apps for educational purposes.

This framework highlights the need for comprehensive criteria for the evaluation of apps. Rubrics that have limited research bases, such as the ones put forward by Buckler (2012) and Walker (2011), are good beginnings, but their rubrics’ limited dimensions yield only generalized considerations about the worth of apps. As we continue considering the quality of educational apps, we must understand that the app’s purpose (whether it is to teach a skill, provide access to content, or serve a function) will dictate how an app scores on generalizable rubrics. It is our hope that the framework presented here will lend itself to these conversations.

References

Anderson, L. W., Krathwohl, D. R., & Bloom, B. S. (2001). A taxonomy for learning, teaching, and assessing. White Plains, NY: Longman.

Android Developers. (2012). Android, the world’s most popular mobile platform. Retrieved from http://developer.android.com/about/index.html

Apple Press Info. (2013). Apple’s App Store marks historic 50 billionth download [Press release]. Retrieved from the Apple Inc. website: http://www.apple.com/pr/library/2013/05/16Apples-App-Store-Marks-Historic-50-Billionth-Download.html

Apple Inc. (2013). iOS7. Retrieved from http://www.apple.com/ios/

Benesty, J., Chen, J., Huang, Y., & Cohen, I. (2009). Pearson correlation coefficient. In I. Cohen, Y. Huang, J. Chen, & J. Benesty (Eds.), Noise reduction in speech processing (pp. 1-4). Berlin, Germany: Springer.

Bloom, B. S. (Ed.). (1956). Taxonomy of educational objectives: The classification of educational goals. White Plains, NY: D. McKay.

Bruce, B. C., & Levin, J. A. (1997). Educational technology: Media for inquiry, communication, construction, and expression. Journal of Educational Computing Research, 17(1), 79-102.

Buckler, T. (2012). Is there an app for that? The Journal of BSN Honors Research, 5(1), 19-32.

Chan, T. W. (2010). How East Asian classrooms may change over the next 20 years. Journal of Computer Assisted Learning, 26, 28-52.

Clements, D. H., & Nastasi, B. K. (1988). Social and cognitive interactions in educational computer environments. American Educational Research Journal, 25(1), 87-106.

Coffey, A., & Atkinson, P. (1996). Making sense of qualitative data: Complementary research strategies. Thousand Oaks, CA: Sage Publications.

Connelly, M., & Clandinin, J. D. (1990). Stories of experience and narrative inquiry. Educational Researcher, 19(2), 2-14.

Cox, S., & Graham, C. R. (2009). Using an elaborated model of the TPACK Framework to analyze and depict teacher knowledge. TechTrends, 53(5), 60-69.

Daggett, W. R. (2005). Achieving academic excellence through rigor and relevance [Report]. Retrieved from the International Center for Leadership in Education website: http://www.leadered.com/pdf/Achieving_Academic_Excellence_2014.pdf

Dunn, J. (2013). A crowdsource list of the best iOS education apps. Edudemic. Retrieved from http://www.edudemic.com/the-best-education-apps-for-ios/

eSchool News. (2013). New: 10 of the best Apple and Android apps for education in 2013. eSchool News. Retrieved from http://www.eschoolnews.com/2013/04/26/new-10-of-the-best-apple-and-android-apps-for-education-in-2013/

Fingas, J. (2012). Google Play hits 600,000 apps, 20 billion total installs. Engadget. Retrieved from http://www.engadget.com/2012/06/27/google-play-hits-600000-apps/

Gale, H. (2013, August 15). School district hopes to connect with people through mobile app. MyHorryNews.com. Retrieved from http://www.myhorrynews.com/news/education/article_34216df8-05eb-11e3-a151-0019bb30f31a.html

Glesne, C. (2006). Becoming qualitative researchers: An introduction. Boston, MA: Pearson.

Gliksman, S. (2013, September 24). Will LAUSD’s iPad upgrade work? Jewish Journal. Retrieved from http://www.jewishjournal.com/opinion/article/will_lausds_ipad_upgrade_work

Godwin-Jones, R. (2011). Emerging technologies: Mobile apps for language learning. Language Learning & Technology, 15(2), 2-11.

Goodwin, K., & Highfield, K. (2012a, March). iTouch and iLearn – An examination of educational apps [Electronic slideshow]. Presentation made at the Early Education and Technology for Children Conference, Salt Lake City, UT. Retrieved from http://www.eetcconference.org/wp-content/uploads/Examination_of_educational_apps.pdf

Goodwin, K., & Highfield, K. (2012b, March). iTouch and iLearn–An examination of “educational” apps. Paper presented at the Early Education and Technology for Children Conference, Salt Lake City, UT.

Hammond, J., & Gibbons, P. (2001). What is scaffolding? In A. Burns & H. d. S. Joyce (Eds.), Teachers’ voices 8: Explicitly supporting reading and writing in the classroom (pp. 8-16). Sydney, Australia: National Centre for English Language Teaching and Research.

Handal, B., El-Khoury, J., Campbell, C., & Cavanagh, M. (2013). A framework for categorising mobile applications in mathematics education. Proceedings of the Australian Conference on Science and Mathematics Education (pp. 142-147). Canberra: IISME. Available at: http://ojs-prod.library.usyd.edu.au/index.php/IISME/article/view/6933

Haugland, S. W. (1992). The effect of computer software on preschool children’s developmental gains. Journal of Computing in Childhood Education, 3(1), 15-30.

Heick, T. (2013, March 13). The 55 best free education apps for iPad. TeachThought. Retrieved from http://www.teachthought.com/apps-2/the-55-best-best-free-education-apps-for-ipad/

Highfield, K., & Goodwin, K. (2013). Apps for mathematics learning: A review of ‘educational’ apps from the iTunes App Store. In V. Steinle, L. Ball., & C. Bardini (Eds.). Mathematics education: Yesterday, today and tomorrow. (Proceedings of the 36th annual conference of the Mathematics Education Research Group of Australasia). Melbourne, VIC: MERGA.

Holeywell, R. (2012, June). Texas school district pays $20M for iPads. Governing the States and Localities. Retrieved from http://www.governing.com/topics/education/gov-struggling-texas-school-district-buys-ipads.html

Johnson, L., Smith, R., Willis, H., Levine, A., & Haywood, K. (2011). The 2011 Horizon Report. Austin, TX: The New Media Consortium.

Koehler, M., & Mishra, P. (2005). What happens when teachers design educational technology? The development of technological pedagogical content knowledge. Journal of Educational Computing Research, 32(2), 131-152.

Koehler, M., & Mishra, P. (2009). What is technological pedagogical content knowledge (TPACK)? Contemporary Issues in Technology and Teacher Education, 9(1), 60-70. Retrieved from https://citejournal.org/vol9/iss1/general/article1.cfm

Lucey, T. A. (2012). Reframing financial literacy: Exploring the value of social currency. Charlotte, NC: Information Age Pub.

McDougall, A., & Squires, D. (1995). An empirical study of a new paradigm for choosing educational software. Computers & Education, 25(3), 93-103.

McGrath, N. (2013). Attack of the apps: Helping facilitate online learning with mobile devices. eLearn, 2013(3), 4.

Means, B. (Ed.). (1994). Technology reform: The reality behind the promise. San Francisco, CA: Jossey-Bass.

Merriam, S. B. (2009). Qualitative research: A guide to design and implementation. San Francisco, CA: Jossey-Bass.

Messick, S. (1989). Validity. In R. L. Linn (Ed.), Educational measurement (3rd ed., pp. 13-103). Washington, DC: American Council on Education.

Milner, J. O., Milner, L. M., & Mitchell, J. F. (2012). Bridging English (5th ed.). Boston, MA: Pearson.

Murray, O. T., & Olcese, N. R. (2011). Teaching and learning with iPads, ready or not? TechTrends, 55(6), 42-48.

National Council for the Social Studies. (2013). About National Council for the Social Studies. Retrieved from http://www.socialstudies.org/about

Noonoo, S. (2013, February 26). 31 top apps for education from FETC 2013. T.H.E. Journal. Retrieved from http://thejournal.com/articles/2013/02/26/31-top-apps-for-education-from-fetc-2013.aspx

Norris, C. A., & Soloway, E. (2011). Learning and schooling in the age of mobilism. Educational Technology, 51(6), 3.

Pelgrum, W. J., & Plomp, T. (1993). The worldwide use of computers: A description of main trends. Computers & Education, 20(4), 323-332.

Piirto, J. (2011). Creativity for 21st century skills: How to embed creativity into the curriculum. Boston, MA: Sense Publishers.

Pilgrim, J., Bledsoe, C., & Riley, S. (2012). New technologies in the classroom. The Delta Kappa Gamma Bulletin, 78(4), 16-22.

Rao, L. (2012, January 19). Apple: 20,000 education iPad apps developed; 1.5 million devices in use at schools. TechCrunch. Retrieved from http://techcrunch.com/2012/01/19/apple-20000-education-ipad-apps-developed-1-5-million-devices-in-use-at-schools/

Rouse, M. (2005). Structured programming (modular programming). Retrieved from the TechTarget website: http://searchcio-midmarket.techtarget.com/definition/structured-programming

Schrock, K. (2013). Successful web search strategies [Electronic slideshow handout]. Retrieved from http://www.schrockguide.net/uploads/3/9/2/2/392267/searching.pdf

Shuler, C. (2009). iLearn: A content analysis of the iTunes App Store’s education section. New York, NY: The Joan Ganz Cooney Center at Sesame Workshop.

Shulman, L. S. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4-14.

Shulman, L. S. (1987). Knowledge and teaching: Foundations of the new reform. Harvard Educational Review, 57(1), 1-21.

Smlierow. (2013, April 29). Steve Jobs on the post PC era [Video]. Retrieved from YouTube: http://www.youtube.com/watch?v=YfJ3QxJYsw8

Steele-Carlin, S. (2005). Surfing for the best search engine teaching techniques. EducationWorld. Retrieved from http://www.educationworld.com/a_tech/tech078.shtml

Stiglingh, E. J. (2006). Using the Internet in higher education and training: A development research study (Doctoral dissertation, University of Pretoria).

Vacca, R. T., Vacca, J. L., & Mraz, M. (2011). Content area reading: Literacy and learning across the curriculum (10th ed.). Boston, MA: Pearson.

van der Vet, P. E., & Mars, N. J. I. (1995). Bottom-up construction of ontologies: The case of an ontology of pure substances. (Technical report UT-KBS). The Netherlands: Knowledge Base Systems Group. Retrieved from http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.24.719&rep=rep1&type=pdf

Walker, H. (2011). Evaluating the effectiveness of apps for mobile devices. Journal of Special Education Technology, 26(4), 59-66.

Walser, N. (2008, September/October). Teaching 21st century skills. Harvard Education Letter. Retrieved from http://siprep.ccsct.com/uploaded/ProfessionalDevelopment/Readings/21stCenturySkills.pdf

Waters, J. K. (2010). Enter the iPad (or not?). T.H.E. Journal, 37(6), 38-40.

Wingfield, N. (2013, April 10). PC sales still in a slump, despite new offerings. The New York Times, B5.

Wolfram, S. (1984). Computer software in science and mathematics. Scientific American, 251(3), 188-192.

Woollaston, V. (2013, May 16). There are 50 BILLION apps for that: Apple’s App Store hits milestone and gives away £6,550 to celebrate. Mail Online. Retrieved from http://www.dailymail.co.uk/sciencetech/article-2325625/Apples-App-Store-hits-50-billion-downloads-gives-away-6-550-celebrate.html

Author Notes

Todd Cherner

Coastal Carolina University

Judy Dix

Coastal Carolina University

Corey Lee

Coastal Carolina University

Appitic (http://www.appitic.com)

Apps4Edu (http://www.uen.org/apps4edu/)

EdShelf (http://www.edshelf.com/)

Graphite.org (http://www.graphite.org)

Website Databases Designed for Apps

The following screenshots show how users can search for apps on website databases designed for apps and what information these databases provide to users.

Figure A1. Screenshot from Graphite.org that shows how users can search its contents, March 30, 2014.

Figure A2. Screenshot from Graphite.org that shows how it presents search results to users, March 30, 2014.

Figure A3. Screenshot from Graphite.org that shows the types of information it presents to users about a specific app, March 30, 2014.

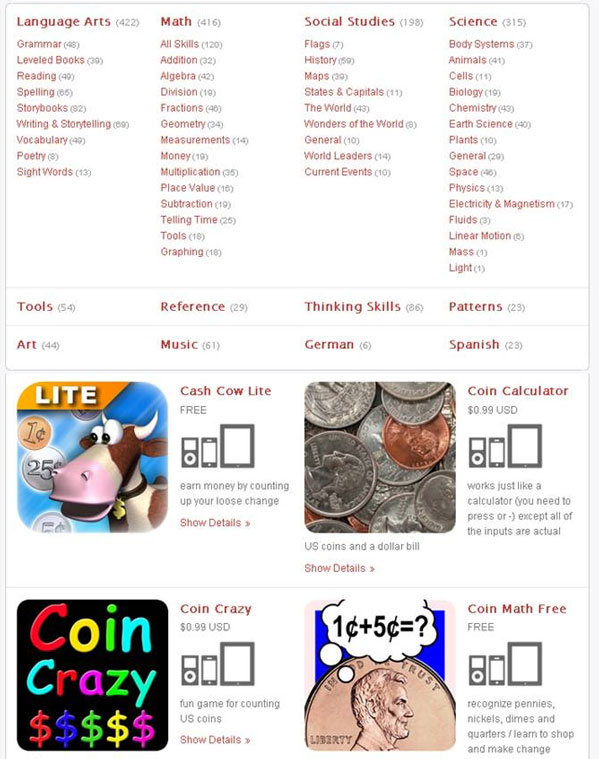

Figure A4. Screenshot from Apps4Edu that shows how users can search its contents, March 30, 2014.

Figure A5. Screenshot from Apps4Edu that shows how it presents search results to users, March 30, 2014.

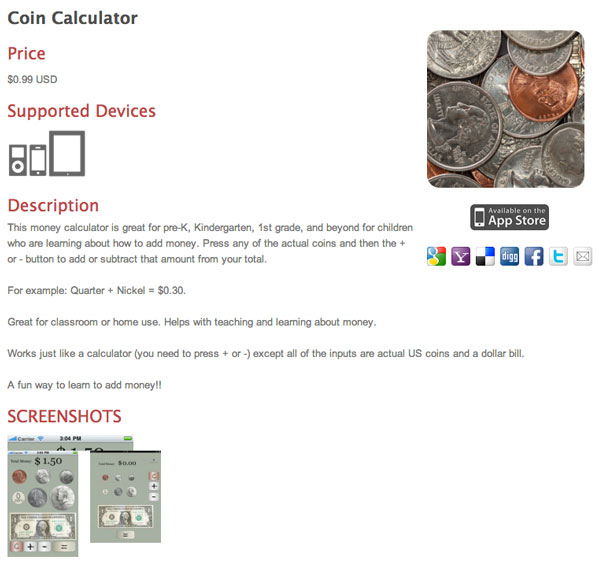

Figure A6. Screenshot from Apps4Edu that shows the types of information it presents to users about a specific app, March 30, 2014.

Figure A7. Screenshot from EdShelf that shows how users can search its contents, March 30, 2014.

Figure A8. Screenshot from EdShelf that shows how it presents search results to users, March 30, 2014.

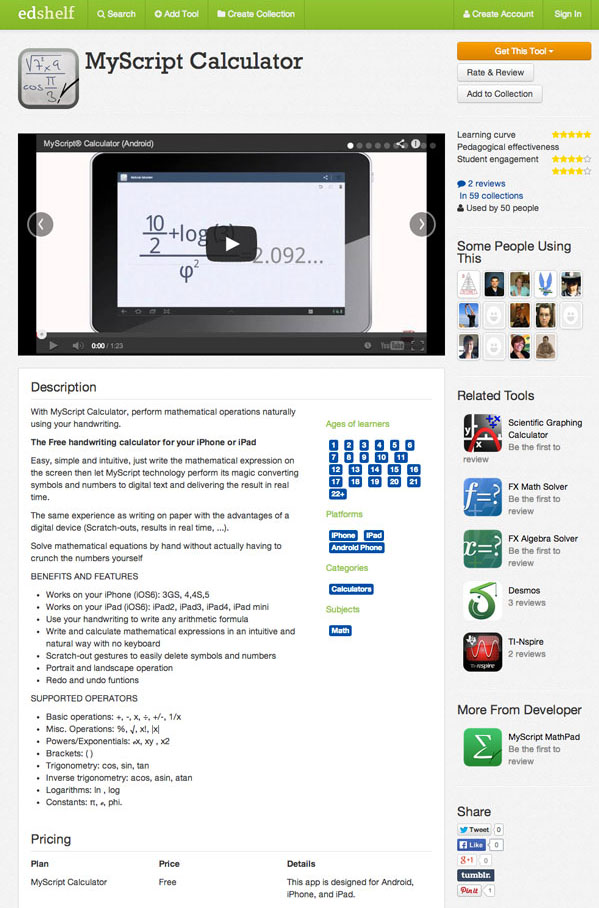

Figure A9. Screenshots from EdShelf that show the types of information it presents to users about a specific app, March 30, 2014.

Figure A10. Screenshot from Appitic that shows how users can search its contents, March 30, 2014.

Figure A11. Screenshots from Appitic that show how it presents search results to users, March 30, 2014.

Figure A12. Screenshots from Appitic that show the types of information it presents to users about a specific app, March 30, 2014.

List of Classification Categories Used by Pelgrum and Plomp (1993)

Authoring programs

CAD/CAM

Comp. communication

Control devices

Database programs

Drill and practice

Educational games

Gradebook programs

Interactive video

Item bank for tests

Lab interfaces

Mathematical graphing

Music composition

Painting or drawing

Programming language

Recording & scoring

Recreational games

Simulation programs

Spreadsheet programs

Statistical programs

Tools and utilities

Tutorial programs

Word processing/dtp

Work Flow Chart

![]()