This article reports on an exploratory project in which we designed an innovative interactive video method to help preservice teachers practice critical observation of other preservice teachers as preparation for eventually observing their own classroom teaching on video. The interactive video method targeted classroom noticing (van Es & Sherin, 2002) as an aspect of teacher expertise (Berliner, 1986).

The interactive video method adapted the expert-novice research paradigm. Both experienced teacher educators (experts) and preservice teachers (novices) viewed the same video clips, looking for instances of classroom management and student questioning. The experts’ observations were offered to the novices as a way of guiding the novices to notice classroom behaviors more like experts do, thereby beginning to build an important aspect of teacher expertise even while in the early stages of teacher education.

We, the authors, designed, implemented, and compared two versions of the interactive video method that differed in complexity. The more sophisticated version used video annotation technology and procedures that were initially developed for qualitative research but have been repurposed for instruction by teacher education researchers (Rich & Hannafin, 2009). In this version, preservice teachers viewed video clips of classroom teaching, wrote their observations of classroom management or student questioning issues, and then compared their written observations to those of the experienced teacher educators. This version was highly interactive, both in requiring preservice teachers to write their observations and in cognitively challenging them to reconcile differences between their observations and those of the experts before watching the next video clip.

Although we created a manual prototype (see Methods section) for research purposes, this version would ultimately require a software program similar to those used for video annotation to operate as a self-instructional interactive video module. We wanted to test the feasibility of this complex and highly interactive approach before committing to further development.

We also designed a less sophisticated version of the interactive video method that maintained the critical design elements of using classroom teaching video of other preservice teachers for stimulus material and using experts’ observations as feedback. In this version, preservice teachers could read the written comments of experienced teacher educators while they viewed the video clips. Preservice teachers were instructed to compare the experts’ observations to their own, but were not required to write their observations. This version was much less interactive but could be executed as a group activity in a teacher education classroom or set up as a simple self-instructional activity on a learning management system (e.g., Blackboard).

We conducted a small-scale experiment with students in a teacher education program that compared these two interactive video methods to each other and to a no-video control group. However, the primary goals of this project were to apply innovative technology and theory to advance the long-established use of video in teacher education. In addition to a review of literature, therefore, we also describe the numerous decisions involved in designing interactive video methods. Design issues such as source of video footage, classroom context provided, length of video clips, and type of learner interaction should be considered in any use of classroom video for teacher education.

Theories of Video Use in Teacher Education

Many teacher education programs aspire to produce reflective practitioners (Schön, 1987) who are able to observe critically and evaluate their own teaching practice. Although becoming a good observer of the classroom environment is, itself, a fundamental aspect of teacher expertise (Berliner, 1986, 2004) and pedagogical content knowledge (Shulman, 1986), overcoming self-consciousness in order to observe oneself critically is also a substantial challenge, especially when self-observation involves viewing classroom video recordings (Greenwalt, 2008). However, while video-based self-observation is challenging for many developing teachers, it is also becoming more important, as assessment of both preservice and in-service teachers increasingly involves classroom video evidence (Hannafin, Shepherd, & Polly, 2010).

Teacher candidates are well served by embracing video as a means of both proving and improving their classroom teaching. That way, they can be better prepared for high-stakes video assessment such as Teacher Performance Assessment (TPA), which has become part of licensure in many states (Butrymowicz, 2012). They can be more confident in using video evidence for professional recognition, such as National Board Certification. As professional teachers, they can continually improve their teaching through sharing classroom videos with supervisors, mentors, and peers in contexts such as video clubs (Sherin, 2007; Sherin & Han, 2004).

Video-based self-assessment is increasingly important and well researched (see Tripp & Rich, 2012, for a review of 63 studies involving video-based self-observation). Yet, an underexplored use of video in teacher education is scaffolding preservice teachers’ video-based self-observation (self-video) by having them first practice critically observing the classroom videos of other preservice teachers at a similar stage of development, whom we call near peers. Self-video can be difficult for preservice teachers to process, both emotionally and analytically. Indeed, the term video confrontation has been used to describe critical observation of self-video (Fuller & Manning, 1973), a process that requires teachers to separate from and objectify their on-camera selves (Greenwalt, 2008).

Not surprisingly, students who are new to video self-observation tend to focus primarily on themselves. As Kagan and Tippins (1991) pointed out (cited in Wang & Hartley, 2003, p. 126), preservice teachers viewing video of their own teaching, even with direct prompts to identify and interpret signs of student learning and behavior, still struggle to get beyond focusing on their own lesson delivery.

Kagan and Tippins found that preservice teachers could more readily overcome this egocentric focus when critiquing video of others teaching rather than video of themselves teaching (different from peer video sharing). Providing critical feedback to peers is a mature skill probably more appropriate for late-stage preservice and in-service teachers than for early preservice teachers. Viewing near peer videos allows early preservice teachers to practice being critical without the emotional complications of self-critique or of critiquing actual cohort peers.

Limitations on what can be seen in classroom video recordings are another issue. Classroom video suffers from a keyhole effect (van Es & Sherin, 2002) that limits observers’ ability to judge many elements of the dynamic classroom situation. One way of addressing the keyhole effect is to provide narratives and artifacts so that viewers can more accurately and fully appreciate the actual teaching performance, even when it is captured by a stationary video camera. Another way to circumvent the limitations of classroom video is to use video footage, not as a way to study the actual classroom, but rather to trigger (Brouwer, 2011) observations by both experts and novices.

Whether trigger video shows a full representation of the particular classroom environment is not ultimately important. The video must be authentic and must provide opportunities for experts and novices to react to what is visible on the video, even when the quality of the video or the quality of the teaching is not optimal. Indeed, video that depicts problematic teaching rather than depicting best practices can provide a richer window for observation (Sherin, Linsenmeier, & van Es, 2009).

Interactive Video in Teacher Education

Video has long been used to model exemplary teaching practices and to provide feedback to preservice or in-service teachers on their own teaching. In the 1990s, a few adventurous teacher education researchers enhanced video-based observation of video teaching cases by developing interactive videodisc programs that required viewers to answer questions while viewing teaching episodes (e.g., Abell, Cennamo, & Campbell, 1996; Cronin & Cronin, 1992; McIntyre & Pape, 1993). Until recently, however, cost and ease-of-use issues have limited widescale deployment of interactive video. Advances in technology now make interactive video more attractive for wider application to teacher education (e.g., de Mesquita, Dean, & Young, 2010; Kurz & Batarelo, 2010; Mayall, 2010).

Video Annotation

In recent years, video-based observation has been taken to a high level as teacher education researchers have repurposed the video annotation methods and tools developed for qualitative research (Rich & Hannafin, 2009), usually with the instructional goal of generating deeper self-reflection (e.g., Calandra, Brantley-Dias, Lee, & Fox, 2009; Rich & Hannafin, 2008; van Es & Sherin, 2002). Video annotation activities typically involve preservice or in-service teachers coding video recordings of themselves delivering classroom lessons. The preservice or in-service teachers use one of several available computer-based video annotation tools, most of which were developed for qualitative research in classroom environments, to identify incidents of interest, mark the beginning and ending video time-code of the incident, and enter descriptive data (see Rich & Tripp, 2011, for a summary of video annotation tools).

Data, with video segments attached, can then be sorted and grouped so that teachers can inspect multiple instances of target behaviors. Teacher education students typically write and submit a reflective essay based on their video analysis. Sometimes students also edit and post video clips to illustrate their reflections (Calandra, Gurvitch & Lund, 2008; Fadde, Aud, & Gilbert, 2009).
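The annotation record described above (an incident of interest, its beginning and ending time-code, and descriptive data) and the sorting and grouping step can be illustrated with a minimal sketch. The `Annotation` class, its field names, and the sample values are hypothetical and are not drawn from any particular video annotation tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Annotation:
    """One coded incident (hypothetical structure, for illustration only)."""
    clip_id: str    # which video the incident appears in
    start_s: int    # beginning time-code, in seconds
    end_s: int      # ending time-code, in seconds
    code: str       # target behavior, e.g. "classroom_management"
    comment: str    # the observer's descriptive note


def group_by_code(annotations):
    """Sort annotations by clip and start time, then group them by code."""
    groups = defaultdict(list)
    for a in sorted(annotations, key=lambda a: (a.clip_id, a.start_s)):
        groups[a.code].append(a)
    return dict(groups)


# Invented sample data, not taken from any study.
annotations = [
    Annotation("clip_02", 15, 40, "student_questioning", "Asks an open-ended question"),
    Annotation("clip_01", 62, 80, "classroom_management", "Redirects off-task students"),
    Annotation("clip_01", 5, 20, "classroom_management", "Gives unclear directions"),
]
grouped = group_by_code(annotations)
for code, items in grouped.items():
    print(code, [(a.clip_id, a.start_s) for a in items])
```

Grouping by code places every instance of a target behavior side by side, which is what allows a teacher to inspect multiple instances at once.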

In addition to self-analysis, video annotation methods have also been used to assess preservice or in-service teachers’ pedagogical content knowledge by having them code video cases showing teachers other than themselves. For example, Norton, McCloskey, and Hudson (2011) used video-based predictions to assess prospective teachers’ knowledge of mathematics teaching.

Kucan, Palincsar, Khasnabis, and Chang (2009) used a video annotation activity to assess teachers’ knowledge about innovative reading instruction methods taught in a workshop. Both before and after the workshop, participants viewed short (approximately 5-minute) video clips and wrote comments about how teachers in the videos were executing the reading instruction strategies. The researchers maintained that changes in the workshop participants’ ability to notice relevant events and behaviors in the videos revealed their understanding of reading instruction techniques in ways a written test would not have.

A number of research studies have repurposed classroom videos that were originally recorded to study teaching methods for the 1999 Trends in International Mathematics and Science Study (TIMSS). TIMSS was a classic use of video annotation to conduct qualitative classroom research. Researchers analyzed video recordings of authentic and typical, rather than exemplary, classroom teaching to compare how math and science lessons were taught in different countries.

In later studies not associated with the TIMSS project, researchers judged teachers’ understanding of mathematics and science teaching techniques based on their observations of TIMSS videos (Kersting, 2008; Star & Strickland, 2008; Wong, Yung, Cheng, Lam, & Hodson, 2006). Kersting (2008) concluded, “Teachers’ ability to analyze video might be reflective of their teaching knowledge” (p. 845).

Peer Video Sharing

Researchers have investigated peer video sharing activities in the context of video clubs that involve in-service teachers discussing video from their classrooms with peers for professional development purposes (Borko, Jacobs, Eiteljorg, & Pitman, 2008; Sherin, 2007; Sherin & Han, 2004; van Es & Sherin, 2008). Peer critique using video sharing and web 2.0 technologies has also been used to generate dialogic learning among student teachers (Heintz, Borsheim, Caughlan, Juzwik, & Sherry, 2010). While peer video sharing has generally been well received, two studies that implemented peer video sharing among student teachers noted some reluctance by the student teachers to share videos of their own teaching and to criticize other student teachers’ videos (Harford & MacRuairc, 2008; So, Pow, & Hung, 2009).

Providing preservice teachers with opportunities to practice analyzing videos of near-peer preservice teachers may help them prepare emotionally and cognitively to analyze video recordings of their peers critically as well as of their own teaching. Early practice of video-based classroom observation may also help preservice teachers gain more insights from field observation activities that come later in a teacher education program. As pointed out by Star and Strickland (2008), observation of teaching practice is not always fruitful, because many preservice teachers are not able to focus on key features of teaching while they are observing.

Situation Awareness

Classroom teaching joins many other areas of performance, including sports, music, aviation, surgery, radiology, and neonatal emergency room nursing, in which expertise research has shown situation awareness, based on pattern recognition, to be a key source of expert performance advantage (Ericsson, Charness, Feltovich, & Hoffman, 2006). Experts, including expert teachers (Berliner, 2004), make rapid and largely unconscious decisions that can appear to be intuitive. Anthony Bryk (2009), president of the Carnegie Foundation for the Advancement of Teaching, noted,

This capacity to recognize critical patterns in classroom activity and react quickly and appropriately distinguishes novices from experts across many domains. Consequently, it is sensible to conceptualize teacher learning as a problem of expertise development. Individuals develop expertise by having many opportunities to engage in guided practice with others who are more expert. (p. 599)

The instructional design theory of expertise-based training (XBT) proposes that key cognitive subskills underlying intuitive expertise can be developed early in the trajectory from novice to expert by using instructional activities that are similar to expert-novice research methods (Fadde, 2009). Typically, expert-novice studies involve both experts and novices completing the same task and researchers comparing their processes or outcomes in order to locate areas of expert advantage. Instead of revealing expert-novice differences, XBT tasks are intended to hasten the development of novices into experts. An XBT approach leads preservice teachers (novices) increasingly to see what teacher-educators (experts) see when they view classroom teaching videos.

Classroom Noticing

Teacher education researchers have conceptualized teachers’ classroom awareness as noticing (Rosaen, Lundeberg, Cooper, Fritzen, & Terpstra, 2008; Sherin & van Es, 2005). Sherin and van Es defined noticing as going beyond simply recognizing the presence of particular events or behaviors to the attaching of meaning. Early teacher education students are likely to lack the knowledge of subject content and pedagogical methods necessary to attach meaning to the events and behaviors that they observe. However, classroom noticing combines distinct subprocesses of selective attention and knowledge-based reasoning (Sherin, 2007), and learning to notice can potentially address these two subprocesses separately.

An appropriate early step in developing classroom noticing, then, is developing selective attention—learning what is worth attending to and what is not worth attending to within the classroom environment. Lenses to guide classroom observation, therefore, need to have two dimensions: what to look for (foci), and what level is considered worth noticing (threshold).

Foci of observation. Santagata and Angelici (2010) noted that “teachers’ reflections can be unproductive…unless specific lenses are provided to guide their analyses…. Yet little is known about what constitutes an effective lens for observing and analyzing teaching and what kind of reflections different lenses might afford” (p. 346). For this study, we chose the focusing lenses of classroom management, which preservice teachers traditionally list as their top concern (Emmer & Hickman, 1991), and student questioning, which is foundational to student-centered teaching and which has been the focus of other video annotation studies (e.g., Calandra et al., 2008). These lenses also represent Domain 2: Classroom Environment and Domain 3: Instruction in the Danielson Framework, which is often associated with video-based assessment of teaching (The Danielson Group, 2011).

Threshold of observation. Selective attention requires not only perceiving classroom events and teacher/student behaviors but also deciding which of the myriad events and behaviors should be attended to. Little theory or research is available to guide the selection of a threshold for classroom observation. Calandra et al. (2008) instructed advanced preservice teachers to identify critical incidents from their videos to act as evidence in support of a written reflective essay, but left participants to decide which incidents were critical.

Design Decisions About Interactive Video Interventions

The video annotation methods developed by teacher education researchers have shown great promise for guiding sophisticated analysis of self-video by late-stage preservice teachers and in-service teachers. Because the target group for this project was early preservice teachers, we designed an interactive video activity that drew heavily from the video annotation approach but adapted it, since the full approach may be more sophisticated than necessary for early-stage teacher education students. Another consideration was that video annotation methods generally require video-computer programs (Rich & Tripp, 2011), which add complexity as well as cost for teacher-educators. We, therefore, designed a less sophisticated, but also less interactive, guided video viewing activity to compare with the video annotation approach.

Interactive Video Interventions

Video Annotation. The video annotation activity involved preservice teachers viewing short (1-2 minute) video clips edited from authentic classroom videos and then annotating, or coding, the video clips with time-code referenced comments. After the preservice teachers viewed each video clip, they wrote down their comments concerning any classroom management or student questioning behaviors they observed in the video clip. They were then shown the comments made by three experienced teacher-educators while viewing the same video clip. The preservice teachers were instructed to compare their observations mentally to those of the teacher-educators and to think about any differences before viewing the next video clip.

The goal of the video annotation activity was for the novice preservice teachers to align increasingly their classroom observations with the observations of the expert teacher-educators over the course of viewing and coding several video clips. This interactive video approach assumed that students had already received formal instruction on what to look for in classroom situations, so that the video annotation activity served as application and practice.

Guided Video Viewing. The second interactive video activity we designed maintained key features of the video annotation activity but was much simpler to develop and deliver. In the guided video viewing activity, participants viewed the same near-peer teaching video clips and were shown the same expert observations of the clips. However, the experts’ observations were available to students throughout their viewing of each clip rather than being revealed only after students had written their own observations. The guided video viewing activity did not require students to write down their own observations when viewing the video clips. Rather, students were instructed to think about what they would notice in the video clips and to compare that with the written observations of the experts.

We designed the two interactive video approaches to address several issues that teacher-educators and researchers face when using video for self-observation. One issue is that authentic classroom video footage can be difficult to acquire for teacher education purposes, leading researchers to investigate the use of computer animation in place of actual video (Smith, McLaughlin, & Brown, 2012). In this project, we repurposed existing classroom videos recorded for self-observation purposes in previous years.

Especially with the growing use of video in teacher candidate assessment, we expect that classroom video recordings of intern and student teachers will be increasingly available. While videos of preservice teachers are not the model videos or case videos that are more commonly used in teacher education, they are available and can—as we demonstrate in this study—provide valuable trigger video materials.

Video Design Issues

Length of Video Segments. Research studies have ranged from using whole, intact video lessons to using highly edited video clips (Sherin et al., 2009). Longer videos show more contextual information, which is important if the primary purpose of the video is to study the teacher or the teaching situation depicted in the video. However, when classroom video segments are used to trigger the observations of viewers, then representation of the full classroom context is less critical. Video clips to be used in our video annotation activity were edited to durations between 1 and 2 minutes in order to assure that expert feedback could be provided immediately after students wrote their observations.

Longer video segments could have been used in the guided video viewing activity since expert observations were constantly available and referenced to a visible time-code on the video so the viewer could watch the video and check the experts’ observations without interrupting the video playback. For our research purpose of comparing the two interactive video activities, however, the same sets of short video clips were used for both activities.

Content of Video Segments. In an extensive consideration of who and what to show in video analysis activities, Sherin et al. (2009) focused on three issues: authenticity, context, and expertise. These authors claimed widespread agreement among researchers and teacher-educators that preservice teachers gain more from viewing authentic classroom video than from viewing staged video scenarios.

Context is defined both by the lens used to focus viewing and by the way video clips are edited. In this project, we focused observation through the lenses of classroom management and student questioning. We adopted a threshold that early-stage teacher education students should readily understand: those incidents that an experienced teacher-supervisor observing a student teacher would comment upon in a short postobservation debrief session. In a direct instruction activity, we would select video segments depicting the behaviors of interest. However, in this study the task of noticing target behaviors required that some video clips not contain any of the target behaviors. Therefore, a graduate assistant was instructed to excerpt 1- to 2-minute video segments that seemed to represent units of activity, without regard to whether a clip showed examples of particular teacher or student behaviors (in this case, classroom management and student questioning).

A third video content issue noted by Sherin et al. (2009) was whether teaching videos should depict exemplary teaching practices or problematic teaching. The authors cite numerous researchers as favoring videos that depict problematic rather than “best practices” teaching for purposes of generating reflection by viewers. Sherin et al. further maintained that video segments depicting problematic teaching behaviors can provide rich windows for observation—as is the case with the near-peer classroom teaching videos that were used as trigger video in this study.

Source of Teaching Videos. The short video clips (1-2 minutes each) that preservice teachers viewed were edited from videos of lessons delivered by other preservice teachers in an Introduction to Reflective Practice course that were recorded several years earlier. The course included students observing in area schools once a week. Students were also given the opportunity to teach a 50-minute lesson to the class that they had been observing (see Figure 1 for a still-frame from a student-taught lesson). In some cases, these were the first lessons the teacher education students had presented in an authentic classroom. The students in the original course were given a DVD of their lesson presentation for personal review and to support the writing of a reflective essay on their lesson teaching experience, which they then uploaded to an electronic portfolio system. Permission forms signed by the preservice teachers and by the classroom students who appeared in the original videos included permission to use the videos for future teaching and research purposes.

Figure 1. Still-frame of near-peer classroom lesson video.

Expert Feedback. Any instructional activity that involves using experts can get bogged down in definitional arguments of who is an expert. It would certainly be tricky to define “expert teacher” without specifying at least content area and school level (elementary, middle school, high school, higher education). Indeed, research consistently shows expertise to be highly specific to certain skills even within defined areas of performance (Ericsson et al., 2006).

In addition to expertise being difficult to define, even people with acknowledged expertise may disagree in their judgments. We addressed these concerns by recruiting three experienced teacher-supervisors with clear expertise in the target task of observing teachers. We also limited the unit of analysis (1-2 minute video clips) and the foci of observation (classroom management and student questioning). We then collected observations from all three experts for each video clip and compiled their observations for presentation as expert feedback.

Research Method

This study used a quasi-experimental design to compare the effects of two interactive video activities, Video Annotation (termed coding) and Guided Video Viewing (termed viewing), against a no-video control group. We implemented both interactive video activities in a 50-minute computer lab session and compared them along two dimensions: transfer of learning, which was assessed a week after students participated in the video activities, and learner confidence, which was assessed during the video activities. The control group did not participate in any video-based learning activities but received the same instruction and field experiences as the experimental groups. The control group completed the transfer of learning assessment to provide a baseline for comparison.

Data Sources and Research Questions

Transfer of Learning to a Classroom Observation Test. Would the coding or the viewing approach result in better alignment of teacher education students’ observations with those of expert teacher-educators on a text-based posttreatment test of classroom observation? We expected that both the coding and viewing activities would lead to students improving their classroom noticing compared to a no-video control group. Further, we expected that students who had engaged in the coding activity would outperform students in the less interactive viewing activity, since schema theory suggests that learners who must actively negotiate meaning learn more deeply through a process of tuning (Rumelhart & Norman, 1978). In this case, teacher education students tuned their classroom awareness by comparing their classroom observations with those of experts.

We considered testing students in both interactive video conditions by having them write observations while viewing novel near-peer teaching videos; however, students who had participated in the coding condition would have had an advantage since the test would essentially replicate the instructional activity. Therefore, we created a text-based multiple-choice test to assess students’ performance in noticing classroom management or student questioning issues in short descriptions of classroom situations.

Students were directed to put themselves in the role of a teacher-supervisor observing a preservice teacher. Since the written test described classroom situations that were different from those shown in the interactive video activities, the classroom observation test was considered to measure transfer of learning.

The three experienced teacher-educators (experts) who coded the trigger videos also completed the written classroom observation test, and their responses were used to establish a scoring rubric. Validity and reliability of items on the classroom observation test were assessed in two ways. First, the experts were asked to identify any test items that seemed to be confusingly worded or that did not address the constructs of classroom management or student questioning. Three items of the original 23 items were identified by at least one expert as lacking face validity and were removed from the test.

Since completing the classroom observation test essentially involved coding classroom scenarios, we used interrater reliability to check internal validity of test items. We applied the most conservative criterion of exact agreement by all three experts. Twelve of the 20 items met this measure of validity and were used in the classroom observation test. For example, all three experts responded to the following test item as “a problem worth commenting on”:

During a difficult activity, the teacher gives extensive help to a student who is struggling.

a) not a problem

b) a problem, but not worth commenting on

c) a problem worth commenting on
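The exact-agreement criterion described above can be illustrated with a minimal sketch: an item is retained only when all three experts chose the same option. The item identifiers and responses below are invented for illustration and are not the study's data. Scoring a student by counting matches with the agreed answers is likewise an assumption about how a rubric built from the experts' responses might be applied.

```python
# Hypothetical expert responses: item id -> the three experts' choices.
# Values are invented for illustration; they are not the study's data.
expert_responses = {
    "item_01": ["c", "c", "c"],
    "item_02": ["a", "b", "a"],
    "item_03": ["a", "a", "a"],
    "item_04": ["b", "c", "c"],
}


def exact_agreement_items(responses):
    """Keep only items on which every rater chose the same option."""
    return {
        item: choices[0]
        for item, choices in responses.items()
        if choices and len(set(choices)) == 1
    }


def score_student(answers, key):
    """Count answers that match the experts' agreed answer (assumed rubric)."""
    return sum(1 for item, agreed in key.items() if answers.get(item) == agreed)


answer_key = exact_agreement_items(expert_responses)

# Hypothetical student answers; items without exact agreement are ignored.
student = {"item_01": "c", "item_02": "a", "item_03": "b", "item_04": "c"}
print(sorted(answer_key))
print(score_student(student, answer_key))
```

Exact agreement is stricter than chance-corrected statistics such as kappa, which is why it discards items that two of three experts agreed on.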

Learner Confidence. To assess their confidence, students in both interactive video conditions were asked before starting, “How closely do you think your observations of these videos will match with those of university teacher-supervisors?” After viewing each of two sets of video clips, participants were asked, “If you were to view another set of video clips, how closely do you think your observations would match teacher-supervisors’ observations?” Participants selected responses between 1 (no agreement expected with experts) and 4 (total agreement expected with experts) at three assessment points: before viewing the first set of video clips, after viewing the first set of clips, and after viewing the second set of video clips.

We expected that students in the viewing condition would have higher confidence in their alignment with experts because they did not confront the ways in which they disagreed with the experts. Thus, we expected that students in the viewing condition would have more confidence than the students who engaged in the coding activity.

Participants

Students (n = 63) in a course that introduced the teacher education program participated in one of the three study conditions. Students in the interactive video conditions were drawn from two sections of the introductory teacher education program course and randomly assigned to the two interactive video conditions. In regular class meetings of both sections, students reconvened in a computer laboratory, where participating students completed their assigned video task. Two computers were incorrectly set up for the viewing condition rather than the coding condition, resulting in a mismatched number of participants (23 participants in the viewing condition and 19 in the coding condition).

Students in a third section (n = 22) of the course, serving as a control group, did not engage in a video activity but took the same paper-based classroom observation test as students in the two video conditions. The control group consisted of an intact course section in order to avoid one third of the students in each section of the course not having an assigned activity during computer laboratory sessions. The control section did not convene in the computer lab but rather participated in a review session for a coming test.

All of the students were in the final month of a course that prepared them for entry into a four-semester teacher education program. The course textbook and lecture materials had covered the topics of classroom management and student questioning, and students in the course had recently completed a field observation activity in area classrooms.

Procedures

Students participating in both video conditions viewed QuickTime trigger video clips on PC laptop computers and completed a web-based questionnaire. Students in both video conditions viewed two sets of six video clips (12 clips total) that depicted classroom lesson presentations by preservice teachers, one a middle school language arts lesson and one an early elementary mathematics lesson. Students clicked thumbnail images displayed on the computer desktop to play each individual clip.

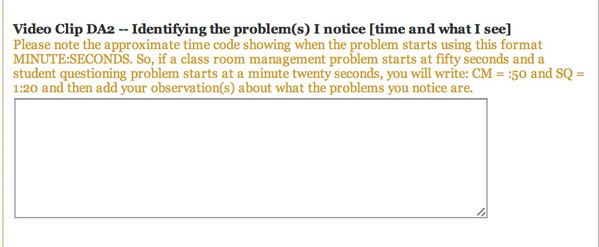

After viewing each video clip, students in the coding condition typed their observations related to incidents of classroom behavior and student questioning onto an online form (Google Forms) that collected the data (see Figure 2). Students typed the beginning time-code of each observed incident in addition to their comment. After clicking an onscreen button to submit their written observations, participants in the coding condition turned over an index card from a stack of cards next to each computer station. The index card showed the experts’ time-coded and typed observations related to the particular video clip, such as the following:

CM (1:10) – Three students are trying to help each other and teacher squashes.

Not knowing how to use peer assistance.

Students were instructed to think about how their observations of the particular video clip were similar to and different from the observations of the experts. Students could replay the video clips.

Figure 2. Screenshot of video coding data entry screen (Google Forms).


In the viewing condition students watched the same clips but were instructed to turn over the card associated with the clip as soon as they started playing the clip and to read the experts’ comments when the corresponding time-code appeared in the video playback window. Students were instructed to read and think about the experts’ observations while viewing each video clip. Students in both conditions were instructed to fill out a form on the computer after viewing each set of clips that asked how confident they were that they would match the experts’ observations on the next set of video clips they viewed. Students in both conditions were instructed to work at their own pace, mindful that the activity was scheduled to take 50 minutes.

Learner confidence was assessed during and immediately following the video activities via the webpage questionnaire. Transfer of learning was assessed 1 week after the interactive video activities, when the classroom observation test was given to all of the students who had participated in the interactive video activities as well as to the control group.

Results

Transfer of Learning

As shown in Table 1, students in the viewing condition had a higher mean score on the 12-item written classroom observation test (7.74 correct, sd = 1.64) than those in the coding condition (6.64, sd = 1.75) or the test-only control condition (6.48, sd = 1.18). An ANOVA contrasting the performance of the three groups on the classroom observation test did not reach significance at an alpha of .05, F(2) = 2.822, p = .067. A t-test that contrasted only the viewing condition with the control condition showed that viewing produced significantly better test performance, t(40) = 2.5750, p = .014, while a t-test contrasting the coding condition with the control condition was not significant.
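For readers who want to see how a two-group contrast of this kind is computed, the following is a minimal sketch of an independent-samples t statistic. It assumes the pooled-variance (Student) form, which the text does not specify, and it uses made-up scores rather than the study's data; the function name `pooled_t` is likewise hypothetical.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def pooled_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, int]:
    """Return (t, df) for an independent-samples t-test with pooled variance."""
    na, nb = len(a), len(b)
    df = na + nb - 2
    # Pooled variance: sample variances weighted by their degrees of freedom.
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / df
    # Standard error of the difference between the two group means.
    se = math.sqrt(sp2 * (1 / na + 1 / nb))
    return (mean(a) - mean(b)) / se, df


# Hypothetical test scores for two small groups (illustration only).
group_a = [8, 9, 7, 10]
group_b = [6, 7, 5, 6]

t_stat, df = pooled_t(group_a, group_b)
print(f"t({df}) = {t_stat:.4f}")
```

The p value would then be read from the t distribution with `df` degrees of freedom, which requires a statistics library or table and is left out of this sketch.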

Table 1

Mean Scores and Pass Rates for Classroom Observation Test

| Condition | n | Mean Score | Pass Rate (%) |
|---|---|---|---|
| Control | 21 | 6.48 (sd = 1.18) | 22% |
| Video Coding | 23 | 6.64 (sd = 1.75) | 36% |
| Video Viewing | 19 | 7.74 (sd = 1.64) | 63% |

Note. ANOVA: Coding/Viewing/Control (mean scores), p = .067. T-test: Viewing vs. Control (mean scores), p = .014.

Pass Rate

After reviewing results of the written classroom observation test, the instructors of the course said that students’ scores seemed lower than they would have expected, but that it was difficult to interpret the raw test scores. In consultation with the course instructors, we set an arbitrary passing score of 75% correct (9 of the 12 items) as a framework for comparing the two video conditions with each other and with the control condition. As shown in Table 1, 22% of the students in the control condition would have passed the classroom observation test, while 36% of the students in the coding condition and 63% of the students in the viewing condition would have passed the test. Statistical analysis was not applied to pass rate.
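The pass-rate calculation can be sketched as follows. The 12-item test length and the 75% criterion come from the text; the score list and the function names are hypothetical and serve only to show the arithmetic.

```python
from typing import Sequence


def passing_score(n_items: int, criterion_pct: int) -> int:
    """Smallest number of correct items that meets the percentage criterion."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-n_items * criterion_pct // 100)


def pass_rate(scores: Sequence[int], n_items: int = 12, criterion_pct: int = 75) -> float:
    """Proportion of scores at or above the passing score."""
    cutoff = passing_score(n_items, criterion_pct)
    return sum(score >= cutoff for score in scores) / len(scores)


# Hypothetical scores out of 12 for one group (illustration only).
scores = [5, 9, 7, 10, 6, 9, 8, 11]

print(passing_score(12, 75))
print(pass_rate(scores))
```

With a 12-item test, the 75% criterion corresponds to 9 correct items, so a student with 8 correct falls just below the cutoff.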

Contrary to our expectation, both the mean scores and pass rates suggest that the less-complex guided video viewing condition led to better performance on the written classroom observation test by this group of early-stage teacher education students.

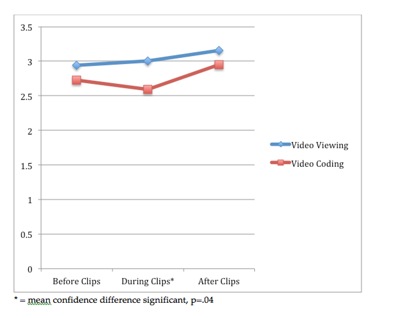

Confidence Test

The viewing group started the video activity with higher confidence that they would match the experts’ observations (2.94 on a scale of 1 to 4) than did the coding group (2.73), although this difference did not reach statistical significance using a t-test comparison. Students in the viewing condition increased in confidence slightly at subsequent confidence checkpoints, reporting mean confidence of 3.00 at the midpoint and 3.16 at the end of the session.

The coding group followed a somewhat different trajectory. After interacting with the first set of video clips, the coding group’s reported confidence in matching the experts’ observations on the next set of video clips fell to a mean of 2.59. A t-test showed the difference in confidence between the two groups was significant at the midpoint check, t(39) = 2.0842, p = .04. However, at the third measuring point of students’ confidence, the coding group rebounded.

Although the viewing group still had a higher mean confidence score (3.16), the coding group’s mean confidence score after viewing the second set of videos had risen to 2.95, and there was again no significant difference between the confidence of students in the two video groups, t(41) = 1.6766, p = .10. As expected, the confidence of students in the coding condition went down after the first time that they confronted disagreement with the experts. Whether the observed rebounding of confidence indicates a trend would require a longer implementation with more points at which to observe learners’ confidence (see Figure 3).

Figure 3. Mean self-ratings of confidence of viewing and coding groups that they would match experts’ noticing.


Conclusions and Implications

Although video has been a part of teacher education for decades, recent research on advanced video activities, such as video annotation and video clubs, promises a new level of video use in preparing preservice teachers to become reflective practitioners. Of course, many issues must be resolved in the design and implementation of innovative video activities. In this exploratory study, we started with the well-researched and sophisticated activity of video annotation and then made three adaptations to the video annotation method (near-peer video; expert feedback; video study approach) and also implemented it with early-stage rather than late-stage teacher education students.

No doubt, the numerous adjustments we made, along with the nonstandard research measures we used, limited the generalizability of our findings. However, we demonstrated the flexibility and viability of video annotation activities. We intend to continue a program of design research in which we systematically vary some of the numerous design and implementation elements as we continue to use the method. In the meantime, the video annotation method and the adaptations we explored offer interesting ideas to teacher educators for classroom or online video-based activities.

One of the adaptations was to use near-peer rather than self-video to practice critical observation. Video of preservice teachers from earlier cohorts is readily available in many teacher education programs, with permissions already in place to use it for instructional purposes. In future studies, we plan to further investigate the acceptance of near-peer video by teacher educators and preservice teachers. We believe that critical observation of near-peer video, used in the right ways, is a way to scaffold self-observation and to lessen the emotional impact of video confrontation.

The second adaptation we made to the video annotation method was using experts’ observations of the same video segments as feedback to learners. This strategy can be done live in a teacher education classroom or can be incorporated into an interactive video module that teacher education students complete as self-directed learning. For many teacher educators, watching video clips and writing comments is easier than acting as a Subject Matter Expert for an instructional program. The strategy can be an easy and natural way both to extract and share teaching expertise.

However, anyone using the expert feedback approach should be aware that different experts, especially those with experience and expertise in different pedagogical or content areas, are likely to make different critical observations. Indeed, watching the same clips of classroom teaching but with the comments of different experts can be beneficial to preservice teachers.

The third adaptation we made to the video annotation method was the only one investigated experimentally. Our primary treatment consisted of the video annotation with expert feedback method (coding) that included teacher education students writing their video-based observations before being shown the experts’ observations. This approach has great potential. Indeed, we intend to conduct further studies with more advanced preservice teachers and more involved implementations than in this study.

In this exploratory study, however, investigating a lower overhead version of the innovative method appeared to be valuable to teacher educators. We removed the aspect that introduced the most complexity while maintaining the key elements of near-peer video and expert task feedback. In this particular study, the simplified guided video viewing activity led to significantly better expert-novice alignment on the paper-based posttest of classroom noticing. The more sophisticated video coding activity based on methods that have improved self-reflection in late-stage preservice teachers did not seem to have much learning impact on these early-stage teacher education students.

We do not conclude that the guided video viewing activity is better than the video coding activity, only that it appears to be more appropriate for the particular target learners, instructional context, and learning outcomes defined in this project. Both interactive video approaches have potential to amplify preservice teachers’ learning from video-based observation. Indeed, the simplicity of the guided video viewing activity may help overcome the resistance that Shepherd and Hannafin (2008, as cited in Rich & Hannafin, 2009, p. 64) encountered from teacher education faculty, preservice teachers, and cooperating teachers to using video annotation tools for analyzing the teaching practice of student teachers.

Incorporating simpler video observation activities early in teacher education may lead to greater acceptance of more advanced video observation activities, such as video annotation and video clubs, during student teaching and professional practice, preparing new teachers for an era of accountability that increasingly relies on video (Rich & Hannafin, 2009).

In summary, the interactive video approaches developed in this project used video of near-peer preservice teachers to trigger the observations of both experts and novices, with the experts’ observations used to guide preservice teachers’ classroom awareness. Both interactive video approaches can be used in traditional classroom settings to generate group discussion and can also be developed as standalone, self-paced learning activities that can be delivered on a learning management system, such as Blackboard, or an electronic portfolio system, such as LiveText. With high-stakes video-based assessment of classroom teaching on the rise and technologies for video recording, sharing, and commenting becoming easier to use, this is a fruitful time for teacher educators and researchers to experiment with new ways of using video earlier and better in teacher education.

References

Abell, S. K., Cennamo, K. S., & Campbell, L. (1996). Interactive video cases developed for elementary science methods courses. Tech Trends, 41(3), 20-23.

Berliner, D. C. (1986). In pursuit of the expert pedagogue. Educational Researcher, 15(7), 5-13.

Berliner, D. C. (2004). Describing the behavior and documenting the accomplishments of expert teachers. Bulletin of Science, Technology & Society, 24(3), 200-212. doi:10.1177/0270467604265535

Borko, H., Jacobs, J., Eiteljorg, R., & Pitman, M. E. (2008). Video as a tool for fostering productive discussions in mathematics professional development. Teaching and Teacher Education, 24, 417-436.

Brouwer, N. (2011, April). Imaging teacher learning: A literature review on the use of digital video for preservice teacher education and professional development. Paper presented at the annual meeting of the American Educational Research Association, New Orleans, LA.

Butrymowicz, S. (2012, May 5). Minnesota, 24 other states to test new assessments of teachers-to-be. MinnPost. Retrieved from http://www.minnpost.com/education/2012/05/minnesota-24-other-states-test-new-assessments-teachers-be

Bryk, A. S. (2009, April). Support a science of performance improvement. Phi Delta Kappan, 90(8), 597-600.

Calandra, B., Brantley-Dias, L., Lee, J. K., & Fox, D. L. (2009). Using video editing to cultivate novice teachers’ practice. Journal of Research on Technology in Education, 42(1), 73-94.

Calandra, B., Gurvitch, R., & Lund, J. (2008). An exploratory study of digital video editing as a tool for teacher preparation. Journal of Technology and Teacher Education, 16(2), 137-153.

Cronin, M. W., & Cronin, K. A. (1992). Recent empirical studies of the pedagogical effects of interactive video instruction in “soft skill” areas. Journal of Computing in Higher Education, 3(2), 53-85.

The Danielson Group (2011). The framework for teaching. Retrieved from http://www.danielsongroup.org/article.aspx?page=frameworkforteaching

de Mesquita, P. B., Dean, R. F., & Young, B. J. (2010). Making sure what you see is what you get: Digital video technology and the preparation of teachers of elementary science. Contemporary Issues in Technology and Teacher Education, 10(3), 275-293. Retrieved from https://citejournal.org/vol10/iss3/science/article1.cfm

Emmer, E. T., & Hickman, J. (1991). Teacher efficacy in classroom management and discipline. Educational and Psychological Measurement, 51(3), 755-765.

Ericsson, K. A., Charness, N., Feltovich, P., & Hoffman, R. R. (2006). Cambridge handbook of expertise and expert performance. Cambridge, UK: Cambridge University Press.

Fadde, P. J. (2009). Expertise-based training: Getting more learners over the bar in less time. Technology, Instruction, Cognition, and Learning, 7(2), 171-197.

Fadde, P. J., Aud, S., & Gilbert, S. (2009). Incorporating a video editing activity in a reflective teaching course for preservice teachers. Action in Teacher Education, 31(1), 75-86.

Fuller, F. F., & Manning, B. A. (1973). Self-confrontation reviewed: A conceptualization for video playback in teacher education. Review of Educational Research, 43(4), 469-528.

Greenwalt, K. A. (2008). Through the camera’s eye: A phenomenological analysis of teacher subjectivity. Teaching and Teacher Education, 24, 387-399.

Hannafin, M. J., Shepherd, C. E., & Polly, D. (2010). Video assessment of classroom teaching practices: Lessons learned, problems and issues. Educational Technology, 50(1), 32-37.

Harford, J., & MacRuairc, G. (2008). Engaging student teachers in meaningful reflective practice. Teaching and Teacher Education, 24, 1884-1892.

Heintz, A., Borsheim, C., Caughlan, S., Juzwik, M. M., & Sherry, M. B. (2010). Video-based response & revision: Dialogic instruction using video and web 2.0 technologies. Contemporary Issues in Technology and Teacher Education, 10(2), 175-196. Retrieved from https://citejournal.org/vol10/iss2/languagearts/article2.cfm

Kersting, N. (2008). Using video clips of mathematics classroom instruction as item prompts to measure teachers’ knowledge of teaching mathematics. Educational and Psychological Measurement, 68(5), 845-861.

Kucan, L., Palincsar, A. S., Khasnabis, D., & Chang, C-i. (2009). The video viewing task: A source of information for assessing and addressing teacher understanding of text-based discussion. Teaching and Teacher Education, 25, 415-423.

Kurz, T. L., & Batarelo, I. (2010). Constructive features of video cases to be used in education. Tech Trends, 54(5), 46-52.

Mayall, H. J. (2010). Integrating video case studies into a literacy methods course. International Journal of Instructional Media, 37(1), 33-42.

McIntyre, J. D., & Pape, S. (1993). Utilizing video protocols to enhance teacher reflective thinking. Teacher-educator, 28(3), 2-10.

Norton, A., McCloskey, A., & Hudson, R. A. (2011). Prediction assessments: Using video-based predictions to assess prospective teachers’ knowledge of students’ mathematical thinking. Journal of Mathematics Teacher Education, 14(4), 305-325. doi:10.1007/s10857-011-9181-0

Rich, P. J., & Hannafin, M. J. (2008). Decisions and reasons: Examining preservice teacher decision-making through video self-analysis. Journal of Computing in Higher Education, 20(1), 62-94.

Rich, P. J., & Hannafin, M. (2009). Video annotation tools: Technologies to scaffold, structure, and transfer. Journal of Teacher Education, 60(1), 52-67.

Rich, P. J., & Tripp, T. (2011). Ten essential questions educators should ask when using video annotation tools. Tech Trends, 55(6), 16-24.

Rosaen, C. L., Lundeberg, M., Cooper, M., Fritzen, A., & Terpstra, M. (2008). Noticing noticing: How does investigation of video records change how teachers reflect on their experiences? Journal of Teacher Education, 59(4), 347-360.

Rumelhart, D. E., & Norman, D. A. (1978). Accretion, tuning, and restructuring: Three modes of learning. In J. W. Cotton & R. L. Klatzky (Eds.), Semantic factors in cognition (pp. 37-53). Hillsdale, NJ: Erlbaum.

Santagata, R., & Angelici, G. (2010). Studying the impact of the lesson analysis framework on preservice teachers’ abilities to reflect on videos of classroom teaching. Journal of Teacher Education, 61(4), 339-349.

Schön, D. (1987). Educating the reflective practitioner. San Francisco, CA: Jossey-Bass.

Sherin, M. G. (2007). The development of teachers’ professional vision in video clubs. In R. Goldman, R. Pea, B. Barron, & S. Derry (Eds.), Video research in the learning sciences (pp. 383-395). Hillsdale, NJ: Erlbaum.

Sherin, M. G., & Han, S. Y. (2004). Teaching learning in the context of a video club. Teaching and Teacher Education, 20, 163-183.

Sherin, M. G., Linsenmeier, K. A., & van Es, E. A. (2009). Selecting video clips to promote mathematics teachers’ discussion of student thinking. Journal of Teacher Education, 60(3), 213-230.

Sherin, M. G., & van Es, E. A. (2005). Using video to support teachers’ ability to notice classroom interactions. Journal of Technology and Teacher Education, 13(3), 475-491.

Shulman, L. S. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4-14.

Smith, D., McLaughlin, T., & Brown, I. (2012). 3-D computer animation vs. live-action video: Differences in viewers’ response to instructional vignettes. Contemporary Issues in Technology and Teacher Education, 12(1). Retrieved from https://citejournal.org/vol12/iss1/general/article1.cfm

So, W. W-m., Pow, J. W-c., & Hung, V. H-k. (2009). The interactive use of a video database in teacher education: Creating a knowledge base for teaching through a learning community. Computers & Education, 53, 775-786.

Star, J. R., & Strickland, S. K. (2008). Learning to observe: Using video to improve preservice mathematics teachers’ ability to notice. Journal of Mathematics Teacher Education, 11, 107-125.

Tripp, T., & Rich, P. (2012). Using video to analyze one’s own teaching. British Journal of Educational Technology, 43(4), 678-704.

van Es, E. A., & Sherin, M. G. (2002). Learning to notice: Scaffolding new teachers’ interpretations of classroom interactions. Journal of Technology and Teacher Education, 10(4), 571-596.

van Es, E. A., & Sherin, M. G. (2008). Mathematics teachers’ “learning to notice” in the context of a video club. Teaching and Teacher Education, 24, 244-276.

Wang, J., & Hartley, K. (2003). Video technology as a support for teacher education reform. Journal of Technology and Teacher Education, 11(1), 105-138.

Wong, S. L., Yung, B. H. W., Cheng, M. W., Lam, K. L., & Hodson, D. (2006). Setting the stage for developing preservice teachers’ conceptions of good science teaching: The role of classroom videos. International Journal of Science Education, 28(1), 1-24.

Author Information

Peter Fadde

Southern Illinois University


Patricia Sullivan

Purdue University

